by Richard Saumarez

Many continuous signals are sampled so that they can be manipulated digitally. We assume that the train of samples in the time domain gives a true picture of what the underlying signal is doing, but can we be sure that this is true and the signal isn’t doing something wildly different between samples? Can we reconstruct the signal between samples and, more important, can we tell if the signal has been incorrectly sampled and is not a true representation of the signal?

This is one of the most basic ideas in signal processing and is worth discussing because it is regularly abused. A number of us have been caught out by this problem when it occurs rather subtly, so this is a cautionary tale. I will illustrate it using temperature records and suggest that it is not a trivial problem.

A common method of expressing climate temperature data is to take monthly averages. This seems a perfectly reasonable thing to do; after all, an average is simply an average. If we wanted to compare the mean July temperature in Anchorage with that in Las Vegas using conventional statistics, it presents no problems. But when the averages are treated as a time series, or as the representation of the underlying continuous signal, problems can occur.

The number of samples required to describe a varying signal is determined by the Nyquist sampling theorem, which states that the sampling frequency must be at least twice the highest frequency in the Fourier series representation of the signal. If this is done, the signal is correctly sampled and in principle the intermediate signal between samples can be reconstructed perfectly. (In practice there are some limitations and trade-offs that stem from estimating the Fourier series of an arbitrary signal.)

However, if the signal is under-sampled, it is irretrievably corrupted and is said to be aliased. This is well understood in many fields and is absolutely basic (Signals 101). If one has, say, an audio signal that we wish to record digitally, we would wish to resolve ~20 kHz, the highest audible frequency, and so we would record at a sampling frequency of at least 40 kHz. There may be higher frequencies around during the recording, although we can’t hear them, or artefacts such as clicks, so the analogue signal is filtered with an anti-aliasing, low pass filter to remove high frequency components before sampling. Alternatively, it is sampled at a very high frequency, filtered digitally to simulate the anti-aliasing filter and finally re-sampled, or “decimated”, at 40 kHz. Although this discussion is based on signals in time, the concept applies to any sampled system: images, computed tomographic imaging, temperature over the surface of the Earth and so on.

Difficulties arise when either one can’t do this because the measurement system doesn’t allow it or the problem of aliasing isn’t recognised. In this case one can be led, unsuspecting, up a long and tortuous garden path.

Sampling a signal is equivalent to multiplying a continuous function by an equally spaced train of impulses (figure 1).

Since the Nyquist theorem is stated in terms of frequency, we have to consider what sampling does to the spectrum of the signal. I am taking a short cut by going straight to the discussion of a sampled signal, rather than through the long-winded formal theory.

The spectrum of a signal is represented in both positive and negative frequency. Computation of Fourier series coefficients is a correlation with a sine wave and a cosine wave of a particular frequency and

cos(wt) = ½ [exp(jwt) + exp(-jwt)] and sin(wt) = [exp(jwt) – exp(-jwt)] / (2j)

Therefore the spectrum of a cosine wave with an amplitude of 1 and frequency wf is (½, 0) at a frequency of wf and (½, 0) at a frequency of -wf, writing each coefficient as (real, imaginary). Similarly, a sine wave of the same frequency has a spectrum of (0, -½) at wf and (0, ½) at -wf, i.e. the negative frequency component of the spectrum is the complex conjugate of the positive frequency component.
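The coefficients quoted above can be checked numerically. The following is a minimal sketch, assuming the usual analysis convention c_k = (1/N) Σ x[n] exp(-j2πkn/N); the record length `N`, the tone frequency `CYCLES` and the helper name `fourier_coefficient` are illustrative choices, not anything taken from the post. With the opposite sign in the exponent, the signs of the sine's imaginary parts would swap.

```python
import cmath
import math

def fourier_coefficient(samples, k):
    """Complex coefficient c_k = (1/N) * sum of x[n] * exp(-j*2*pi*k*n/N)."""
    n_samples = len(samples)
    total = sum(x * cmath.exp(-2j * math.pi * k * n / n_samples)
                for n, x in enumerate(samples))
    return total / n_samples

N = 64        # samples in the record (illustrative)
CYCLES = 5    # the test tone completes 5 whole cycles in the record (illustrative)

cosine = [math.cos(2 * math.pi * CYCLES * n / N) for n in range(N)]
sine = [math.sin(2 * math.pi * CYCLES * n / N) for n in range(N)]

# Coefficients at the positive and negative frequency of each tone.
cos_pos = fourier_coefficient(cosine, CYCLES)
cos_neg = fourier_coefficient(cosine, -CYCLES)
sin_pos = fourier_coefficient(sine, CYCLES)
sin_neg = fourier_coefficient(sine, -CYCLES)

print("cosine:", cos_pos, cos_neg)   # both purely real, about 0.5
print("sine:  ", sin_pos, sin_neg)   # purely imaginary, a conjugate pair
```

The cosine gives two real components of ½, and the sine gives a purely imaginary conjugate pair, which is the symmetry the text relies on.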

The spectrum of the sampling process itself is an infinite train of impulses in frequency domain spaced at intervals equal to the frequency of the sampling process (figure 1).

Since sampling is multiplication of a continuous signal by a train of impulses, we can obtain the sampled signal spectrum by convolving their two spectra. The spectra of a correctly sampled signal and an aliased signal are shown below in figure 2:

The highest frequency that can be determined is half the sampling frequency, known as the Nyquist frequency. In the case of monthly temperature series, the highest frequency that can be resolved is (2 months)⁻¹, i.e. one cycle every two months.

Although this is the theoretical minimum, in practice one generally samples at a higher rate than the theoretical minimum. Therefore in most correctly sampled signals, there will be a gap in the spectrum around the Nyquist frequency, as in the upper drawing of figure 2. The spectrum is then resolvable because there is no overlap between its representation at w=0 and its reflection about the sampling frequency. In an aliased signal, high frequency components of the signal overlap and are summed (as complex numbers) with low frequency components, so the spectrum becomes irresolvable and the signal is corrupted. This has two rather important implications:

a) One cannot interpolate between samples to get the original signal.

b) A high frequency aliased component in the signal will appear as a lower frequency component in the sampled signal.
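Point (b) can be illustrated with a few lines of arithmetic. This is a minimal sketch, assuming monthly sampling (12 samples per year) and a made-up component at 10 cycles per year; the function names are illustrative. Once sampled, that component is indistinguishable from one at 2 cycles per year.

```python
import math

SAMPLES_PER_YEAR = 12   # monthly sampling, so the Nyquist frequency is 6 cycles/year

def sample_cosine(cycles_per_year, n_samples):
    """Sample cos(2*pi*f*t) at monthly intervals, with t measured in years."""
    return [math.cos(2 * math.pi * cycles_per_year * n / SAMPLES_PER_YEAR)
            for n in range(n_samples)]

def alias_frequency(f, fs):
    """Frequency in [0, fs/2] to which a real sinusoid at f folds when sampled at fs."""
    folded = f % fs
    return min(folded, fs - folded)

apparent = alias_frequency(10, SAMPLES_PER_YEAR)   # 10 cycles/year folds to 2 cycles/year
high = sample_cosine(10, 24)                       # two years of monthly samples
low = sample_cosine(apparent, 24)

# The two sets of samples agree to rounding error, so no later processing can tell them apart.
max_difference = max(abs(a - b) for a, b in zip(high, low))
print(apparent, max_difference)
```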

Figure 2 Spectrum of a correctly sampled signal (upper) and an aliased signal (lower).


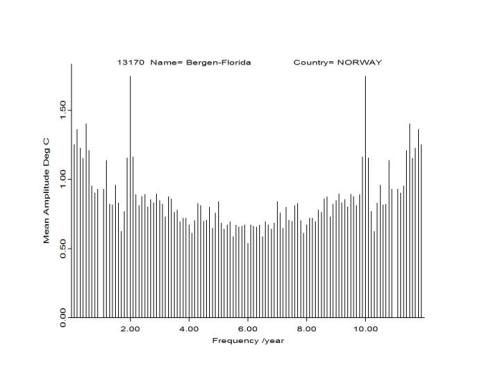

Figure 3. Mean amplitude spectrum of 10-year records from Bergen, Norway. The 1-year component is suppressed, as it is huge compared to the other components.

Are Temperature records aliased?

Out of curiosity I looked at the HADCRUT series. I extracted intact 10-year records from the series that contained no missing data and calculated the amplitude spectrum (after trend removal and windowing), for individual stations (Figure 3) and the mean of all valid records, shown in figure 4.

Figure 4 Ensemble amplitude spectrum of 5585 10 year monthly temperature records.


These certainly look aliased at first sight, but without access to daily records it is impossible to be sure that aliasing is occurring; the temperature might be fortuitously sampled at exactly the correct frequency.

This raises two questions:

a) Can one explain how the temperature record has become aliased?

b) Does it really matter?

[Or, in translation: is this simply a load of pretentious, flatulent, obfuscating, pseudo-academic navel-gazing? I will show that, yes, aliasing is likely to be present and it may matter.]

Why are temperature records aliased?

Temperature records are constructed by taking daily, or more frequent, observations and taking their average over each month. In signal processing terms this is a grotesque operation.

Forming the average of a train of samples is a filter. One has convolved the signal with an impulse response that consists of 30 equal weight impulses and this filter has an easily calculable frequency response, shown in figure 5:

Note that the first zero is at 1/month. If this filter were run over every daily observation, one would get a low pass filtered version of the daily signal. Clearly, this does not attenuate all the high frequency components in the daily signal.

What is then done is that the filtered daily signal is sampled at monthly intervals, so the Nyquist frequency is 6 cycles per year (a period of 1/6 of a year, i.e. two months). If components of the signal with higher frequencies than the Nyquist frequency exist and are not attenuated by the averaging filter, these will become aliased components.
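The gain of the averaging filter at the frequencies that matter can be worked out directly. The sketch below assumes a 30-day equal-weight running mean applied to daily data and treats a month as exactly 30 days; it uses the standard closed-form magnitude response |sin(πfN) / (N sin(πf))| with f in cycles per day. The function name and the chosen test frequencies are illustrative.

```python
import math

def moving_average_gain(freq_cycles_per_day, n_days=30):
    """Magnitude response of an n_days equal-weight running mean of daily samples.

    Valid for frequencies strictly between 0 and 0.5 cycles/day, plus f = 0.
    """
    if freq_cycles_per_day == 0:
        return 1.0
    x = math.pi * freq_cycles_per_day
    return abs(math.sin(n_days * x) / (n_days * math.sin(x)))

gain_at_nyquist = moving_average_gain(1 / 60)     # one cycle per two months
gain_at_first_zero = moving_average_gain(1 / 30)  # one cycle per month
gain_at_sidelobe = moving_average_gain(1 / 20)    # 1.5 cycles per month

print(round(gain_at_nyquist, 3), round(gain_at_sidelobe, 3))
```

Under these assumptions the filter still passes roughly 64% of the amplitude at the monthly Nyquist frequency and about 21% at the first sidelobe beyond the zero, so components above the Nyquist frequency survive the averaging and are available to alias when the series is sampled monthly.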

Figure 5 Frequency response of 30-day average.

To investigate this I have modelled a daily temperature record with the following components:

1) A basic sinusoid, -cos(2 pi t), where t is years starting on January 1st, to model the yearly cycle with an amplitude of 30 °C.

2) This is modified by a random amplitude modulation of 2.0 °C to simulate variability of peak summer and minimum winter temperatures.

3) A 15-day random modulation of phase so that spring and autumn can come a bit early or late.

4) A heat wave during summer that can occur randomly from the beginning of June until the end of August and last a random length of between 5 and 15 days. Its amplitude is random, between 0.5 and 5 °C. A similar “cold snap” is added during December and January.

5) A random, normally distributed measurement error with a standard deviation of 0.25 °C.

6) Rounding of the temperature reading to the nearest 0.1 °C.

One would not claim this is an exact model of a temperature station, but it contains features that are slowly and rapidly varying that would give the signal some of its properties. Any rapidly moving components, for example short temperature excursions, will generate high frequencies, while the modulations will generate harmonics of the yearly cycle.
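A rough sketch of a generator with the six ingredients listed above is given here. It is not the code used for the figures. The uniform distributions, the choice of one new amplitude and phase per calendar year, placing each cold snap wholly in January or wholly in December, and ignoring leap years are all assumptions made for illustration. A model built this crudely has jumps at year boundaries, so it would need more care before being used to reproduce the spectra shown.

```python
import math
import random

DAYS_PER_YEAR = 365  # leap years ignored (assumption)

def add_excursion(temps, rng, first_day, last_day, sign):
    """Add one heat wave (sign=+1) or cold snap (sign=-1) lying inside [first_day, last_day]."""
    length = rng.randint(5, 15)        # duration in days
    size = rng.uniform(0.5, 5.0)       # size in deg C
    start = rng.randint(first_day, last_day - length + 1)
    for day in range(start, start + length):
        temps[day] += sign * size

def simulate_year(rng):
    """One year of synthetic daily temperatures in deg C."""
    amplitude = 30.0 + rng.uniform(-2.0, 2.0)    # items 1 and 2 (uniform modulation assumed)
    shift_days = rng.uniform(-15.0, 15.0)        # item 3 (uniform phase shift assumed)
    temps = [-amplitude * math.cos(2 * math.pi * (day - shift_days) / DAYS_PER_YEAR)
             for day in range(DAYS_PER_YEAR)]
    add_excursion(temps, rng, 151, 242, +1)      # item 4: 1 June to 31 August
    if rng.random() < 0.5:                       # item 4: cold snap in January or December
        add_excursion(temps, rng, 0, 30, -1)
    else:
        add_excursion(temps, rng, 334, 364, -1)
    # Item 5: measurement error; item 6: rounding to the nearest 0.1 deg C.
    return [round(t + rng.gauss(0.0, 0.25), 1) for t in temps]

rng = random.Random(0)     # fixed seed so the sketch is repeatable
record = []
for _ in range(10):        # a 10-year daily record
    record.extend(simulate_year(rng))

print(len(record), min(record), max(record))
```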

The spectrum of a 10-year record is shown in figure 6, with the frequency response of the averaging filter.

Figure 6 Spectrum of a 10-year record of simulated temperature with the amplitude response of the averaging process superimposed upon it.

Figure 7 The spectrum after applying a 30-day averaging filter. Note: the spectrum of this signal sampled at 1/month is obtained by reflecting this spectrum around 1/month (red line) and adding it (as a complex number) to the original.


Once this spectrum is filtered, as shown in figure 7, the spectrum of the monthly signal is obtained by convolving it with the spectrum of the monthly sampling process, which is a train of impulses spaced 1/month apart on the frequency axis.

This results in a severely aliased spectrum which is shown in figure 8 and this suggests that the HADCRUT data is aliased.

Figure 8 The calculated spectrum after sampling at 1-month intervals. Note that this is aliased. The simple yearly cycle has been subtracted; the components shown around 1 year⁻¹ are due to modulation.

Does it matter?

One important feature of aliasing is that it creates spurious low frequency signals that can become trends. Using 150-year model records, this can be examined by creating yearly anomaly signals.

Figure 9 Spurious trends in the error between the true integrated yearly signal and the value obtained by taking monthly and then yearly averages.


The daily, simulated signals (I have constructed them carefully to ensure that they aren’t aliased) are reduced to monthly averages and then used to form yearly errors between the processed signal and the integral of the daily signal, which is trend free. A particularly bad example of this is shown in Figure 9.

Note that the magnitudes of these trends are significant in terms of the variability of temperature records and that they are artefacts created by processing records where there are no trends.

Repeating this process for 100000 records, the distribution of the magnitude and duration of these spurious trends can easily be estimated and is shown in a 2d histogram in figure 10. The green area is the empirical 95% limit. The marginal distributions, shown in red are not on the same vertical (probability) scale but merely indicate the shape of the distribution.

Figure 10 Histogram of trend magnitude and duration.


Therefore one can conclude that aliasing in temperature records may be important.

Given daily records, it is preferable to integrate them in order to get average temperatures, paying careful attention to the effects of the finite precision of a thermometer, which is probably a maximum of 1:150 over most temperature ranges, but is more typically 1:100. To get a monthly time series, one should filter the daily record with a carefully designed filter, which will inevitably have a long impulse response, to obtain a smoothed daily record. This can then be sampled at monthly intervals.

There is a further problem in using data that may be aliased as inputs to models. The effects will clearly depend on the model, but as an example, I made a simple linear model of a system with three negative feedbacks with different feedback gains and time constants. This is driven with low pass filtered broadband noise (not aliased), and also with the same input signal decimated to create an aliased input. The results are shown below. The true output is shown in black; the green trace is for the input sampled at 25% below the Nyquist frequency, and the red at 50% below. If you wanted to extract the parameters of the model from the aliased input, they would of course be wrong.

Predicting what would happen in more complex situations, for example using principal component analysis when some components were aliased and some were not, is difficult (even a nightmare). However, any model that is constructed using sampled data should be viewed critically.

Figure 11 Output of a simple feedback model driven with a correctly sampled signal (black) and increasingly aliased versions as inputs (green and red).


Aliasing is a problem that rears its ugly head in many different fields. In terms of pure analogue time-domain signal conversion, the procedure to prevent it is well-known and straightforward – anti-aliasing filters. Problems occur when you can’t simply filter out high frequency components. For example, this was a problem in early generation CT scanners where abrupt transitions in bone and soft tissue radio density caused aliasing because they could not be sampled adequately by the x-ray beams used to form the projections.

The key to dealing with aliasing is to recognise it. Given any time series, one’s first question should be “Is it aliased?”

JC comment: My concerns regarding aliasing relate particularly to the surface temperature data, especially how missing data is filled in for the oceans. Further, I have found that running mean/moving average approaches can introduce aliases; I have been using a Hamming filter when I need one for a graphical display. This whole issue of aliasing in the data sets seems to me to be an underappreciated issue.

How many will “get it”? Perhaps a detailed example could be of help.

Not detailed, but here is a simple demonstration.

In electronics this is resolved easily by placing an “anti-aliasing” filter before the ADC (analog-to-digital converter) which performs the interval sampling. This is simply a low pass filter that removes any part of the signal spectrum above half the sampling rate of the ADC (the Nyquist frequency).

This is a proper and necessary step to trust the data you analyze.

If you have a signal with a lot of variation and you want to decimate it (reduce the data set size), you should first average it with a moving window filter (which is a low pass filter) or apply a low pass filter of your choice.

Reading a rain gauge once a day is actually a low pass filter. It gives you the total rain per day instead of, say, checking it every hour. Obviously if you summed 24 hourly readings you would get identical results to the daily gauge reading. However, the classic aliasing mistake would be to take just one of the hourly readings, say 2 pm, and multiply it by 24 to get a daily total. This is clearly not equivalent and is subject to error.
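The rain gauge example can be put into numbers. This is a minimal sketch with made-up hourly figures (a three-hour shower in the early morning); the variable names and values are purely illustrative.

```python
# Hypothetical hourly rainfall in mm for one day: a shower between 02:00 and 05:00.
hourly_rain_mm = [0.0] * 24
hourly_rain_mm[2] = 1.5
hourly_rain_mm[3] = 4.0
hourly_rain_mm[4] = 2.0

# Summing every hour is equivalent to reading the gauge once a day.
daily_total = sum(hourly_rain_mm)

# The aliasing mistake: take the single 2 pm reading and scale it up to a day.
naive_estimate = hourly_rain_mm[14] * 24

print(daily_total, naive_estimate)   # 7.5 mm fell, but the 2 pm snapshot implies none
```

Had the shower fallen at 2 pm instead, the same shortcut would have overestimated the day's rain many times over, which is why the single-reading approach cannot be trusted in either direction.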

All things being equal, it is usually best to not throw away data and analyze what you have in full.

I believe, and in my experience find to be true, that many (most?) scientists are much more lacking in basic signal processing skills than the public would assume. In fact it was Mann’s lack of math skills, so carefully identified by McIntyre, that led me down the skeptic trail and revealed to me how sloppily some academic research is done, and how defensive academics are about admitting basic errors, to the detriment of the whole of science.

Stern Caution:

Aliasing doesn’t necessarily imply sampling error.

Earth itself aliases.

Vaughan, P.L. (2011). Shifting Sun-Earth-Moon Harmonies, Beats, & Biases.

http://wattsupwiththat.com/2011/10/15/shifting-sun-earth-moon-harmonies-beats-biases/

http://wattsupwiththat.files.wordpress.com/2011/10/vaughn-sun-earth-moon-harmonies-beats-biases.pdf

To make it more complicated, the aliasing integrates (via circulation).

Don’t forget about spatial aliasing. Too many signal processing expert contributors appear hardwired to the time-only dimension.

Remember that marginal temporal & spatial summaries differ fundamentally from joint spatiotemporal summaries.

There’s a spectacular logjam on the mainstream river to climate enlightenment, caused by failure to recognize the spatiotemporal version of Simpson’s Paradox.

Might as well call it Simpson’s Logjam.

Best Regards.

Don’t forget about spatial aliasing. Too many signal processing expert contributors appear hardwired to the time-only dimension.

There’s a spectacular logjam on the mainstream river to climate enlightenment, caused by failure to recognize the spatiotemporal version of Simpson’s Paradox.

Might as well call it Simpson’s Logjam.

Well said.

I think that the term “aliasing” dramatically understates the problem.

I think what you meant to say is that obtaining contradictory results does not always imply erroneous results due to sampling error.

Richard S,

Excellent post. Shifting the view to signal processing refocuses the discussion on real data and algorithm shortcomings.

And DSP hugely overlaps with statistics. This stuff all leads back to statistics.

I’ll second that!

–>”… I will illustrate it using temperature records and suggest that it is not a trivial problem….”

Ditto re the measurement of atmospheric CO2 at Mauna Loa, the site of an active volcano where the concentration can swing 600 ppm in a single day–e.g., Tim Ball: “Elimination of data occurs with the Mauna Loa readings, which can vary up to 600 ppm in the course of a day. Beck explains how Charles Keeling established the Mauna Loa readings by using the lowest readings of the afternoon. He ignored natural sources, a practice that continues. Beck presumes Keeling decided to avoid these low level natural sources by establishing the station at 4000 meters up the volcano. As Beck notes “Mauna Loa does not represent the typical atmospheric CO2 on different global locations but is typical only for this volcano at a maritime location in about 4000 m altitude at that latitude.” (Beck, 2008, “50 Years of Continuous Measurement of CO2 on Mauna Loa” Energy and Environment, Vol 19, No.7.) Keeling’s son continues to operate the Mauna Loa facility and as Beck notes, “owns the global monopoly of calibration of all CO2 measurements.” Since Keeling is a co-author of the IPCC reports they accept Mauna Loa without question.” (“Time to Revisit Falsified Science of CO2,” December 28, 2009)

Judith said

‘My concerns regarding aliasing relate particularly to the surface temperature data, especially how missing data is filled in for the oceans’.

Using the word ‘missing’ hardly does justice to the way that some grid cells in SSTs are occupied by a handful of observations in a year which then fills in data for that cell for the entire year.

Or by missing did you mean ‘made up?’

Tonyb

Yeah yeah complain away, but I bet many of the skeptics that will scream bloody murder about missing grid cells in the temperature records will nevertheless be referencing hadcrut warming trends in the early 20th century in argument…

You never hear a skeptic wonder if the early 20th century warming perhaps never happened….

Hmm. I wonder if the early 20th century warming never happened, and it actually cooled half a degree to the 1940s? That would mean we had just returned to normal…

Happy?

Well, this agnostic has said several times that the data are so dodgy that one cannot confidently say anything about global warming, other than it might have occurred. And if that is the case, we cannot say that whatever happened in the 20th century was ‘unprecedented’, either.

Well it may be that some of the apparent cooling post-1940 never really happened…I seem to remember a series of posts at CA and maybe here about bucket adjustments…

There are indeed spurious trends in published surface temperature series, but they aren’t introduced by aliasing. They’re introduced by “corrections” applied to the raw data. Details on request.

An interesting analysis of coastal versus non-coastal raw data is presented at http://joannenova.com.au/2011/10/messages-from-the-global-raw-rural-data-warnings-gotchas-and-tree-ring-divergence-explained/#more-18275

“… is this simply a load of pretentious, flatulent, obfuscating, pseudo-academic navel-gazing…”

Rather than unabashed Omphaloskepsis, it is far more likely that wilful ignorance and unconscious incompetence underlies most of what we observe in the climatology of global warming alarmists, which outside Western civilization has been likened to the science of ancient astrology.

Rather than looking at simulated data the obvious thing to do is to look at some real daily data and see if this actually matters in practice. You can get daily data from http://climate.usurf.usu.edu/products/data.php

Of course there are subtle issues about how daily data is acquired, but that’s to some extent a different question.

Thanks. I did this after you suggested it, but I have not done an exhaustive study as the data is difficult to access in a convenient form (or at least I haven’t found out how to do it). One difficulty is dealing with dropouts, and I have simply linearly interpolated across them.

I agree that this is important when looking at trends, but to do this for the whole US data set would be a major undertaking.

As one might expect, the effects vary from station to station. Wapato shows this effect with a 0.35 degree excursion between the data (as integrated) over a 20 year period. One problem is that there is a bias in the data, which is recorded at 1 degree intervals, and the integral drifts over time. This is, I suppose, inevitable when the data has been rounded (or truncated?).

For simple dropouts, you can construct an indicator series that is 1 where you have data and 0 where you don’t. The transform of that gives you something that gets convolved with your signal. It’s a generalisation of the finite sample length problem, and if serious might be addressed by some of the same methods, like tapering.
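The effect of such an indicator series can be seen in a toy case. This is a minimal sketch, assuming a pure cosine that fits a whole number of cycles into the record and a single ten-sample dropout; the record length, gap position and the plain O(N²) `dft` helper are illustrative choices. Multiplying by the indicator smears energy from the true spectral line into every other bin.

```python
import cmath
import math

def dft(samples):
    """Direct discrete Fourier transform, normalised by the record length."""
    n = len(samples)
    return [sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)) / n
            for k in range(n)]

N = 64
CYCLES = 4
signal = [math.cos(2 * math.pi * CYCLES * i / N) for i in range(N)]

# Indicator series: 1 where data exist, 0 across a ten-sample dropout.
indicator = [0.0 if 20 <= i < 30 else 1.0 for i in range(N)]
observed = [s * m for s, m in zip(signal, indicator)]

def leakage(samples):
    """Total spectral magnitude outside the two bins that hold the true cosine."""
    spectrum = dft(samples)
    return sum(abs(c) for k, c in enumerate(spectrum) if k not in (CYCLES, N - CYCLES))

leakage_full = leakage(signal)        # essentially zero for the complete record
leakage_observed = leakage(observed)  # clearly non-zero once the dropout is imposed

print(leakage_full, leakage_observed)
```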

A bigger issue is when dropouts are due to things like station moves, when the error is a step function or something worse. The sharp edges of the step contribute high frequencies, and its persistence low ones. As you’ve already done, you can Monte Carlo possible distributions (many of them are known or obvious) and look at the uncertainty this adds.

Quantisation error is also tricky. I’m not convinced that the usual assumptions of independent, uniform distributions are valid – particularly after taking anomalies. But I don’t know what you could do about that.

I seem to recall that Lucia Liljegren took a long hard stare at quantisation error and decided that it probably didn’t matter? But I may be misremembering to the point of making this up…

Yep. it doesnt matter either. That was a nice piece of work by Lucia

Yes, I agree it doesn’t matter. It was simply an observation.

“I seem to recall that Lucia Liljegren took a long hard stare at quantisation error and decided that it probably didn’t matter?”

That is one thing that particularly irks me over the way climate scientists behave – and Steve Mosher on this thread is demonstrating it again – and that is this idea that you can say “the errors don’t matter” and everything is alright again.

Even if they don’t make a difference to the conclusion, the errors do matter. It matters if you haven’t checked, and just got lucky. It matters if the data is OK for one purpose but not another, and this isn’t made obvious. It matters if you leap at the throat of everyone who tries to check these things for themselves, or even asks the question without checking, as if it were the conclusion rather than the method that mattered.

It’s interesting and potentially educational to ask the question. If it’s already been thought about and there’s an easy answer, then a shortcut or guide would be gratefully received. But the only time errors “don’t matter” is when they had already been quantified and everybody made aware of it.

I don’t know if quantisation errors matter. You’re combining flaky and intermittent data rounded to the nearest 1 C, putting it through the statistical meat grinder, and deriving results to 0.1 C precision or better. How is that possible? I know there are people who believe that if you just take enough quantised data to average you could resolve individual atoms, but real world errors don’t work that way. Mathematical assumptions and approximations that are good enough for most purposes are not perfect and cannot be pushed indefinitely. How far can you go? How close are you to the edge?

While I tend to agree that the issues raised here are not a big deal for calculating trends (and disagree profoundly with the claim that such trends are necessarily meaningful), trends are not the only thing people are interested in. The same thing happened with Watts’ paper on station siting – everybody leapt on the fact that various large errors contributing to the trend happened to cancel, and ignored everything else. And then said “the errors didn’t matter”.

Have we made sure everybody knows *why* they don’t matter?

Lucia published her code.

rather than argue whether it mattered or not she tried to prove that it didnt.

So, go get her code, have a look. Maybe there is a problem. Maybe not.

But simply because you have an argument ( words on a page) means nothing. It means less than nothing when people have given you the tools and you refuse to use them.

What bothers me is that people forget why we fought for data and code. We fought for it so that people like you could have the tools to either prove your points or not. we fought to give you power. the power to look at the data yourself and process it yourself. Instead, you ignore the tools that people produced for you and resort to words on a page.

man up. write some code and prove Lucia wrong. prove that it does matter and why? I used to think it mattered. hell go back to CA and see the idiot mosher blather on about all these things. working through the problem for yourself changes things. It makes you less tolerant of people who refuse to do the work, even when you make it easy for them.

Mosher, could you provide a link to where Lucia did this? I tried searching her blog, and I didn’t find it.

I think there are two issues here, which I alluded to, but didn’t make clear.

1) If you want to compute a “global mean temperature” during 1960-1970, aliasing of the data doesn’t matter a fig.

2) If you want to use data as an input to drive a model or calibrate it, this is a more subtle problem, which concerns me much more.

Suppose you have a GCM; these models, I believe, are run at 1-hour steps. If you are going to drive them, you need data with a bandwidth of at least (30 min)⁻¹. Such data as we have had, historically, isn’t sampled at anything like that rate. If you are going to use it, you have to filter it heavily and interpolate.

Daily data, unless gathered instrumentally with an anti-aliasing filter, is also aliased, quite badly in fact, because it is full of jumps and rapid processes. Turning this into a mean over a month, or even a year, gets rid of the problem as a statistical observation. However, if you use this data to drive a short term model, you will encounter problems unless you are very careful.

“could you provide a link to where Lucia did this?”

The best I can find is here.

http://rankexploits.com/musings/2009/false-precision-in-earths-observed-surface-temperatures/

There’s a spreadsheet, where Lucia models rounding errors with the usual perfect mathematical properties (0.499999 gets rounded down, 0.500001 gets rounded up) and a link to Chad who does something similar applied to some real data.

They appear to be arguing about something slightly different – the idea that with 1 C quantisation, you cannot get an average to *any* better than 1 C or 0.5 C accuracy. As they say, that’s not true. You *can* improve accuracy by averaging, but not indefinitely.

Maybe there’s something else at Lucia’s I’ve missed?

Richard,

In what circumstances do you think temperature data are used to “drive a model”?

Nullius in Verba, surely there is some other thread or source where Lucia actually examines the problem you discussed. Otherwise, Mosher would have been rudely challenging you based upon a complete fabrication.

Nullius in Verba, a quick addendum. That spreadsheet made me interested in the effect of rounding, so I decided to check it out. Unless my math is off (which it very well could be), rounding values which are normally distributed will actually introduce biases in your results. The magnitude of the biases will cancel out, but it will skew the distribution of your results.

@Nick Stokes.

SB2011 and D2011 use monthly intervals and HADCRUT to drive their “models”. They calculated results at monthly intervals although the processes involved may, I repeat may, have a shorter time scale than that. This is my interpretation of what they said and I may be wrong. As I pointed out in the main post, this will produce some rather odd results, even if you are postulating a lowpass filter as suggested by their first order ODE.

“Unless my math is off (which it very well could be), rounding values which are normally distributed will actually introduce biases in your results.”

The usual analysis takes the distribution of the data (pdf of a Normal, say), chops it into blocks one quantum thick, and then adds all the blocks together. When the pdf changes only slowly compared to the quantisation size (i.e. you quantise at a much finer resolution than the spread of the variable) and it’s not too skewed or spiky, each block is fairly flat and the result is approximately uniform on the interval (-1/2,1/2). You get from that the usual basketful of statistical parameters – zero mean, 1/12 variance, etc. – that allows you to draw conclusions.
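Those textbook figures, and the way they fail when the quantum is coarse compared with the spread, can be checked by simulation. This is a minimal sketch, assuming normally distributed readings rounded to the nearest whole unit; the means, standard deviations, sample size and seed are arbitrary illustrative values.

```python
import random

def rounding_error_stats(mean, sd, n_samples, rng):
    """Mean and variance of (rounded value - true value) for normal data rounded to 1 unit."""
    errors = []
    for _ in range(n_samples):
        x = rng.gauss(mean, sd)
        errors.append(round(x) - x)
    avg = sum(errors) / n_samples
    var = sum((e - avg) ** 2 for e in errors) / n_samples
    return avg, var

rng = random.Random(1)   # fixed seed so the sketch is repeatable

# Spread much wider than the quantum: error is close to uniform on (-1/2, 1/2).
fine_mean, fine_var = rounding_error_stats(15.0, 8.0, 100_000, rng)

# Spread much narrower than the quantum: the error picks up a clear non-zero mean.
coarse_mean, coarse_var = rounding_error_stats(0.3, 0.2, 100_000, rng)

print(fine_mean, fine_var, 1 / 12)
print(coarse_mean, coarse_var)
```

In the first case the mean error is near zero and the variance near 1/12, as the usual analysis assumes. In the second, most readings round to the same value and the error is biased.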

But as you indicate, if the distribution changes quickly compared to the quantisation, you can get a significantly non-uniform distribution, with a non-zero mean. And if you examine it closely enough, it will *never* be exactly uniform.

In the case of temperatures (not temperature anomalies) you get a distribution that looks more like sec(T-T_av) than a bell-shaped normal, with peaks at the ends where the temperature hangs around for a while near the maximum or minimum. You might expect some sharp changes near the ends. However, the middle of the distribution is fairly flat, and even the ends are blurred out, so it’s unlikely to be very obviously non-uniform. You most commonly get problems when you quantise *twice* at different resolutions. (e.g. round to the nearest degree F, then convert to C and round again.)
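The double-quantisation problem mentioned at the end of the paragraph above can be shown by counting. This is a minimal sketch, assuming readings recorded to the nearest whole °F over an arbitrary range of 14 to 86 °F and then converted and rounded to the nearest whole °C. Because 1 °C spans 1.8 °F, some Celsius values collect two Fahrenheit readings and others only one.

```python
from collections import Counter

def fahrenheit_to_rounded_celsius(temp_f):
    """Convert a whole-degree Fahrenheit reading to Celsius and round to a whole degree."""
    return round((temp_f - 32) * 5 / 9)

# Every whole Fahrenheit reading from 14 F (-10 C) to 86 F (30 C); the range is illustrative.
fahrenheit_readings = range(14, 87)
counts = Counter(fahrenheit_to_rounded_celsius(f) for f in fahrenheit_readings)

bins_with_one = sum(1 for c in counts.values() if c == 1)
bins_with_two = sum(1 for c in counts.values() if c == 2)

print(bins_with_one, bins_with_two)
```

Even if the underlying temperatures were perfectly evenly spread, the twice-rounded values would not be: some whole-degree Celsius values turn up twice as often as their neighbours.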

But even with this analysis, it assumes that humans reading a thermometer round according to some exact, constant error distribution. That you can in principle get more and more accuracy by averaging more and more data. Eventually it becomes like the Emperor of China’s nose. You wind up measuring the measurers, and tracking fashions in the metrology instead of the meteorology.

It’s clear that using monthly data, it’s not possible to study processes of shorter time scale, and that using such data influences also results with a time scale of about one month. That has, however, nothing to do with aliasing unless there’s a periodic phenomenon of shorter period that is strong enough to make the average dependent on the relative timing of this phenomenon and the cutoff points of the months used in calculating averages (more generally the effect is present if there’s an autocorrelation that extends significantly to a time lag of one month, but which varies at a faster rate).

Problems of this kind are possible in principle, but very unlikely to be significant enough in comparison with other issues to warrant special handling. Using a filter to smooth the data before it’s used in the analysis, and in particular before another filter is introduced in calculating the average, would only lose information.

Pekka,

Please comment on what I wrote below at 9:40, if you’re interested.

Bill

Very true. If there was say a spike that happened every July 21st where everything momentarily got hotter and the sampling did not pick that up, then it would be an issue. But these are what are referred to as pathological or degenerate cases. One can spend a lot of time tracking down these phantoms if you are so inclined.

Nullius in Verba, thanks for your response. It’s good to know I understood the situation properly. Now if only Mosher could clarify his comment about what Lucia has actually done.

“I agree that this is important when looking at trends, but to do this for the whole US data set would be a major undertaking.”

Judith – sounds like a job for the BEST Team

nonsense.

The data for 26,000 daily temperature stations is easily downloadable.

It takes a while, but the code is all written for you. And it’s free.

It’s as easy as this, using the DailyGhcn package in R:

#### Downloads all the data that has either Tmin or Tmax
library(DailyGhcn)  # the package named above
# Create the working directories if they do not already exist
if (!file.exists(DAILY.QA.DIRECTORY)) dir.create(DAILY.QA.DIRECTORY)
if (!file.exists(DAILY.DATA.DIRECTORY)) dir.create(DAILY.DATA.DIRECTORY)
if (!file.exists(DAILY.FILES.DIRECTORY)) dir.create(DAILY.FILES.DIRECTORY)
if (!file.exists(MONTHLY.DATA.DIRECTORY)) dir.create(MONTHLY.DATA.DIRECTORY)
# Fetch the country list, station inventory and station metadata
cntryFilename <- downloadCountry()
inventoryFilename <- downloadDailyInventory()
metadataFilename <- downloadDailyMetadata()
# Read the inventories of stations reporting daily minimum and maximum temperature
MinInv <- readDailyInventory(elements= "TMIN" )
MaxInv <- readDailyInventory(elements= "TMAX" )
# Keep every station that has either element, then download its daily data
all <- merge(MaxInv,MinInv, by.x = "Id", by.y = "Id", all = TRUE)
dlist <- makeDownloadList(all)
downloadDailyData(dlist)

Takes a day to download 26,000 daily stations from around the world.

It’s raw data with 14 quality control flags.

Removing data that fails QA:

convertRawtoDat()

Doing trends for the whole US is maybe a day’s work, unless you want custom work. Not a big job at all.

Actually, I don’t think that this is quite as simple as you think. Had you noticed aliasing? Daily data is aliased.

If you want to drive or calibrate a model, this is a problem. If you want to integrate the data over time and space, it doesn’t matter.

There are minute-by-minute and hour-by-hour temperature data available by anonymous ftp from http://ftp.cmdl.noaa.gov. I have a program to extract specific data. It’s not refined or turn-key and requires hand-crafting and a ( shudder ) Fortran compiler to be useful. Let me know if I can assist with analyses of the data.

Dan,

I managed to get to the website you linked by typing in ftp://ftp.cmdl.noaa.gov/.

Interesting. I don’t know anything about signal processing, but what if you took one of the longest hourly series, Mauna Loa or Barrow Alaska and did successive filtering on it to try to find the highest relevant frequency and then compared it to a monthly average?

I guess this is what Richard is talking about above at 12:18 pm but it’s too brief, I don’t know….

Could minute-by-minute observations possibly matter in this context (for T, w/r/t signal analysis)? I can see them mattering for some variables… is it reasonable to say a priori that the daily T cycle is the one with the highest frequency?

Replying to your post below.

Let me say that I found aliasing very difficult to understand when I first encountered it. The fine details can be very subtle, even for people who have been trained in the subject.

Aliasing is an unpredictable, non-linear transformation of a continuous signal into a sampled signal. The way this is normally avoided is to use a low-pass filter to remove high frequency components in the signal before sampling it. If you have an analogue electrical signal, this makes the problem easy.

However, if there were an impulse between samples, you would see evidence of this in the sampled signal, because the signal going into the analogue-to-digital converter would have the impulse response of the anti-aliasing filter imposed upon it.

If you have a signal that is “sampled” by some other means, i.e.: reading a thermometer and recording the result, this safeguard is lacking. You may catch the physical effect of the impulse creating a slow increase and then a decrease in temperature, in say 6 hours. Now imagine that the next day, the same thing happens, but at a different time. You will capture a different part of the impulse response. The problem is that if you don’t sample this adequately, you can’t tell that the temperature has risen and fallen twice, but you will record what appears to be a slowly moving trend in the daily temperature.
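The thought experiment above can be put into numbers. In this sketch (an illustration, not the author's calculation) the same six-hour warm pulse occurs every day but arrives half an hour later each day, and the thermometer is read once a day at noon. The pulse shape, the timings and the names are all assumptions chosen for the example.

```python
import math

def pulse(hour, centre, half_width=3.0):
    """Raised-cosine warm pulse: 1.0 at its centre, 0.0 beyond +/- half_width hours."""
    offset = hour - centre
    if abs(offset) >= half_width:
        return 0.0
    return 0.5 * (1.0 + math.cos(math.pi * offset / half_width))

READING_HOUR = 12.0  # the thermometer is read once a day, at noon

daily_readings = []
for day in range(13):
    centre = 9.0 + 0.5 * day  # the pulse arrives half an hour later each day
    daily_readings.append(pulse(READING_HOUR, centre))

print([round(r, 2) for r in daily_readings])
```

Every day contains an identical pulse that rises and falls within six hours, yet the once-a-day readings trace out a single smooth hump lasting nearly two weeks, which is the spurious slow "trend" described above.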

Therefore, in daily readings, you will get a spurious impression of what is happening between samples. If there is activity at a frequency higher than one cycle per two days, you will not capture it with daily sampling. Features such as impulses or step changes have a high bandwidth, so in spectral terms they can be aliased.

One quick and dirty way to determine the frequency content of a signal, and this is a variant on what you are suggesting, is to take a signal and decimate it. You then interpolate the decimated signal optimally, which is a convolution of the samples with sin(t)/t, and see if there are systematic differences between the original and reconstructed versions.
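A minimal sketch of that decimate-and-reconstruct check follows, using a plain sine wave as the test signal. The record length, the decimation factor of 4 and the two test frequencies are assumptions for illustration, and the sinc sum is truncated to the length of the record, so the reconstruction is only approximate even in the good case.

```python
import math

def sinc(u):
    """Normalised sinc, sin(pi*u)/(pi*u), with the limit value 1 at u = 0."""
    return 1.0 if u == 0 else math.sin(math.pi * u) / (math.pi * u)

def reconstruction_rms_error(freq, n_samples=400, step=4):
    """Decimate a sine of `freq` cycles/sample by `step`, rebuild it by sinc
    interpolation, and return the RMS error over the middle of the record."""
    original = [math.sin(2 * math.pi * freq * n) for n in range(n_samples)]
    kept = original[::step]  # decimation: keep every `step`-th sample
    squared = []
    for n in range(150, 251):  # stay clear of the ends, where the truncated sum is poor
        rebuilt = sum(x * sinc((n - k * step) / step) for k, x in enumerate(kept))
        squared.append((rebuilt - original[n]) ** 2)
    return math.sqrt(sum(squared) / len(squared))

# After decimation by 4 the Nyquist limit is 1/8 = 0.125 cycles per original sample.
low_error = reconstruction_rms_error(0.02)   # below the new limit
high_error = reconstruction_rms_error(0.2)   # above the new limit

print(f"0.02 cycles/sample: RMS error {low_error:.3f}")
print(f"0.20 cycles/sample: RMS error {high_error:.3f}")
```

The slow sine is recovered almost exactly, while the fast one comes back as a different, slower sine with an error as large as the signal itself: a systematic difference of the kind the test is meant to reveal.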

You can’t tell whether a signal is aliased by filtering with increasingly severe low-pass filters, because the theory of the filter explicitly assumes that the signal is not aliased. If, however, the signal is genuinely not aliased, using filters of different bandwidths allows one to tell what features are located in which part of the spectrum. This is a valuable technique since, if say you decide that the process you are interested in is a slow moving trend, or alternatively rapid excursions, this enables you to isolate the features of interest in the signal.

I used to use a very good analog to digital converter and always found very strange harmonics. The stirring bar of my oxygen electrode was a good source. I eventually found that I could get rid of a huge amount of cyclical noise by sampling at a prime frequency, then filtering at a different prime.

I really like this article.

If you know the frequency of your interference and the frequency of your signal of interest, you can usually select a sampling rate that moves all the energy of the interference away from your signal of interest.

It does get complicated, in that anything but a sine wave will have harmonics and you must make sure the harmonics of both signals do not conflict. Using primes is a cool idea, although you have to be able to control the interference and signal source, which is rare in the real world in my experience.

Usually my problems are related to 50/60 Hz electrical interference, or similar with light sources.

“Let me say that I found aliasing very difficult to understand when I first encountered it.”

Here’s a homely analogy that I found helpful. I know you don’t need it, but some might. Suppose you see a snapshot of a long distance running race between two runners on a 400m circular track. One is leading by 40m.

Or seems to be. But he could be 360m behind. Or 760m, or 440 m ahead. You don’t know, but you probably think the 40 m lead is most likely, as lapping is not common.

Whenever you observe a frequency in a regularly sampled signal, there is a similar ambiguity. Corresponding to the lap length is the sampling frequency. Signals separated by the sampling frequency are indistinguishable. Usually, you expect that the lowest possibility is the one you want. So if the sampling frequency is high, that enhances the contrast.

As you approach the Nyquist frequency, the distinction between lowest and next lowest fades. It’s like seeing that race with the runners 200m apart, on opposite sides of the track. You can’t guess who’s in front. The Nyquist frequency is half the sampling freq, just as the point of max ambiguity is half the lap length. But there’s some ambiguity at any frequency level.
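The "lapping" ambiguity in the analogy can be checked directly. In this sketch (names and numbers are illustrative assumptions) a cosine at 0.1 cycles per sample is compared with one a full sampling frequency higher, and with one mirrored about the Nyquist frequency.

```python
import math

def sample_cosine(freq, sample_rate, count):
    """Return `count` samples of cos(2*pi*freq*t) taken at `sample_rate`."""
    return [math.cos(2 * math.pi * freq * k / sample_rate) for k in range(count)]

SAMPLE_RATE = 1.0  # e.g. one reading per day

slow = sample_cosine(0.1, SAMPLE_RATE, 50)
lapped = sample_cosine(0.1 + SAMPLE_RATE, SAMPLE_RATE, 50)    # one "lap" ahead
mirrored = sample_cosine(SAMPLE_RATE - 0.1, SAMPLE_RATE, 50)  # reflected about Nyquist

worst_lapped = max(abs(a - b) for a, b in zip(slow, lapped))
worst_mirrored = max(abs(a - b) for a, b in zip(slow, mirrored))

print(f"largest difference, 0.1 vs 1.1 cycles/sample: {worst_lapped:.2e}")
print(f"largest difference, 0.1 vs 0.9 cycles/sample: {worst_mirrored:.2e}")
```

The three sets of samples agree to within floating-point rounding, so nothing in the sampled record alone says which of the three frequencies was really present.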

But the notion of aliasing results from your wish to interpret frequencies as being the lowest. Sometimes you don’t. If you’re timing an engine, you have a strobe light running at the target frequency. You see a fan blade slowly rotating. But if you know what you are doing you interpret this as the next frequency up, and adjust accordingly. If you don’t know, you risk injury – the lowest frequency interpretation is wrong.

One way to detect aliasing is to change the sampling rate, and then examine the spectrum to see if it changes “as expected”.

If you slow the sampling rate down to say 90% of the data rate, and one of your signal peaks in the spectrum jumps, you can be fairly confident that the signal is aliased. You can even calculate the signal’s actual frequency with some ugly math and enough sampling rate changes.
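The rate-change test can be sketched with the standard folding rule: a pure tone appears at its distance from the nearest whole multiple of the sampling rate. The function name and the two test frequencies below are assumptions for illustration.

```python
def apparent_frequency(true_freq, sample_rate):
    """Frequency at which a pure tone shows up after sampling:
    its distance from the nearest whole multiple of the sampling rate."""
    nearest_multiple = sample_rate * round(true_freq / sample_rate)
    return abs(true_freq - nearest_multiple)

results = {}
for true_freq in (0.3, 0.7):
    at_full_rate = apparent_frequency(true_freq, 1.0)
    at_90_percent = apparent_frequency(true_freq, 0.9)
    results[true_freq] = (at_full_rate, at_90_percent)
    print(f"true {true_freq}: appears at {at_full_rate:.3f} (rate 1.0) "
          f"and {at_90_percent:.3f} (rate 0.9)")
```

The 0.3 tone is below the Nyquist limit at both rates and stays put. The 0.7 tone also appears at 0.3 when sampled at rate 1.0, but moves to 0.2 at rate 0.9, and that jump is what gives the alias away.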

Finally some real science instead of the usual arm waving conjecture that has kept this ludicrous AGW alive for so long even though it is refuted by all physical data.

Global temperature is in fact nothing more than a time series and is therefore best evaluated through Fourier analysis, and aliasing is the least of the problems that those pushing AGW must reckon with.

This is a bread and butter issue of my profession as an exploration geophysicist, and with over 40 years of signal processing of seismic data under my belt I can point to several areas of failure of AGW apart from the alias problem of this article.

Reflections on the seismic record section are limited to the frequency spectrum of the input seismic source wavelet which means the time series must be limited to the frequency spectrum of the driver to claim that a particular driver is predominantly responsible for the time series.

The HadCRUT3 global temperature dataset represents a 150 year time series with a dominant 65 year period as demonstrated by the 50 year moving average applied to this data shown on http://www.climate4you.com under the heading “Global temperature” and the sub heading “cyclic air temperature changes” (9th in the listing of contents).

The increase in atmospheric CO2 concentration, which is another time series, shows a smooth accelerating increase ending in a linear trend of about 2ppmv/year over the past decade. This time series does not contain this predominant 65 year period so increase in CO2 concentration cannot be the driver of observed global temperature change; full stop!

Interestingly, Frank Lemke’s post on CE uses no theory or filters, and comes to the same conclusion:

“The atmospheric CO2 at a time is described very well by the CO2 concentration observed 12 months before, exclusively (auto-regressive model). This model has been posted earlier. However – and this is a most important finding -, CO2 does also not influence any other of the system variables including global temperature. It remains completely autonomous.”

See Figure 2, here.

There are no limits in the number of errors that can be made in data analysis, but I fail to see, how the aliasing problem discussed in this post could be relevant.

Judith mentioned the issue of filling missing data, where also errors related to aliasing are certainly possible. On that point I have been wondering, whether it’s really too difficult to develop methods that would not be dependent on filling the missing data, but use only the existing data directly in estimating the values that are being searched for. Methods based on the concept of maximum likelihood work often well also in presence of missing data points.

Pekka,

What do you think of what BEST says about their methods?

From the FAQ page, http://berkeleyearth.org/FAQ.php#agenda:

“What is new about the statistical approach being used?

The central challenge of global temperature reconstruction is to take spatially and temporally diverse data exhibiting varying levels of quality and construct a global index series that can track changes in the mean surface temperature of the Earth. This challenge presents no easy solution and we believe that there is inherent value in comparing different approaches to this problem as well as understanding the weaknesses intrinsic to any given approach. Thus, we are both studying the existing methodologies for averaging and homogenizing data as well as looking for new approaches whose features seem to incorporate valuable alternatives to the existing methods.

The statistical methods that we use have been developed by Robert Rohde in close collaboration with David Brillinger, a Professor of Statistics at the University of California at Berkeley, and the other team members. They include the statistical approach called Kriging (a process which allows us to combine fragmented records in an optimum way), the scalpel (which identifies discontinuities and cuts the data at those points) and weighting (in which the program estimates numerically the reliability of a data segment and applies a weight that reduces the contribution of the poor samples). The methods all use raw data as input. There are no manual corrections applied; all the weights and scalpel points are determined using automated and reproducible methods.

Our algorithms aim to:

Make it possible to exploit relatively short (e.g. a few years) or discontinuous station records. Rather than simply excluding all short records, we prefer to design a system that allow short records to be used with a low – but non-zero – weighting whenever practical.

Avoid gridding. All three major research groups currently rely on spatial gridding in their averaging algorithms. As a result, the effective averages may be dependent on the choice of grid pattern and may be sensitive to effects such as the change in grid cell area with latitude. Our algorithms seek to eliminate explicit gridding entirely.

Place empirical homogenization on an equal footing with other averaging. We distinguish empirical homogenization from evidence-based homogenization. Evidence-based adjustments to records occur when secondary data and/or metadata is used to identify problems with a record and to then propose adjustments. By contrast, empirical homogenization is the process of comparing a record to its neighbors to detect undocumented discontinuities and other changes. This empirical process performs a kind of averaging as local outliers are replaced with the basic behavior of the local group. Rather than regarding empirical homogenization as a separate preprocessing step, we plan to incorporate empirical homogenization as a process that occurs simultaneously with the other averaging steps.

Provide uncertainty estimates for the full time series through all steps in the process.

The equations that provide a schematic outline of the approach we are currently pursuing are described in a summary document available here. Our ultimate algorithm will require additional features and modifications to address statistical and observational problems.”

Bill

The issue is too complex for me to make any specific proposals, but it’s clear that the text that you picked from the BEST FAQ is written in the same spirit as what I have in mind.

The basic idea is to develop methods that are capable of using directly the existing data and avoiding all intermediary steps that would lose or distort information. The text mentions also the important point that data should be weighted based on the accuracy and information content. Doing all that involves technical risks as new tools must be developed and errors may be introduced in that. Even so the results should ultimately be more accurate and reliable.

One essential point is, however, whether all that extra effort is justifiable. It’s not, if it can more easily be shown that the improvements will be too small to have significance for any final conclusions. That’s quite possible, but often proving that is more difficult than doing the better analysis and finding out that nothing changed on relevant level.

A better methodology for handling the surface data may well have benefits by producing better data on local and regional level even if it turns out that nothing essentially better is obtained on the global average temperatures.

One methodology where aliasing errors are certainly a real problem is spatial analysis over the whole globe using some set of orthogonal functions. The details of geography may lead to significantly erroneous results, because continents and other specific features form gradients too strong to be handled with a reasonable number of orthogonal functions.

Pekka,

Thanks. I didn’t expect a detailed analysis. :)

“One essential point is, however, whether all that extra effort is justifiable. It’s not, if it can more easily be shown that the improvements will be too small to have significance for any final conclusions. That’s quite possible, but often proving that is more difficult than doing the better analysis and finding out that nothing changed on relevant level.”

Especially in the current context….

Does anyone know why remote sensing or thermal imaging can’t fill in the gaps on the globe?

Yes, it can help, but the satellite record only began in the 70’s. There are studies which collect all data for a specific point (see for example http://www.arm.gov/). This includes lidar and cloud radar measuring from below and satellites measuring from above, radiosondes and sometimes in-situ flights in between.

Currently using the BEST-type methods (methods closely related), I can pretty much say that the BEST methods don’t give you significantly different results when performed on the same data source. What the methods do allow you to do is to use fragmentary records, so you get some better coverage (which doesn’t change the answer). You also get the standard errors, which helps with better CIs. So better uncertainty measures and some minor perturbations in trend estimates. Guess what?

The warming in the 1930s doesn’t go away. neither does the warming of the past 50 years.

go figure this: If I take the current data and use different methods, including BEST type methods I get the same answer.

Go figure this: If I take all those methods and cut my data in half…

I get the same answer

Go figure this; If I select 200 stations that have the longest records..

I get the same answer

Go figure this: If I add MORE data ( say from GCOS, or ghcn daily)…

I get the same answer.

So against the very real theoretical concerns stand the very practical results. The theoretical concerns are interesting for the technically inclined. But WRT the numbers that really matter.. not so interesting.

At least they haven’t been proven to be scientifically interesting.

I have no doubt the pattern is in the data. This misses the point entirely. The uncertainties are with the data.

By the way, I don’t believe there is any way to compute true confidence intervals in the area averaging method. Not CIs that capture the underlying averages used to compute the averages used to compute the average global temperature. You can treat the cell averages as measurements but this too misses the entire point. Every layer of averages has its own CIs, which you would have to combine and aggregate somehow. Nor can there be CIs when you do extensive interpolation and extrapolation. Area averaging on a grid is not statistical sampling. The math of statistical sampling just does not apply.

There is no gridding in the BEST approach.

Any prediction on how the mean from a gridded approach will compare with non gridded approaches?

Any prediction on how the BEST confidence interval will compare with say Jones?

Now’s the time to make a prediction about how important your concern is

What “warming of the last 50 years” are you referring to? First of all, HadCRU only shows a roughly 20 year warming spurt, from 1978-98. According to UAH the only warming during that period was a jump during the 1998-2001 ENSO cycle. There was no warming from 1978-1997. There was no warming after 2001, but the flat line is higher than before the ENSO. See: http://www.mediafire.com/file/a9tv9tad9e6216p/UAH_2011_06_19_two_regressions.pdf

So what is this 50 years of warming?

You don’t get the “same answer”. Your answer is just not meaningfully different for its intended purpose. I know what you mean, and agree with you, and get your point. There is enough data and removing parts of it introduces little error.

But I agree with a comment above that this kind of statement tends to generalize too much and can sometimes be misleading.

What would be more complete and useful is if you told us at what point of cutting your data (2, 4, 8, 16?) that you did not get the “same answer”. Even a general statement like “things tend to fall apart at 64 stations” gives us a better feel for your hard work on the data.

A visual example is a picture of a dog at say 2048 x 2048 resolution. When you cut the resolution to 1024 x 1024, you get the “same answer”, a dog. A meaningful data point would be to determine at what point it cannot be differentiated from a cat reliably.

Hmmm. So no matter how you measure the temperature (terrestrial or satellite), and no matter how you process it, you get the same result.

Probably time to stop arguing about it then.

I would suggest that if you are attempting to validate a model, as in the discussion of my last post, if the input data is aliased and the output data isn’t or vice-versa, you are going to get some pretty odd results.

Aliasing is the most basic concept in signal processing and ensuring that the data is not aliased is fundamental in processing any time series as it represents a highly non-linear, and unpredictable, transformation of the data.

The difficulty arises when you don’t realise that a time series is a signal.

A time series is a signal and signals have signatures so this might be a way to expose “Mike’s Nature trick” of adding thermometer data to the proxy data in the zone of overlap to make the proxy data perfectly match the thermometer data.

There is about an 80 year overlap of proxy and thermometer data. If there was no fiddling with the proxy data, the spectral signature of the 80 years of proxy data before the overlap should be identical to the spectral signature of the proxy data in the 80 year overlap, and if there was some mischief the spectral signature of the thermometer data will show up in the 80 year overlap proxy data but not in the proxy data from the previous 80 years.

Perhaps Michael Mann didn’t realize that a time series is in fact a signal and this signal may have left fingerprints on the crime scene.

Any systematic procedure or “intervention” with the data would impose a signal, and leave a “fingerprint”. Adventitiously or by selection or by design, this might be (like) a signal you are hoping/trying to detect in the data. And therein lies the rub.

A seismic record is the convolution of a wavelet with a reflectivity sequence and the objective of seismic data processing is to reduce the wavelet to a spike which would reveal the reflectivity sequence perfectly. This of course can’t be done because the wavelet is band limited and typically minimum phase, complicated with various phase distortions and amplitude variations.

If we know the source signal we can extract the wavelet with a process called signature deconvolution which is essentially an operator designed on the phase and amplitude spectra of the source wavelet which convolved with the wavelet will produce a zero phase wavelet with a “boxcar” shaped amplitude spectrum.

Typically we do not know the wavelet signature and determine it through various assumptions and use various deconvolution algorithms to replace the wavelet with as close to a spike as possible.

If the data was “fiddled with” this type of analysis would show different phase and amplitude spectra for the proxy data before and after the overlap and this can be compared to the measured data which will have its own spectral signature.

The only rub is that the input data for the hockey stick is not made readily available as a time series for this type of analysis so I can only speculate as to its outcome.

Can’t aliasing be simply avoided by just using the daily temperatures? Or, if you check your monthly averaged graph against your graph that only uses the raw daily data, it should be possible to see if aliasing is an issue. For example, it would be nice to see a graph of averaged monthly or yearly surface temperatures plotted against a graph using non-averaged data, and then to compare the underlying trends of both.

Daily high or daily low? What about hourly?

The problem with samples is that they are only samples.

How about 5 minute data? I’ve got hourly data, I can request 5 minute data. In the end you’ll see that concerns about aliasing are overblown. Having compiled hourly data into daily data, daily data into monthly data, monthly data into yearly data, yearly data into decadal data… there is nothing there of SCIENTIFIC note. They are technically interesting, but scientifically unimportant. Simply, the estimates of warming trends are relatively insensitive to

1. sampling period

2. spatial coverage

It’s still warming. GHGs play a role in this. The question is how much?

Caveat: dan hughes work on Hurst and daily data IS interesting but for an entirely different reason

I’m sure that you are correct in what you say – but this is missing the point. We are presented with datasets in which every elementary theorem of signal processing has been violated. These are the datasets on which we have to draw conclusions.

“We are presented with datasets in which every elementary theorem of signal processing has been violated.”

No, I think that is missing Steven’s point. A superficial investigation sees the data presented in a certain way. But it has been sampled monthly in what you see for convenience, mainly to reduce the size of data files. More frequently sampled data is available and has been analyzed. The importance of aliasing is known.

Nick,

so if “The importance of aliasing is known.” it must be documented somewhere?

If the problem of aliasing is well known, may I ask why those producing the data sets didn’t apply a carefully designed digital filter to the data before decimating it.

This comes back to my original point. If you are going to apply standard statistics to averages of sets of data obtained at different places, this is fine. If you are going to treat the averages as a time series, then it may not be.

This is qualified by the context of the question. If you are going to integrate the observations in time and space, aliasing effects will be negligible. If you are going to use them as model inputs (i.e.: SB2011), the effects may be important.
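To give a feel for how much a plain monthly mean lets through before the data are decimated, here is a rough sketch, not a description of how any published dataset is actually built. It assumes daily values, a 30-day "month" and a simple running mean; the function name is hypothetical.

```python
import math

def running_mean_gain(freq, window=30):
    """Amplitude gain of a `window`-point running mean at `freq` cycles per sample."""
    if freq == 0:
        return 1.0
    return abs(math.sin(math.pi * freq * window) / (window * math.sin(math.pi * freq)))

# Monthly sampling (one value per 30 days) can only represent periods longer than 60 days.
for period_days in (365, 60, 40, 30):
    gain = running_mean_gain(1.0 / period_days)
    print(f"period {period_days:>3} days: gain {gain:.3f}")

gain_40 = running_mean_gain(1.0 / 40)
# Apparent period of a 40-day oscillation once only one value per 30 days is kept.
alias_period_days = 1.0 / abs(1.0 / 40 - 1.0 / 30)
print(f"a 40-day cycle survives at {gain_40:.0%} and reappears with a "
      f"{alias_period_days:.0f}-day period")
```

Under these assumptions a 40-day oscillation, which is too fast for monthly sampling, still passes through the averaging at about 30% of its amplitude and then shows up as a 120-day cycle. A purpose-designed low-pass filter would aim to push that gain much closer to zero before decimating.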

there is nothing there of SCIENTIFIC note. They are technically interesting, but scientifically unimportant. Simply, the estimates of warming trends are relatively insensitive to

1. sampling period

2. spatial coverage

It’s still warming. GHGs play a role in this. The question is how much?

The question of how much is a scientific question, and I think it likely that it can only be answered with data collected at least hourly on small grid scales. It has certainly not been shown to be independent of grid and time scales, and the potentially negative feedback effects of clouds happen on hourly scales. If an increase in CO2 were to cause an acceleration of daytime cloud formation and increase the duration of afternoon cloud cover and rainfalls in the tropics or American Midwest, then this effect would be totally missed by averaging over space and time. Not a lot of people think this matters, AFAIK, but no one has shown by data and analysis that it is irrelevant. I think almost everyone agrees that the role of clouds is the biggest unknown, and they are mostly transient phenomena, accumulating and dissipating over epochs measured in hours, and spatially spotty besides.

Matt – As I think Andy Lacis has mentioned in one of the other recent threads, these processes are evaluated at the grid scale level at less than hourly intervals – the need you mention is not neglected. The results for clouds come out from the GCM models as a net positive feedback, long term, in the longwave (greenhouse) component, and in some cases the shortwave (albedo) component as well, with an overall net positivity. HIRS and ISCCP cloud observational data are consistent with this, although the trends are not long enough to assure us that other factors are not also operating. In any case, those data tend to rule out a substantial negative feedback. Climate sensitivity can also be evaluated by methods independent of specific feedbacks by looking at forcing/temperature relationships, wherein the final results implicitly include the feedbacks even if they are not evaluated separately, obviating the need to ask what clouds are doing, or water vapor, lapse rate, ice-snow melting, etc.

It would be silly to look for CO2 effects on an hourly or grid scale basis.

In fact the theory can tell you why you would not see anything.

But if you want to look at hourly data it exists. Knock yourself out. Code is written and freely distributed to make that job easier for you. Support for that software is free. Now, arguing is easier than proving. Given that there is data, given that the software exists to get that data for you, given that it was done for free, I’d argue that I made proving your case easier. So, go prove it.

Steven Mosher: But if you want to look at hourly data it exists.

I am looking into it.

It would be silly to look for CO2 effects on an hourly or grid scale basis. In fact the theory can tell you why you would not see anything.

The theory is incomplete and inaccurate. The effect of CO2, like the effect of clouds, is not constant throughout the day-night cycle.

The effect of CO2, like the effect of clouds, is not constant throughout the day-night cycle.

Matt – Of course it isn’t, but the radiative calculations are time stepped to address the changing dynamics. If you claim that this isn’t done with complete accuracy, you would be right, but if you claim that it isn’t done at all, or is far off the mark, you need to justify that claim by going to the heart of the models, and pointing out exactly where you think the mismatches are. David Young has actually criticized models in regard to time stepping and suggested possible improvements. That doesn’t mean that their current performance is poor regarding radiative transfer. It’s probably pretty good, and there are greater problems elsewhere.

Also, I’m not disagreeing with Steven Mosher on this point. I interpret him to mean that you won’t see CO2-mediated climate change on an hour to hour basis. You will see changes in radiative up and down fluxes. You will also certainly see cloud changes, some of which are entirely unrelated to the concurrent changes in CO2.

Fred Moolton: You will see changes in radiative up and down fluxes. You will also certainly see cloud changes, some of which are entirely unrelated to the concurrent changes in CO2.

Remember what it is that the CO2 does: it absorbs the upwelling radiated energy and transmits it via collisions to the adjacent atmosphere. Otherwise the N2 and O2 components of the atmosphere would not warm up. Since CO2 is densest near the surface, the effect of increasing CO2 will be to slightly increase the disparity between lower troposphere temp and upper troposphere temp, and increase the intensity of thermals and cloud formations. This could have the effect of increasing the speed at which the lower troposphere achieves its maximum temperature, increase the total duration of cloud cover, and increase the total mass of water that ascends, cools, and descends. The effect could be a net reduction of the daily mean temperatures.

Not by much, of course, but the estimated (from equilibrium assumptions and calculations) change in the near surface Earth temperature is only 1% of the baseline, and the estimated transient climate response (Padilla et al., over 70 years) is half of that. The standard theory omits a mechanism that is potentially potent enough to reverse the estimated short-term and long-term equilibrium-based projections.

You are repeatedly asserting that what you know from equilibrium models means that these non-equilibrium transients are negligible, but they are not.

the effect of increasing CO2 will be to slightly increase the disparity between lower troposphere temp and upper troposphere temp, and increase the intensity of thermals and cloud formations

Matt – Yes, convective changes and their consequences are accommodated in the GCMs but are not hourly consequences of increasing CO2 at current rates of increase but are far more gradual. Even for a small sudden CO2 increase, they would be measured more in days and weeks, although cloud changes unrelated to hourly increases in CO2 do occur in times measured in hours. To say that increasing CO2 will increase clouds is an enormous oversimplification of the CO2 forcing/cloud relationship and is an inaccurate portrait of what happens. None of this is “missed” by the models, though. It seems to me that if you want to challenge how these are handled, you need first to find out how they are handled. Your assumption that they are overlooked because they are ultimately incorporated into averages is incorrect.

Your statement that an effect could be a reduction in daily mean temperature is also unjustified by your speculations about heat transfer mechanisms and not easily reconciled with the relationship between temperature and heat loss from the surface via radiation, conduction, and latent heat transfer – at least for anything resembling our current climate. That the effect should be an increase based on known geophysics principles has been worked out quantitatively and is of course supported by observations (including radiative flux measurements) although not proved by them. The Trenberth/Fasullo/Kiehl energy budget diagram gives some clue to the relative strength of the individual phenomena (radiative, latent heat, thermals), but the important data are in the references. The general relationships aren’t controversial, although there are uncertainties about the exact quantitation.

I think this is an area that you need to know much more about before you decide what is or isn’t being done, and at what timescales the different phenomena operate.

There’s also a subtle point regarding your claim that disproportionate warming of the lower troposphere via a CO2 increase could result in a mean temperature reduction because of increased upward movement of heat in various forms. The statement is wrong in its final effects, but it is true that when the lower troposphere is warmed disproportionately, the eventual convective adjustments remove some of that excess warmth and so the final result is a lower temperature than if the adjustment had not occurred. It is still a higher temperature than before the change in CO2 – a net warming but readjusted downward to maintain an adiabatic lapse rate.

Fred, I am flattered that you have read my posts. I do need to write something longer on these issues sometime, but alas I still have a day job at least for a few more years. I’ll try to summarize some points that are amply documented in the literature. I apologize for not including links. You can look up some of my publications on fluid dynamics if you want.

1. Time stepping is just the tip of the iceberg. Usually the larger errors lurk in spatial discretization, the methods used to convert the partial differential operators to discrete representations on a finite grid. GCMs use an essentially uniform grid and, so far as I can tell, finite differences. There are much more modern methods called finite element methods that enable solution-adaptive error control through grid refinement and derefinement. We are not talking about factors of 2 here but orders of magnitude. This is critical for a problem as complex as the atmosphere or the ocean. For the ocean, the bottom profile is very complex and has a lot to do with the circulation patterns. Without grid adaptivity, you can get answers that are not just wrong quantitatively, but qualitatively. The shape of the shore likewise has a huge effect.

2. It’s a coupled system. The dynamics and the radiative forcing are strongly coupled. Just think of cumulus convection. Yet convection is a very complex process. To say that “the details may be wrong, but the overall radiative balance is right” is pure conjecture until proven and in my experience is just wrong. If you get the details of the flow over an airplane wrong, the far field effects, such as downstream vortices, will also be wrong, and you will miss critical unsteady effects that can overwhelm the “long term” statistics.

3. A common source of error in simulations is dissipation. Now there is real dissipation because the fluid is viscous. The problem is that naive numerical schemes, such as leapfrog with an RA filter, introduce additional viscosity. This viscosity damps the real dynamics and washes them out. Basically, it can convert everything of real meaning into entropy and the result is totally wrong, not just off by a factor of 2. This dissipation is often associated with the spatial operators as well.

4. The doctrine of the modelers is generously stated thus: The attractor is the climate and if its strong enough, the trajectory you take to get there doesn’t matter. You will get sucked into the long term statistics. This is based SOLELY on the fact that the models seem to produce “reasonable” patterns run after run. It seems to have no theoretical basis whatsoever. Excessive dissipation would produce EXACTLY the SAME result. With the grid spacings being used, there is a lot of dissipation.

5. There are simple numerical checks that most people do with simulations that don’t seem to be common in climate such as refining the grid and seeing if the answer changes, increasing the accuracy of the distribution of the forcings (they are not uniform you know), increasing the fidelity of boundary conditions, etc. and trying to get asymptotic convergence. There are also usually simple cases where analytic solutions are known that you can test your model against.

6. The issue of subgrid models is also a problem that has tormented fluid dynamics for 100 years. Basically, turbulent processes are tremendously complex and impossible to resolve accurately with current computers. The attempts to model these things are always based on fitting special cases and often are just wrong for other situations. Trust me on this, we can’t even model a turbulent boundary layer in a pressure gradient even with very sophisticated subgrid models that have been worked on intensively for 50 years. Clouds or convection are much more complex.

7. I like Andy Licas and he is a very good scientist. However, several of his statements raise big red flags. I’m paraphrasing here. If Andy wants to restate these things, I’ll stand corrected.

a. “The numerical methods for climate models are a field in themselves.” If I had a nickel for every time I’ve heard this assertion about some field of computational physics, I’d be a wealthy man. My experience is that this usually means that the modelers have been too busy with modeling issues to upgrade their methods and that the methods are not very good.

b. “The methods used tend to be constrained by computer speed.” Another red flag. The way to do this is to first find a method that is stable and accurate. Then, you speed it up. Andy says that various ad hoc filters are needed, for example in the polar regions. That means the underlying methods are very fast but not very accurate.

c. “Internal variability will be modeled poorly for the foreseeable future.” This means that the models in fact DO have LARGE ERRORS in the dynamics. To assert that the long term statistics or the radiative balance is nonetheless anywhere near the correct result is merely a naked assertion of experience with the models and not of anything that has been verified by rigorous testing.

Andy, I love you, but I still think you need to rewrite your methods from scratch.

Anyway, the kind of assertions you are making about the models require rigorous testing and I have yet to see any evidence whatsoever of this testing. You know, in CFD this was the situation for 30 years, and finally in the last 10 years NASA started doing this kind of thing. When I write something longer, I’ll give references, but the results shocked people. The subgrid models were worse than people thought and the prediction of small effects was very bad. Incidentally, since this work was done, there has been little significant improvement. By the way, there is a NASA Langley site on validation of turbulence models and it’s quite interesting.

Anyway, my only point is that there are rigorous criteria by which to judge these things. They are well documented and used in many other fields of computational physics. In a field where policy is at stake, I would come down very hard on people who hadn’t done it. Certainly in structural design or aircraft design, the standards are much more rigorous and the consequences of missing some critical output by 40%, as Hansen did with climate sensitivity in 1988, are much more serious.
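To make the grid-refinement check from point 5 concrete, here is a minimal sketch. It is not taken from any climate model: the test problem (a second-order central difference applied to sin(x) on a periodic grid), the function names and the resolutions are all assumptions chosen for illustration. The idea is that halving the grid spacing should cut the error by a predictable factor, and if it does not, the scheme has not reached asymptotic convergence.

```python
import numpy as np

def max_error(n):
    """Max error of a 2nd-order central difference for d/dx sin(x) on n periodic points."""
    x = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
    h = x[1] - x[0]
    u = np.sin(x)
    # Periodic central difference: (u[i+1] - u[i-1]) / (2h)
    dudx = (np.roll(u, -1) - np.roll(u, 1)) / (2.0 * h)
    return np.max(np.abs(dudx - np.cos(x)))

def observed_orders(resolutions):
    """Observed order of accuracy between successive grids, each twice as fine as the last."""
    errors = [max_error(n) for n in resolutions]
    # Doubling n halves h, so the order is p = log2(e_coarse / e_fine)
    return [float(np.log2(errors[i] / errors[i + 1])) for i in range(len(errors) - 1)]

# Illustrative resolutions (assumed); each grid doubles the previous one
orders = observed_orders([32, 64, 128, 256])
print(orders)
```

For this toy problem the observed order should sit close to the scheme's formal order of 2 at every refinement. The analytic derivative cos(x) plays the role of the "simple case where the analytic solution is known".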

Sorry for such a long post. I’ll try to carve out some time to do this right, maybe at Christmas.

David – Thanks for your long comment. It requires a response from someone more qualified than I am to match your criticisms against the details of model construction and performance. Perhaps Andy will have a chance to respond, as well as others more directly involved in the numerics and/or the fluid dynamics.

By “models”, we are essentially referring to GCMs. However, it is possible with much simpler models to arrive at similar conclusions about global temperature change and climate sensitivity, as discussed in various threads including the one on transient climate sensitivity. In these cases, if I understand the data correctly, the models are simply physics-based mathematical relationships between heat flow into and out of the climate system and surface temperature, and don’t involve the complex GCM-type simulations of individual climate elements where errors in the numerical solutions to differential equations would be particularly problematic. They are based primarily on physical laws about temperature/radiation relationships and on observational data on climate forcings. They don’t address feedbacks because those are encompassed within the forcing/temperature relationship. With the GCMs, however, I would mention parenthetically that it is also possible to confirm some feedback estimates from observations.

What I’ve concluded from all this is that we are left with a range of climate responses about which we can be reasonably confident. The range is too wide, and the suggestions you make should be seriously considered by the professional modelers in terms of narrowing the range – I can’t judge that. The GCMs also have important potential roles regarding phenomena other than global temperature change – precipitation, ocean and atmospheric circulation, tropical cyclones, extreme weather events, regional and short term projections, etc. Here, the simpler models are inapplicable, and since these phenomena are of great practical importance, improving the GCM simulations will be critical.

Ultimately, I agree the models need improvement, and this will help greatly to improve all types of future projections as well as the use of the models to understand climate dynamics. However, I think we can even now make fairly solid judgments about climate behavior, including responses to anthropogenic greenhouse gases, based on current tools. Improvements will be desirable, but don’t contradict the conclusion that we already know a good deal about global temperature change.

Fred Moolton: Yes, convective changes and their consequences are accommodated in the GCMs but are not hourly consequences of increasing CO2 at current rates of increase but are far more gradual.

Let’s leave it at that for now. You are saying that the increase in temperature toward the new equilibrium can’t be occurring in any particular hours. If the 1% change happens at an even rate over 70 years, then the hourly effect can’t be detected.
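The arithmetic behind that statement can be sketched as follows. The 1% figure and the 70 years come from the comments above; the 288 K baseline is my assumption for a global mean near-surface temperature, and the variable names are only illustrative.

```python
BASELINE_K = 288.0         # assumed global mean near-surface temperature (not from the thread)
FRACTIONAL_CHANGE = 0.01   # the "1% of the baseline" quoted above
YEARS = 70                 # the period quoted above
HOURS_PER_YEAR = 365.25 * 24

total_change_k = BASELINE_K * FRACTIONAL_CHANGE
hours = YEARS * HOURS_PER_YEAR
rate_k_per_hour = total_change_k / hours

print(f"{total_change_k:.2f} K spread over {hours:.0f} hours "
      f"is {rate_k_per_hour:.2e} K per hour")
```

Under these assumptions an even rate comes to a few millionths of a kelvin per hour, far below what any thermometer resolves.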

Trenberth/Fasullo/Kiehl has holes, as they admit.

We agree that I need to know more. Where are the references that describe the heat transfer in the summer squalls and such that I have been writing about?

I agree hourly data exists now. I presume that there are anti-aliasing filters so the data won’t be aliased and it will behave properly. That is not the point. Older data was sampled at daily intervals and this is aliased. If monthly averages are used improperly, this will lead to errors.
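As a toy illustration of what daily sampling can do, the sketch below samples a synthetic oscillation once per day with no anti-aliasing filter. The 0.9-day period and the 90-day record length are hypothetical values picked to make the effect obvious; this is not real temperature data.

```python
import numpy as np

TRUE_PERIOD_DAYS = 0.9   # hypothetical sub-daily component, chosen for illustration
N_DAYS = 90              # assumed record length

days = np.arange(N_DAYS)                     # one instantaneous sample per day, unfiltered
samples = np.cos(2.0 * np.pi * days / TRUE_PERIOD_DAYS)

# Spectrum of the sampled series; frequencies run from 0 to the Nyquist limit of 0.5 cycles/day
spectrum = np.abs(np.fft.rfft(samples))
freqs = np.fft.rfftfreq(N_DAYS, d=1.0)
apparent_freq = freqs[np.argmax(spectrum)]
apparent_period_days = 1.0 / apparent_freq

print(f"true period {TRUE_PERIOD_DAYS} days, apparent period {apparent_period_days:.1f} days")
```

The true frequency is 10/9 cycles per day, above the Nyquist limit of 0.5, so it folds down to 1/9 cycles per day and the sampled series looks like a slow 9-day oscillation. Nothing in the daily samples alone distinguishes that from a genuine 9-day signal.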

The question is one of analysis. How much does this matter and in what context?