by Judith Curry

On possibilities, known neglecteds, and the vicious positive feedback loop between scientific assessment and policy making that has created a climate Frankenstein.

I have prepared a new talk that I presented yesterday at Rand Corp. My contact at Rand is Rob Lempert, of deepuncertainty.org fame. Very nice visit and interesting discussion.

My complete presentation can be downloaded [Rand uncertainty]. This post focuses on the new material.

Scientists are saying the 1.5 degree climate report pulled punches, downplaying real risks facing humanity in next few decades, including feedback loops that could cause ‘chaos’ beyond human control.

To my mind, if the scientists really wanted to communicate the risk from future climate change, they should at least articulate the worst possible case (heck, was anyone scared by that 4″ of extra sea level rise?). Emphasis on POSSIBLE. The possible worst case puts upper bounds on what could happen, based upon our current background knowledge. The exercise of trying to articulate the worst case illuminates many things about our understanding (or lack thereof) and the uncertainties. A side effect of such an exercise would be to lop off the ‘fat tails’ that economists/statisticians are so fond of manufacturing. And finally the worst case does have a role in policy making (but NOT as the expected case).

My recent paper Climate uncertainty and risk assessed the epistemic status of climate models, and described their role in generating possible future scenarios. I introduced the possibilistic approach to scenario generation, including the value of scientific speculation on policy-relevant aspects of plausible, high-impact scenarios, even though we can neither model them realistically nor provide a precise estimate of their probability.

How are we to evaluate whether a scenario is possible or impossible? A series of papers by Gregor Betz provides some insights. Below is my take on how to approach this for future climate scenarios, based upon my reading of Betz and other philosophers working on this problem.

I categorize climate models here as (un)verified possibilities; there is a debate in the philosophy of science literature on this topic. The argument is that some climate models may be regarded as producing verified possibilities for some variables (e.g. temperature).

Maybe I’ll accept that a few models produce useful temperature forecasts, provided that they also produce accurate ocean oscillations when initialized. But that is about as far as I would go towards claiming that climate model simulations are ‘verified’.

An interesting aside regarding the ‘tribes’ in the climate debate, in context of possibility verification:

- Lukewarmers: focus on the verified possibilities

- Consensus/IPCC types: focus on the unverified possibilities generated by climate models.

- Alarmists: focus on impossible scenarios and/or borderline impossible as ‘expected’ scenarios, or worthy of justifying precautionary avoidance of emitting CO2.

This diagram provides a visual that distinguishes the various classes of possibilities, including the impossible and irrelevant. While verified possibilities have higher epistemic status than the unverified possibilities, all of these possibilities are potentially important for decision makers.

The orange triangle illustrates a specific vulnerability assessment, whereby only a fraction of the scenarios are relevant to the decision at hand, and the most relevant ones are unverified possibilities and even the impossible ones. Clarifying what is impossible versus what is not is important to decision makers, and the classification provides important information about uncertainty.

Let’s apply these ideas to interpreting the various estimates of equilibrium climate sensitivity. The AR5 likely value is 1.5 to 4.5 C, which hasn’t really budged since the 1979 Charney report. The most significant statement in the AR5, which is included in a footnote in the SPM: “No best estimate for equilibrium climate sensitivity can now be given because of lack of agreement on values across assessed lines of evidence and studies.”

The big disagreement is between the CMIP5 model range (values between 2.1 and 4.7 C) and the historical observations using an energy balance model. While Lewis and Curry (2015) was not included in the AR5, it provides the most objective comparison of this approach with the CMIP5 models since it used the same forcing and time period.

The Lewis/Curry estimates are arguably corroborated possibilities, since they are based directly on historical observational data, linked together by a simple energy balance model. It has been argued that LC underestimate values on the high end, and neglect the very slow feedbacks. True, but the same holds for the CMIP5 models, so this remains a valid comparison.

Where to set the borderline impossible range? The IPCC AR5 put a 90% limit at 6 C. None of the ECS values cited in the AR5 extend much beyond 6 C, although in the AR4 many long tails were cited, apparently extending beyond 10 C. Hence in my diagram I put a range of 6-10 C as borderline impossible based on information from the AR4/AR5.

Now for JC’s perspective. We have an anchor on the lower bound — the no-feedback climate sensitivity, which is nominally ~1 C (sorry, skydragons). The latest Lewis/Curry values are reported here over the very likely range (5-95%). I regard this as our current best estimate of observationally based ECS values, and regard these as corroborated possibilities.

I accept the possibility that Lewis/Curry is too low on the upper range, and agree that it could be as high as 3.5 C. And I’ll even bow to peer/consensus pressure and put an upper limit of the very likely range as 4.5 C. I think values of 6-10 C are impossible, and I would personally define the borderline impossible region as 4.5 – 6 C. Yes we can disagree on this one, and I would like to see lots more consideration of this upper bound issue. But the defenders of the high ECS values are more focused on trying to convince people that ECS can’t be below 2 C.

But can we shake hands and agree that values above 10C are impossible?
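The partition described in this section can be written down as a small lookup, which makes the boundaries explicit. This is only a sketch: the thresholds (a no-feedback floor of about 1 C, then 4.5 C and 6 C) are the ones stated in the text, while the function name, the labels, and the treatment of values that sit exactly on a boundary are assumptions made for illustration.

```python
def classify_ecs(ecs_c: float) -> str:
    """Label an ECS value (deg C) using the thresholds stated in the post.

    Which side the boundary values 4.5 and 6.0 fall on is an assumption.
    """
    if ecs_c < 1.0:
        return "below no-feedback anchor"
    if ecs_c <= 4.5:
        return "possible"
    if ecs_c <= 6.0:
        return "borderline impossible"
    return "impossible"


for value in (0.8, 1.7, 3.5, 5.0, 8.0, 12.0):
    print(f"{value:>5.1f} C -> {classify_ecs(value)}")
```

Anyone who disagrees with the upper bound only has to move one number, which is the point of making the classification explicit.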

Now consider the perspective of economists on equilibrium climate sensitivity. The IPCC AR5 WGIII report based all of its calculations on the assumption that ECS = 3 C, based on the IPCC AR4 WGI Report. Seems like the AR5 WGI folks forgot to give WGIII the memo that there was no longer a preferred ECS value.

Subsequent to the AR5 Report, economists became more sophisticated and began using the ensemble of CMIP5 simulations. One problem is that the CMIP5 models don’t cover the bottom 30% of the IPCC AR5 likely range for ECS.

The situation didn’t get really bad until economists started creating PDFs of ECS. Based on the AR4 assessment, the US Interagency Working Group on the Social Cost of Carbon fitted a distribution that had 5% of the values greater than 7.16 C. Weitzman (2008) fitted a distribution with 0.05% of the values greater than 11 C, and 0.01% greater than 20 C. While these probabilities seem small, they happen to dominate the calculation of the social cost of carbon (low probability, high impact events). [see Worst case scenario versus fat tail]. These large values of ECS (nominally beyond 6 C and certainly beyond 10 C) are arguably impossible based upon our background knowledge.

For equilibrium climate sensitivity, we have no basis for developing a PDF — no mean, and a weakly defended upper bound. Statistically-manufactured ‘fat tails’, with arguably impossible values of climate sensitivity are driving the social cost of carbon. Instead, effort should be focused on identifying the possible or plausible worst case, that can’t be falsified based on our background knowledge. [see also Climate sensitivity: lopping off the fat tail]
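To see why a thin tail can carry so much weight in an expected-cost calculation, here is a toy sketch. Everything numerical in it is hypothetical: the discrete distribution, the cubic damage function, and the function names are illustrative assumptions, not the Interagency Working Group's or Weitzman's actual inputs. Only the general idea (low probability, high impact values) comes from the discussion above.

```python
def damage(ecs_c: float) -> float:
    """Hypothetical convex damage function in arbitrary units (an assumption)."""
    return ecs_c ** 3


# Hypothetical discrete distribution over ECS (deg C): (value, probability).
# The probabilities sum to 1; values above 6 C carry 1% of the probability.
scenarios = [
    (1.5, 0.30),
    (2.5, 0.40),
    (3.5, 0.25),
    (6.0, 0.04),
    (12.0, 0.009),
    (20.0, 0.001),
]


def expected_damage(dist):
    """Probability-weighted mean of the damage function."""
    return sum(p * damage(x) for x, p in dist)


def tail_share(dist, cutoff_c):
    """Fraction of expected damage contributed by values above the cutoff."""
    tail = sum(p * damage(x) for x, p in dist if x > cutoff_c)
    return tail / expected_damage(dist)


def truncate(dist, cutoff_c):
    """Drop values above the cutoff and renormalise the remaining probabilities."""
    kept = [(x, p) for x, p in dist if x <= cutoff_c]
    total_p = sum(p for _, p in kept)
    return [(x, p / total_p) for x, p in kept]


print(f"tail share of expected damage: {tail_share(scenarios, 6.0):.0%}")
print(f"expected damage, full:      {expected_damage(scenarios):.1f}")
print(f"expected damage, truncated: {expected_damage(truncate(scenarios, 6.0)):.1f}")
```

With these made-up numbers, the 1% of probability sitting above 6 C contributes a share of the expected damage far out of proportion to its probability, and lopping it off changes the answer substantially. A steeper damage function would make the effect stronger still.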

————–

The issue of sea level rise provides a good illustration of how to assess the various scenarios and the challenges of identifying the possible worst case scenario. This slide summarizes expert assessments from the IPCC AR4 (2007), IPCC AR5 (2013), the US Climate Science Special Report (CSSR 2017), and the NOAA Sea Level Rise Scenarios Report (2017). Also included is a range of worst case estimates (from sea level rise acceleration or not).

With all these expert assessments, the issue becomes ‘which experts?’ We have the international and national assessments, with a limited number of experts for each that were selected by whatever mechanism. Then we have expert testimony from individual witnesses that were selected by politicians or lawyers having an agenda.

In this context, the expert elicitation reported by Horton et al. (2014) is significant; it considered expert judgement from 90 scientists publishing on the topic of sea level rise. Also, a warming of 4.5 C is arguably the worst case for 21st century temperature increase (actually I suspect this is an impossible amount of warming for the 21st century, but let’s keep it for the sake of argument here). So should we regard Horton’s ‘likely’ SLR of 0.7 to 1.2 m for 4.5 C warming as the ‘likely’ worst case scenario? The Horton paper gives 0.5 to 1.5 m as the very likely range (5 to 95%). These values are much lower than the range 1.6 to 3 m (and don’t even overlap).

There is obviously some fuzziness and different ways of thinking about the worst case scenario for SLR by 2100. Different perspectives are good, but 0.7 to 3 m is a heck of a range for the borderline worst case.
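The non-overlap noted above is easy to check mechanically. The ranges below are the ones quoted in the text; the helper name and the choice to treat the ranges as closed intervals are assumptions for this sketch.

```python
def overlaps(a, b):
    """True if closed intervals a=(lo, hi) and b=(lo, hi) share any point."""
    return a[0] <= b[1] and b[0] <= a[1]


horton_likely = (0.7, 1.2)       # m, 'likely' SLR for 4.5 C warming
horton_very_likely = (0.5, 1.5)  # m, very likely range (5 to 95%)
worst_case = (1.6, 3.0)          # m, worst case range quoted above

print(overlaps(horton_likely, worst_case))
print(overlaps(horton_very_likely, worst_case))
print(overlaps(horton_likely, horton_very_likely))
```

Even the wider 5 to 95% elicitation range stops short of where the quoted worst case range begins.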

———-

And now for JC’s perspective on sea level rise circa 2100. The corroborated possibilities, from rates of sea level rise in the historical record, are 0.3 m and less.

The values from the IPCC AR4, which were widely criticized for NOT including glacier dynamics, are actually verified possibilities (contingent on a specified temperature change) — focused on what we know, based on straightforward theoretical considerations (e.g. thermal expansion) and processes for which we have defensible empirical relations.

Once you start including ice dynamics and the potential collapse of ice sheets, we are in the land of unverified possibilities.

I regard anything beyond 3 m as impossible, with the territory between 1.6 m and 3.0 m as the disputed borderline impossible region. I would like to see another expert elicitation study along the lines of Horton that focused on the worst case scenario. I would also like to see more analysis of the different types of reasoning that are used in creation of a worst case scenario.

The worst case scenario for sea level rise is having very tangible applications NOW in adaptation planning, siting of power plants, and in lawsuits. This is a hot and timely topic, not to mention important. A key topic in the discussion at Rand was how decision makers perceive and use ‘worst case’ scenario information. One challenge is to avoid having the worst case become anchored as the ‘expected’ case.

———

Are we framing the issue of 21st century climate change and sea level rise correctly?

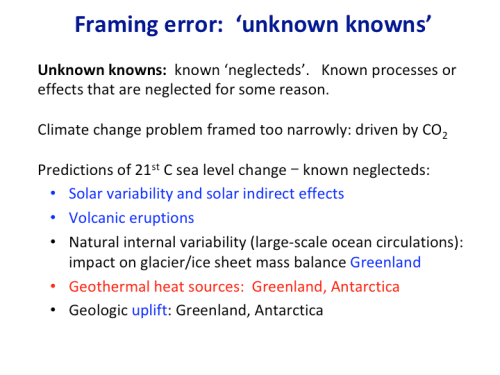

I don’t think Donald Rumsfeld, in his famous unknown taxonomy, included the category of ‘unknown knowns’. Unknown knowns, sometimes referred to as ‘known neglecteds,’ refer to known processes or effects that are neglected for some reason.

Climate science has made a massive framing error, in terms of framing future climate change as being solely driven by CO2 emissions. The known neglecteds listed below are colored blue for an expected cooling effect over the 21st century, and red for an expected warming effect.

——-

Much effort has been expended in imagining future black swan events associated with human caused climate change. At this point, human caused climate change and its dire possible impacts are so ubiquitous in the literature and public discussion that I now regard human-caused climate change as a ‘white swan.’ The white swan is frankly a bit of a ‘rubber ducky’, but nevertheless so many alarming scenarios have been tossed out there that it is hard to imagine a climate surprise caused by CO2 emissions that has not already been imagined.

The black swans related to climate change are associated with natural climate variability. There is much room for the unexpected to occur, especially for the ‘CO2 as climate control knob’ crowd.

Existing climate models do not allow exploration of all possibilities that are compatible with our knowledge of the basic way the climate system actually behaves. Some of these unexplored possibilities may turn out to be real ones.

Scientific speculation on plausible, high-impact scenarios is needed, particularly including the known neglecteds.

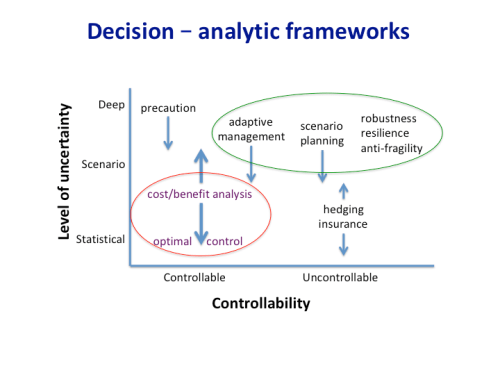

Is all this categorization of uncertainty merely academic, the equivalent of angels dancing on the end of a pin? The level of uncertainty, and the relevant physical processes (controllable or uncontrollable) are key elements in selecting the appropriate decision-analytic framework.

Controllability of the climate (the CO2 control knob) is something that has been implicitly assumed in all this. Perhaps on millennial time scales climate is controlled by CO2 (but on those time scales CO2 is a feedback as well as a forcing). On the time scale of the 21st century anything feasible that we do to reduce CO2 emissions is unlikely to have much of an impact on the climate even if you believe the climate model simulations (see Lomborg).

Optimal control and cost/benefit analysis, which are used in evaluating the social cost of carbon, assume statistical uncertainty and that the climate is controllable — two seriously unsupported assumptions.

Scenario planning, adaptive management and robustness/resilience/antifragility strategies are much better suited to conditions of scenario/deep uncertainty and a climate that is uncontrollable.
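One way to see the difference between these framings: a robustness-style rule can rank strategies without assigning any probabilities to the scenarios. The sketch below uses minimax regret as one such rule. The cost table, the strategy and scenario names, and the choice of minimax regret itself are all hypothetical illustrations, not something taken from the Rand discussion.

```python
# Hypothetical cost table in arbitrary units: costs[strategy][scenario].
costs = {
    "strategy_a": {"low": 1, "mid": 6, "high": 20},
    "strategy_b": {"low": 3, "mid": 5, "high": 9},
    "strategy_c": {"low": 8, "mid": 8, "high": 8},
}


def minimax_regret(cost_table):
    """Pick the strategy whose worst-case regret is smallest.

    Regret in a scenario = cost of the strategy minus the cost of the best
    strategy for that scenario. No scenario probabilities are used.
    """
    scenarios = list(next(iter(cost_table.values())).keys())
    best = {s: min(row[s] for row in cost_table.values()) for s in scenarios}
    worst_regret = {
        name: max(row[s] - best[s] for s in scenarios)
        for name, row in cost_table.items()
    }
    choice = min(worst_regret, key=worst_regret.get)
    return choice, worst_regret


choice, regrets = minimax_regret(costs)
print(choice, regrets)
```

An expected-cost calculation over the same table would need a probability for each scenario, which is exactly the input that is missing under deep uncertainty.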

How did we land in this situation of such a serious science-policy mismatch? Well, in the early days (late 1980s – early 1990s) international policy makers put the policy cart before the scientific horse, with a focus on CO2 and dangerous climate change. This focus led climate scientists to make a serious framing error, by focusing only on CO2-driven climate change. In a drive to remain relevant to the policy process, the scientists focused on building consensus and reducing uncertainties. They also began providing probabilities — even though these were unjustified by the scientific knowledge base, there was a perception that policy makers wanted this. And this led to fat tails and cost benefit analyses that are all but meaningless (no matter who they give Nobel prizes to).

The end result is oversimplification of both the science and policies, with positive feedback between the two that has created a climate alarm monster.

This Frankenstein has been created from framing errors, characterization of deep uncertainty with probabilities, and the statistical manufacture of fat tails.

“Monster creation” triggered a memory of a post I wrote in 2010 Heresy and the Creation of Monsters. Yikes I was feisty back then (getting mellow in my old age).

How can anyone trust any climate model that is not able to reproduce the regional historic climate variability? If you eliminate all external forcings, you get a flat-line climate over centuries and millennia, something that has never happened in the dynamic nature of the system.

If analysts are going to calculate the worst case for global warming, then the worst case for remediation efforts also needs to be calculated. One could, theoretically, see huge increases in poverty or deaths from inadequate medical care if fossil fuels were eliminated and the economy collapsed. If unlikely but very bad consequences are to be theoretically considered, then they need to be considered from all angles.

The warmist/left community has blinders on, which leads them to look at problems from a very narrow perspective, which not surprisingly is consistent with their world-view and political goals.

JD

As I read things, the whole debate makes the assumption that by belching carbon dioxide into the atmosphere, we have excluded the entry into a new Ice Age.

Clearly when no humans were around to belch carbon dioxide, oceanic temperatures were the primary drivers in regulating levels and when cooling reduced carbon dioxide to just about 200ppm, something triggered descent into cold. That is no longer necessarily the sole case.

It is clear that the IPCC is solely involved in developing radiator technology, namely focussed on warming, how fast and how high. There is no focus on how fast and how low could things go. Mainly as scientists do not have robust understanding of what triggers a descent into cold, even if theories are now being evaluated about how escape into an interglacial may occur.

There is also no focus on the US military heating up the ionosphere using HAARP. SSW frequency has recently increased radically. As a skeptical due diligence practitioner, I would ask them to help humanity with their enquiries, as it would be criminality of the highest order for the military of one nation to control global climate and everything downstream through unaccountable military experimentation. It might also impact on climate modelling, knowing that solar mimicry was being beamed out from Alaska or wherever….it would certainly have impacts on commodity harvests, knowledge which could make insider-traders billions on Wall Street.

While we are wishing – I would like to see a best case, a most likely case and a worst case.

It is also a little frustrating that the existing technology of nuclear power is rejected out of hand for solving the worst case scenario.

In the USA, we have 100 nuclear power plants and they produce 20% of the electricity. It would be easy (relatively) to have each state (or perhaps a region for very small states) build 2 plants each (for another 100 plants) and double the share of nuclear to 40%. Do that every five years and in 15 years 80% of the power is being provided by nuclear power.

No one is even looking at the nuclear option, as far as I can see.

Pick a passive cooling design and build 100 of the exact same design. How hard can this be?

Instead we are screwing around with renewables, which cannot provide power when it is dark and not windy (intermittent). I would leave renewables for about 20%-30% of the solution and do the rest with nuclear power.
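The build-out arithmetic in this comment can be laid out step by step. The sketch below reads "do that every five years" as adding another 100 plants per round, and it assumes every plant has the same output and that total electricity demand stays flat. Both are simplifying assumptions of this sketch, not claims made in the comment, and the function and parameter names are made up for illustration.

```python
def nuclear_share_over_time(start_plants=100, start_share=0.20,
                            plants_per_round=100, rounds=3,
                            years_per_round=5):
    """Return (year, plant count, share of generation) after each build round.

    Assumes identical plants and flat total demand (simplifications).
    """
    share_per_plant = start_share / start_plants
    timeline = []
    for k in range(rounds + 1):
        plants = start_plants + k * plants_per_round
        timeline.append((k * years_per_round, plants, plants * share_per_plant))
    return timeline


for year, plants, share in nuclear_share_over_time():
    print(f"year {year:>2}: {plants} plants, {share:.0%} of electricity")
```

Under those assumptions the figures in the comment are internally consistent: each round of 100 plants adds 20 percentage points, reaching 80% after the third round in year 15. Growing demand or differing plant sizes would change the numbers.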

The people who sell power have been looking at it for decades. It’s an economic loser.

Cheap renewables and a closure of expensive nuclear plants caused California electricity prices to skyrocket. An economic winner?

“Great” thinker stifled by paper bag.

IEA/NEA Report on Projected Costs of Electricity Generation 2015

“The report also reveals that nuclear energy costs remain in line with the cost of other baseload technologies, despite persistent reports to the contrary.”

A ‘solution’ to 25% of the ‘problem’? (AGW – SMH)

https://www.epa.gov/sites/production/files/styles/medium/public/2016-05/global_emissions_sector_2015.png

Close to 40% if you accept (as I do) that electric cars and trucks are inevitable. That moves a big chunk of “transportation,” as well as some agriculture and land use (no reason you can’t have electric equipment) and “other energy” (which I assume includes a lot of ICE engines for generators, boats, lawnmowers, etc etc etc.)

And, of course, you realize you just made the world’s strongest case for why we should all be ticked off at all the taxpayer cash dumped into renewables- which can’t handle electricity sector, much less any part of the others.

Judy, thanks. Let me work out another aspect in the light of two recent papers. My thesis is: only lukewarmers can set realistic aims for the prevention of a climate disaster and motivate people to take action against it. When we consider a TCR of about 1.3 (just like L/C18 do), then we’ll reach a critical threshold for GMST (1.8 deg. C) at about 2100. By that time mankind has to manage to bring emissions near zero to hold this limit. It’s some kind of sci-fi to hope that it could be earlier. This paper https://www.tandfonline.com/doi/abs/10.1080/14693062.2018.1532872 states that it’s counterproductive to make people fatalistic with doom and gloom; this would produce some kind of “it’s all over” mood and not the wanted technological boost to overcome fossil fuel as the No. 1 energy source. This value is the realistic one from observations, and the only argument against it is: hidden patterns boosting the ECS to near 3 (TCR about 2). If that were so, mankind would have no chance! Doom and gloom, and no chance to do something about it.

The other paper, describing the social effects of climate change, is this one: https://www.sciencedirect.com/science/article/pii/S1631071318301159

Grundmann states that climate change is a wicked problem and stresses the uncertainty in the decisions to be made. If we had certainty in the doom and gloom predictions of some models, we should stop every action and enjoy the last years of mankind! Is this the aim of activists? No, seems to be the right answer. I’m optimistic that we can solve this problem. But this will only work with scientific confidence when we stop following the Frankenstein adorers.

What we need now are expert engineers and physicists to take that ECS chart and duplicate it for “alternative energy sources in developed and rapidly developing nations”

Where the “corroborated possibilities” would be hydro, nuclear, natural gas

The “unverified possibilities” would be CCS, any renewable penetration over about 20-30%,

“impossible” = 100% renewable, “radical lifestyle and economic system changes,” verifiable International treaties absent breakthrough technologies.

Geo-engineering being one that straddles “unverified” possibilities and “impossible.”

At least we’d focus the international and political debate, international investment and R&D, and remove the political activists’ motive to keep absurd alarmist scenarios alive in the media.

good one :)

Here’s a graph from Wikipedia made from empirical data on CO2 free electricity generation:

https://pbs.twimg.com/media/DpGrf5dWsAAMIMi.png

What you have done for WG1 should also be performed for WG2 and WG3 issues.

You refer to CBAs, but I think arguments referencing the social cost of carbon need to also take into account the very real social costs of reducing carbon.Those costs need to include factors like early retirement of capital investment, foregone development in non-OECD countries, etc.

We could (of course) convert to a mix of nuclear, hydro, solar, wind and biofuels in a fairly short time frame. This problem is eminently solvable. But the costs of telescoping this energy transition are roughly double those of allowing market forces to lead us to this promised land, if indeed it is such. I did back of the envelope calculations four or five years ago and they need to be adjusted for inflation, but it sure looked like a rush to green (including a dash to gas as a bridge fuel) would cost around $23 trillion, compared to $12 trillion for natural evolution of our fuel portfolio.

Tom, you did really good work showing that the world will need (and therefore produce) much more energy than it does now.

Early retirement costs of coal plants are real but not the whole story. Gas went from ~20% of the electricity generation in the US to ~34% from 2006 to 2018, adding 20 gigawatts of gas generating capacity in 2018 alone. Renewables went from ~4% to ~9%.

If wind/solar were as cost-competitive and effective as the advocates claim, transition to renewables would be well under way in developing nations that need new capacity and in the US.

Interesting link- look at the mix of energy sources by region of the US.

https://www.eia.gov/todayinenergy/detail.php?id=34612

The American midwest is the only place where coal reigns supreme. It’s also the region that pipeline protests would cut off from gas. It’s also the region that would be hit hardest by carbon pricing initiatives (especially if the only allowed option is non-performing renewables).

It’s also where the Democrats lost the election for president in 2016.

Judith:

Sadly, you continue to maintain the fiction that climate change is fraught with uncertainties, when in fact, it is very simple.

It has two components:

1. Natural recovery from the Little Ice Age cooling: Approx. 0.05 deg. C/decade (the warming rate from 1900 to circa 1975). Thereafter, it increased because of reduced anthropogenic SO2 aerosol emissions, due to Clean Air efforts, which cleansed the air, to about 0.16 deg. C/decade.

2. The amount of anthropogenic SO2 aerosol emissions in the atmosphere: Approx. 0.02 deg. C of change for each net Megaton of change in global SO2 aerosol emissions, anthropogenic or volcanic.

All of the anomalous warming since 1975 has been due to cleansing of the atmosphere due to Clean Air reductions in dimming SO2 aerosol emissions, which HAS to occur, and with no hint of any additional warming due to “greenhouse gasses”.

Superimposed upon this trend are temporary temperature increases or decreases because of VEI4, or larger, volcanic eruptions: La Ninas, caused by increased atmospheric SO2 levels, typically form about 15 months after the date of an eruption, and El Ninos, due to settling out of the aerosols, appear around 24 months after an eruption.

Anthropogenic SO2 levels can be adjusted, but we are at the mercy of random volcanic eruptions, making it impossible to predict future temperatures beyond a few years.

Currently, our Clean Air efforts to reduce SO2 emissions are solely responsible for the higher temperatures that are responsible for the many climate-related disasters around the world.

I post this with certainty, but if anyone can provide clear evidence that I am wrong, let’s have a discussion.

Wow. Thanks. There are few examples of model uncertainty at the level of a perturbed physics model. The famous Murphy 2009 and Rowlands et al 2012. There may be others?

This shows the growth in uncertainty in a set of solutions – from slightly different starting points – of a model. Even constraining solutions they get a broader range of future climates than the IPCC. The unconstrained solution space is broader still.

https://watertechbyrie.files.wordpress.com/2018/05/rowlands-2012-add.png

https://www.nature.com/articles/ngeo1430

Starting with uncertain and imprecise sets of initial conditions – model uncertainty increases until saturating out at a level intrinsic to initial conditions precision and model structure.

The thick, black, linear extrapolation is mine.
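The qualitative behaviour described in this comment (spread growing from tiny initial differences and then saturating) shows up in even the simplest chaotic system. The sketch below uses the logistic map purely as a toy stand-in. It is not a climate model and has nothing to do with the Rowlands et al. ensemble, and the ensemble size, perturbation size, and function names are arbitrary assumptions.

```python
def logistic_step(x, r=4.0):
    """One iteration of the logistic map, chaotic at r = 4."""
    return r * x * (1.0 - x)


def ensemble_spread(n_members=50, x0=0.3, perturbation=1e-8, n_steps=60):
    """Track the max-min spread of an ensemble started from nearby points."""
    members = [x0 + i * perturbation for i in range(n_members)]
    spreads = [max(members) - min(members)]
    for _ in range(n_steps):
        members = [logistic_step(x) for x in members]
        spreads.append(max(members) - min(members))
    return spreads


spreads = ensemble_spread()
for step in (0, 10, 20, 30, 60):
    print(f"step {step:>2}: spread = {spreads[step]:.3e}")
```

The spread grows roughly exponentially at first and then levels off once it is comparable to the size of the attractor, because the states are confined to the interval from 0 to 1. After that point, tightening the initial conditions only delays saturation rather than preventing it.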

Reminiscent of when Curry made basic mistakes in her previous discussion of uncertainty (and her supposed “positive feedback loop”), and other scientists had to come in and correct the nonsense. It’s amazing to me how people are still falling for this manufacture of false doubt (the tobacco strategy).

“Comment on “Climate science and the uncertainty monster” JA Curry and PJ Webster”

https://journals.ametsoc.org/doi/pdf/10.1175/BAMS-D-11-00191.1

“It seems [Judith Curry] is far too busy to deal with this minor issue (which underpins, or rather undermines, every quantitative statement she has made regarding the purported failings of the IPCC analysis).”

http://julesandjames.blogspot.com/2010/11/wheres-beef-curry.html

https://climatefeedback.org/scientists-reactions-us-house-science-committee-hearing-climate-science/

“Many of the strategies used by the opponents of both evolution and global warming are based on sowing misinformation and doubt. This approach is often called the “tobacco strategy”, because tobacco companies used it effectively to delay health warnings and regulation of smoking.”

http://reports.ncse.com/index.php/rncse/article/viewFile/71/64

““Corporations use a range of strategies to dispute their role in causing public health harms and to limit the scope of effective public health interventions. […] these industries argue that aetiology is complex, so individual products cannot be blamed […].

[…]

Arguments about the complex, multifactorial aetiology of CHD and cancer have long been used by the tobacco industry to dispute the epidemiological and other evidence. […] Demands for perfect evidence, while misrepresenting the existing evidence, can also be observed in climate change denialism.”

http://jech.bmj.com/content/71/11/1078

Yawn

LOL

Scott

Some people are really boring…

Re: “Yawn”

A response that perfectly illustrates James Annan’s point. And Gavin Schmidt’s point. And Victor Venema’s point. And…

I’m still yawning.

Maybe you can yawn long enough for the stadium wave to show up.

I’m watching the variations in regional ice extent and AMO. stay tuned.

Judy: very interesting field! Here I show the September extent ( area, the lower graph) with a linear trend and a 10a Loess Smoothing:

https://i.imgur.com/gt5W5yG.gif

Some of the “best friends” should be alarmed? :-)

Lol.

Yes Atomski, some alarmists like to call Judith a denier [sic] when it’s an obvious lie. Some disagree with her science and resort to personal attacks on her. Kind of reminds some of us of certain “people’s paradise” countries. I would suggest you might need to revisit what “diversity” means and try to focus on the issue at hand rather than clumsy ad hominems. You benefit immensely from Judith’s commitment to providing an open forum. I suggest you show a little appreciation of her hospitality and try to provide something that adds value.

Well said !!

Dr Curry

Please forgive me for not being as polite and professional as the majority of those posting comments.

Atomsk’s Sanakan

I am so sick and tired of jokers like you nit picking over stuff when every single solitary prediction which has been made has failed to come to pass. When hurricanes, forest fires, floods, droughts, and tornadoes all are doing the exact opposite of what we were told that they would do. When for the past 20 to 30 years we have been told that within the next 10 to 12 years we will reach a tipping point. When we watch CO2 concentrations in steadily increase and temperatures do not by any significant amount. When we are told that we have just experienced the hottest year ever but you fail to tell us that it was only by 0.04 degrees. When the only demonstrable results of increased levels of CO2 in the atmosphere have been beneficial. Have you no shame sir? More and more of the people that I talk to just laugh whenever they hear people like you speak. If you can point to any prediction which has actually been accurate then please tell me.

Re: “I am so sick and tired of jokers like you nit picking over stuff when every single solitary prediction which has been made has failed to come to pass”

If you think every prediction has failed to pass, then you don’t understand the science at all. Or you’re making stuff up. Take your pick.

You’d basically not have to know about stratospheric cooling, thermospheric cooling, mesospheric cooling, positive feedback from water vapor, positive feedback from clouds, the negative lapse rate feedback, Arctic amplification of warming, decreasing Antarctic land ice, decreasing Arctic land ice, decreasing Arctic sea ice, sea level rise acceleration, and so on.

Really, it’s on par with a creationist claiming there’s no evidence of human evolution, and that none of the predictions of evolutionary biology have come to pass.

Re: “When we watch CO2 concentrations steadily increase and temperatures do not by any significant amount”

Oh, you’re one of those “a pause means CO2 doesn’t cause significant warming” people. That’s like saying Earth’s axial tilt relative to the Sun doesn’t cause a multi-month warming trend in Canada from mid-winter to mid-summer, since this week was the same temperature as last week. That’s just end-point bias on your part:

“Unusually cold winters, a slowing in upward global temperatures, or an increase in Arctic sea ice extent are often falsely cast as here-and-now disconfirmation of the scientific consensus on climate change. Such conclusions are examples of “end point bias,” the well documented psychological tendency to interpret a recent short-term fluctuation as a reversal of a long-term trend.”

http://www.tandfonline.com/doi/full/10.1080/17524032.2016.1241814?scroll=top&needAccess=true

https://3.bp.blogspot.com/-JSwHieVphcI/Ww71fCYWf3I/AAAAAAAABm4/a_-uMC2zPtokU7eeAu_dgXdkLFyAbNnsQCPcBGAYYCw/s640/global%2Btemperature%2Bcomparisons.JPG

[from: “Recent United Kingdom and global temperature variations”]

crowcane: every single solitary prediction which has been made has failed to come to pass.

That is not a correct assertion. There have been a lot of incorrect predictions, such as [the end of snow as we know it], but not every single solitary prediction … .

atomsk’s Sanakan: “Unusually cold winters, a slowing in upward global temperatures, or an increase in Arctic sea ice extent are often falsely cast as here-and-now disconfirmation of the scientific consensus on climate change. Such conclusions are examples of “end point bias,” the well documented psychological tendency to interpret a recent short-term fluctuation as a reversal of a long-term trend.”

There were also the Arctic Ice Death spiral, which reverted to the general trend and did not produce an ice-free Arctic by 2013; and children will grow up not knowing what snow is; and the recent El Niño proves that the “pause” is over.

The pause clearly showed that the quantitative forecasts were unreliable, no matter how confidently expressed. Whether the pause will continue after the effect of the recent El Niño has passed is not yet known.

And, of course, James Hansen’s grandchildren have nothing to fear from climate change; possibly irrelevant because the “scientific consensus” has not addressed their particular case.

If only we could get scientists not to stray so far from the consensus! That consensus is that human activities have contributed to global warming, not that temperatures would rise strictly monotonically toward disastrous levels; and not that modest efforts directed against CO2 (e.g. the Paris Accords) will prevent future warming due to other causes such as land use changes.

Seriously? Those are the things that you are grabbing and scraping for in order not to admit that all the predictions which actually have an impactful effect on the climate which I will experience have utterly and completely failed to come to pass. That has got to be one of the lamest responses that I have ever run across. The factor will increase and that factor will decrease and this other one will change in this or that manner and when it is all said and done neither I nor few if any of the other people populating this planet will know the difference. You, sir, just identified yourself as someone I should completely ignore. I haven’t a clue who you are or any awards which you may have received or what papers you may have written and really do not care. With such a sorry excuse of a response absolutely none of that matters.

Re: “Those are the things that you are grabbing and scraping for in order not to admit that all the predictions which actually have an impactful effect on the climate which I will experience have utterly and completely failed to come to pass.”

You don’t understand the science, which is why you think what I said was irrelevant.

The “stratospheric cooling, thermospheric cooling, mesospheric cooling” points to CO2 (and other greenhouse gases) being the predominant cause of the troposphere and surface warming, not increased solar output. That’s relevant for attribution.

The “positive feedback from water vapor, positive feedback from clouds, the negative lapse rate feedback” affects how much warming that increased CO2 causes.

The “decreasing Antarctic land ice, decreasing Arctic land ice, […] sea level rise acceleration” are relevant impacts.

So those are several accurate predictions. Thus you were wrong when you claimed:

“I am so sick and tired of jokers like you nit picking over stuff when every single solitary prediction which has been made has failed to come to pass.”

https://judithcurry.com/2018/10/11/climate-uncertainty-monster-whats-the-worst-case/#comment-882183

Re: “The factor will increase and that factor will decrease and this other one will change in this or that manner and when it is all said and done neither I nor few if any of the other people populating this planet will know the difference.”

I don’t care if you notice a difference, anymore than I care if anti-vaxxers notice the effects of vaccination. I care what the scientific evidence shows on attribution, detection, impact, etc. What you happen to notice (or choose to notice) in your everyday life, is not the barometer for what actually happened. What non-experts like you are aware of is dwarfed by what the published peer-reviewed evidence shows.

Atomsk: “You don’t understand the science, which is why you think what I said was irrelevant.”

You are without any failure, the only problem of you is your much too big modesty! :-)

Atomsk’s Sanakan: “Many of the strategies used by the opponents of both evolution and global warming are based on sowing misinformation and doubt. This approach is often called the “tobacco strategy”, because tobacco companies used it effectively to delay health warnings and regulation of smoking.”

http://reports.ncse.com/index.php/rncse/article/viewFile/71/64

““Corporations use a range of strategies to dispute their role in causing public health harms and to limit the scope of effective public health interventions. […] these industries argue that aetiology is complex, so individual products cannot be blamed […].

[…]

Arguments about the complex, multifactorial aetiology of CHD and cancer have long been used by the tobacco industry to dispute the epidemiological and other evidence. […] Demands for perfect evidence, while misrepresenting the existing evidence, can also be observed in climate change denialism.”

IMO, you’d be better off to stick with the science of CO2 and climate. To start with, nobody here [denies climate change]. Besides that, the case that CO2 has played a major role in climate warming has serious liabilities, a few of which I have pointed out. Everybody is wrong sometimes, and previous errors do not imply that they are wrong this time.

This approach is often called the “tobacco strategy”

So in your world anyone who questions the alarmist point of view is invoking the “tobacco strategy”? What a convenient way to dismiss other points of view. I’m surprised you didn’t use the other often overused non-argument, which states: questioning human-caused climate change is like questioning Einstein’s theory of relativity. How dare we invoke the scientific method.

Unfortunately the possibility of negative feedback (what almost always occurs in thermodynamic systems when one component is changed, and the system readjusts from the direct response to the component change alone) is left out. The most likely mechanism for this on Earth (a mainly water-covered planet) is cloud adjustment to modify solar reflection. Also, using a starting point at the end of the Little Ice Age is suspect, as this temperature is lower than the temperature level over most of the Holocene.

The ozone hole and CFC precedent.

Re: “The ozone hole and CFC precedent.”

That was a nice precedent, since it actually worked. I actually take it to be a fairly good prediction of what will happen:

1) political conservatives will continue to lag behind on accepting the science, mostly for ideological reasons [same as they did in the case of smoking and second-hand smoking]

2) industries will support regulations on the topic, for the sake of profit

3) what everyday conservatives think won’t matter as much, since the industries will introduce enough funding to push regulations through.

Is that what I want to happen? I don’t really care. But it’s what I think will likely happen, as per this:

“The ozone story: A model for addressing climate change?

[…]

The ozone hole case suggests that nothing will likely happen until a dominant energy producer—or a consortium of dominant energy producers—develops profitable proprietary alternatives to fossil fuels and other greenhouse gas–emitting processes. Then, they will change positions to support regulations to drastically reduce greenhouse gas emissions, with the purpose of giving themselves a competitive advantage and significant profits. Public awareness and energy conservation are important, and the public’s and government representatives’ opinions can put pressure on corporate profits. But it really doesn’t matter what the public or government representatives want or believe; short-term and long-term corporate profits will drive any decision to greatly limit greenhouse gas emissions.”

Oh, and please don’t pretend the Montreal Protocol didn’t work. There’s abundant scientific evidence showing that it did. Ozone depletion was mitigated. Let me know when you can address some of the research on that:

“Quantifying the ozone and ultraviolet benefits already achieved by the Montreal Protocol”

“Evidence for the effectiveness of the Montreal Protocol to protect the ozone layer”

“Emergence of healing in the Antarctic ozone layer”

“Antarctic ozone loss in 1979–2010: First sign of ozone recovery”

“Depletion of the ozone layer in the 21st Century”

“The Antarctic ozone hole: An update”

Let’s just say that a social alarm was successfully created over a poorly studied phenomenon, a group of compounds was blamed, and a group of countries passed regulation banning them. Then victory was declared and everybody was happy.

Politicians thought global warming and CO2 were the same, only bigger and better; after all, you don’t get the chance to tax the air very often. And then the agenda started to grow with wealth redistribution and energy transition until the present conundrum.

Now politicians don’t know how to get out of this mess, so it is all empty declarations and no action. They’ll make scientists pay for it. Look for the backlash.

Scenario planning, adaptive management and robustness/resilience/antifragility strategies are much better suited to conditions of scenario/deep uncertainty and a climate that is uncontrollable. 👍

Dynamical risk is best seen in data – and by the nature of these dynamical systems gets larger with time. With enough time we might revisit any of the ergodic states of the Earth system.

But strength and resilience is a celebration brought about by care of Earth systems – catchments, rivers and oceans. Using modern notions of polycentric policy and local and regional management – of the global commons especially. The fundament is economic development – free trade, fair laws and robust and peaceful democracies.

https://watertechbyrie.files.wordpress.com/2018/10/food-smiles.jpg

Ms. Curry, J.A., have you read anything I have sent you, or am I wasting your time and mine?

Dr. Curry ==> Great Presentation to Rand.

I have been calling one type of “Unknown Knowns” the impossible act of “Unknowing Known Uncertainty”. Using statistics to create over-averaged temperature anomalies producing nearly certain (errorless) global values.

Can you supply a paper title (or link) for the Cheng et al. 2017, the sea level graph used in the PowerPoint? ( I can’t seem to find the right Cheng 2017 — …)

Thank you for sharing your Rand presentation.

Can anyone identify the research paper Cheng et al. 2017 as the source for the sea level graph (page 27) in Dr. Curry’s presentation?

oops it is Chen

https://www.researchgate.net/publication/318043755_The_increasing_rate_of_global_mean_sea-level_rise_during_1993-2014

Dr. Curry ==> Thank you!

I think the “surname et al. year” citing standard needs to change. With so many papers being published, too many surnames get duplicated in a single year, even within one topic, and not just Chinese names either: lots of Joneses and Hansens and Smiths, etc.

I usually try to provide online links to anything I cite, but not enough time on this one.

Dr. Curry ==> No worries, can’t quite imagine how many hats you are wearing simultaneously.

There is no necessity for carbon action because the climate drives CO2:

https://i.postimg.cc/qvfYLvG8/Had-SST3-leads-12mo-CO2-change.jpg

The worst case scenario is people never learn this en masse and fall for anything… are we there yet?

The best case scenario is people learn there is no such thing as a CO2 driven climate and therefore ECS estimates, CO2 mitigation proposals, and carbon taxes are irrelevant.

This debate appears to be based on the assumption that the temperature trend is as described by the BEST data, which were published in a very questionable journal and are opaque unless you are able to address directly the original files; I believe the closest that one can come to reality lies in some national met agencies – such as Iceland – and in the GHCN archives freely available at KNMI. Only that way can one avoid the manipulations to obtain a desired curve done at Goddard and the CRU.

There has been no warming that cannot reasonably be attributed to natural causes.

Alan Longhurst

we use ghcn.

all of our data is open

goddard uses ushcn. defunct since 2014

Amen! What makes the BEST time-series more than just “opaque” is the total distortion of low-frequency spectral content by the artificial assembly of mere snippets of record by their geophysically naive “empirical break-detection” and “homogenization” algorithms. Thus their long-term “data” are products more of academic imagination than of direct, model-free measurement.

JC’s post says:

Given that any realistically achievable amount of global warming we could get this century would be beneficial (total of all impact sectors), then the worst case climate change and policy scenarios are:

1. Abrupt global cooling – this could kill millions, or perhaps even billions this century

2. Spending huge amounts of funds on policies to reduce global warming, thereby retarding global economic growth by a) reducing the benefits of global warming, and b) wasting these funds when they could be spent on doing real good (see for example Bjorn Lomborg’s analyses and publications). The global climate change industry was estimated at $1.4 trillion in 2013 (~1.9% of global GDP) and increasing at 2 to 10 times the average global GDP growth rate.

If you don’t like models, you can use observations instead.

http://woodfortrees.org/plot/best/mean:12/from:1950/plot/esrl-co2/scale:0.01/offset:-3.25

These give a relatively sharp effective TCR near 2.3 C per doubling. It is effective because it takes into account other proportionate anthropogenic effects like methane, black carbon and aerosols, as well as feedbacks to the net (not just CO2) forcing. It turns out to be highly correlated to CO2 and log CO2 for the last 60 years (0.935) because that is the dominant component of the net forcing. From this, an effective ECS near 3 C is very likely, and there is much less uncertainty in the observations than in the rather too cautious IPCC range.

Bottom line: don’t believe the uncertainty range. Sixty years of observations say otherwise and give numbers sharply consistent with an effective ECS of 3 C per doubling.
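The “degrees per doubling” figure in the comment above comes from regressing temperature on the logarithm of CO2 concentration; with a base-2 logarithm the fitted slope reads directly as warming per doubling. A minimal sketch of that kind of fit follows, assuming synthetic data. The concentrations, the 2.3 C response built into the fake series, the noise level, and the function name `effective_tcr` are all illustrative assumptions, not the woodfortrees series linked above.

```python
import math
import random


def effective_tcr(co2_ppm, temp_c):
    """Least-squares slope of temperature on log2(CO2), in deg C per doubling."""
    x = [math.log2(c) for c in co2_ppm]
    y = list(temp_c)
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


# Synthetic illustration only (NOT observations): 60 "annual" values with a
# built-in 2.3 C-per-doubling response plus random year-to-year noise.
random.seed(0)
co2 = [315.0 + 1.5 * i for i in range(60)]             # hypothetical ppm values
signal = [2.3 * math.log2(c / co2[0]) for c in co2]    # assumed underlying response
temp = [s + random.gauss(0.0, 0.1) for s in signal]    # assumed noise level

print("slope without noise:", round(effective_tcr(co2, signal), 3))
print("slope with noise:", round(effective_tcr(co2, temp), 3))
```

A slope obtained this way is “effective” only in the sense the comment describes: it folds in every other forcing that happens to scale with CO2, and the regression by itself cannot separate them.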

I get 1.8C, not 2.3C, but yes, observed trends would seem to be a good starting forecast.

https://turbulenteddies.files.wordpress.com/2018/09/noaa_ghg_tcr_2017.png

Not sure what NCDC is like as a climate dataset, but I use BEST, GISTEMP and HADCRUT4 with higher values than that.

Also my data covers the last 60 years, while yours doesn’t, and you are not using the CO2 forcing which maximizes at 2 W/m2, but some estimate that is rather above the high end of IPCC values. To get warming as a function of CO2, use CO2 for the forcing. It fits with a high correlation.
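For readers following the forcing numbers, a commonly used simplified expression for CO2 radiative forcing is ΔF = 5.35 ln(C/C0) W/m2. The sketch below assumes that expression and a 280 ppm pre-industrial baseline; neither is stated in the comment, and the function name `co2_forcing` and the 408 ppm example value are hypothetical choices made for illustration.

```python
import math


def co2_forcing(c_ppm, c0_ppm=280.0, alpha=5.35):
    """Simplified CO2 forcing in W/m^2: alpha * ln(C / C0).

    alpha = 5.35 and the 280 ppm baseline are assumptions of this sketch.
    """
    return alpha * math.log(c_ppm / c0_ppm)


# Forcing for a doubling of CO2 and for an illustrative recent concentration.
print("doubling (560 ppm):", round(co2_forcing(560.0), 2), "W/m^2")
print("408 ppm:", round(co2_forcing(408.0), 2), "W/m^2")
```

Under these assumptions the forcing relative to 280 ppm comes out close to 2 W/m2 at about 408 ppm, which is consistent with the figure quoted in the comment.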

Also my data covers the last 60 years, while yours doesn’t

Think about what that would imply – decreasing response and decelerating warming since 1979.

But the evidence you presented, temperature and concentration of CO2, is not temperature and assumed radiative forcing anyway.

TE

60 years! The 2-mile-long time machine of Greenland and Arctic ice boreholes should be used. Around 800,000 years.

Problem for CAGW activists is resolution shows no changes identified in 60 years!

Science really damaged by these political players.

Scott

You have this backwards. CO2 is sensitive to the climate, not the other way around as you presume.

There is no TCR or ECS for carbon dioxide because CO2 lags ocean warming by 10 months, meaning CO2 doesn’t warm the climate, therefore any discussion about the climate being sensitive to CO2 is science fiction.

Do you actually believe the future can control the past?

As far as I am concerned comments like yours enforce the BIG LIE.

I’m asking you all to stop giving credence to an impossibility.

This looks like the cranky Salby stuff.

This looks like the cranky Salby stuff.

The problem here is you wish to deflect with a typical school-yard smear attempt instead of arguing the point.

Would you say the same thing about “The phase relation between atmospheric carbon dioxide and global temperature”, Humlum et al. 2013?

If you are unwilling to acknowledge the fact that CO2 change lags ocean temperature change, and the ocean is driving CO2 via Henry’s Law, and that this fact alone invalidates the entire AGW theory, then what you believe is science is not based on reality – it’s science fiction.

https://i.postimg.cc/yxvS1wg8/12mo-CO2-lags-Had-SST3.jpg

This is old stuff. The ocean sink of CO2 is expected to be weaker in warmer years, which is why you see a correlation, but it is always a sink because the source is us, and you see that because it is acidifying too meaning it is gaining carbon.

The old stuff you referred to wasn’t my topic per se. Whether or not the ocean is becoming more acidic neither proves nor disproves whether the CO2 increase is from us.

There is a strong lagged correlation because the ocean temperature is driving the CO2 outgassing/uptake, which has nothing to do with us, it’s natural, as always.

The human contribution to ocean-driven atm CO2 is lost in the noise.

Even if man-made emissions impossibly were the whole CO2 signal you haven’t addressed the 10 month lag and the apparent reverse causality.

The temperature affects the sink, so this explains the lag. It is a sink because you can’t just dismiss that the ocean is gaining carbon and the atmosphere is gaining only half as much as we emit because the rest is going into the ocean and land. These crank ideas are completely devoid of any concept of a carbon budget.
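The carbon-budget argument in the comment above is a mass balance: if the atmosphere gains less carbon each year than humans emit, the ocean and land together must be taking up the difference. A rough sketch of the arithmetic follows. The emission and growth-rate figures are round illustrative assumptions rather than a dataset, the conversion of about 2.13 GtC per ppm is an approximate value, and the function name `implied_net_sink` is hypothetical.

```python
GTC_PER_PPM = 2.13  # approximate mass of carbon per 1 ppm of atmospheric CO2


def implied_net_sink(emissions_gtc, atm_rise_ppm):
    """Carbon (GtC/yr) that must leave the atmosphere for the budget to close."""
    return emissions_gtc - atm_rise_ppm * GTC_PER_PPM


# Illustrative round numbers (assumptions, not measurements):
emissions = 10.0   # GtC per year emitted by human activity
rise = 2.3         # ppm per year increase in atmospheric CO2

sink = implied_net_sink(emissions, rise)
airborne_fraction = rise * GTC_PER_PPM / emissions
print(f"net uptake by ocean and land: {sink:.1f} GtC/yr")
print(f"airborne fraction: {airborne_fraction:.2f}")
```

With numbers of this size the implied sink is positive, meaning ocean plus land are net absorbers, whatever the year-to-year wiggles in the uptake rate look like.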

Handwaving about a carbon cycle doesn’t get you out of the logical fail.

The temperature change precedes both the sourcing and sinking of CO2.

Thanks for the “projection”, but it’s your ideas that are the “crank stuff” as you haven’t really addressed the lag issue at all. Everyone can see for themselves that CO2 follows temperature – admit it or look foolish.

Your side is full of illogical cranks who cannot even reckon with the logical fail I’ve presented you, seemingly willing to accept reverse causality.

BTW, I made my graph yesterday, and I didn’t use Salby or Humlum et al. It’s called independent verification, and I didn’t need your permission to know what I found out is true.

When you can just ignore the carbon budget it makes it easier for you to come up with these wacko theories, right? Follow the carbon.

Cool:

“The temperature change precedes both the sourcing and sinking of CO2.”

Oh, the Humlum et al paper – ideologically motivated crap “science” …

https://www.sciencedirect.com/science/article/pii/S0921818113000908

“Humlum et al., 2013 conclude that the change in atmospheric CO2 from January 1980 is natural, rather than human induced. However, their use of differentiated time series removes long term trends such that the presented results cannot support this conclusion. Using the same data sources it is shown that this conclusion violates conservation of mass. Furthermore it is determined that human emissions explain the entire observed long term trend with a residual that is indistinguishable from zero, and that the natural temperature-dependent effect identified by Humlum et al. is an important contributor to the variability, but does not explain any of the observed long term trend of + 1.62 ppm yr− 1.”

https://www.sciencedirect.com/science/article/pii/S0921818113000891

“The paper by Humlum et al. (2013) suggests that much of the increase in atmospheric CO2 concentration since 1980 results from changes in ocean temperatures, rather than from the burning of fossil fuels. We show that these conclusions stem from methodological errors and from not recognizing the impact of the El Niño–Southern Oscillation on inter-annual variations in atmospheric CO2.”

https://www.sciencedirect.com/science/article/pii/S0921818113001562

“A recent study relying purely on statistical analysis of relatively short time series suggested substantial re-thinking of the traditional view about causality explaining the detected rising trend of atmospheric CO2 (atmCO2) concentrations. If these results are well-justified then they should surely compel a fundamental scientific shift in paradigms regarding both atmospheric greenhouse warming mechanism and global carbon cycle. However, the presented work suffers from serious logical deficiencies such as, 1) what could be the sink for fossil fuel CO2 emissions, if neither the atmosphere nor the ocean – as suggested by the authors – plays a role? 2) What is the alternative explanation for ocean acidification if the ocean is a net source of CO2 to the atmosphere? Probably the most provocative point of the commented study is that anthropogenic emissions have little influence on atmCO2 concentrations. The authors have obviously ignored the reconstructed and directly measured carbon isotopic trends of atmCO2 (both δ13C, and radiocarbon dilution) and the declining O2/N2 ratio, although these parameters provide solid evidence that fossil fuel combustion is the major source of atmCO2 increase throughout the Industrial Era.”
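The first critique quoted above says that differencing a time series removes its long-term trend, so an analysis of the differenced series cannot say what causes that trend. A toy sketch of the point follows, assuming a made-up monthly series built from a sine wave plus a straight line. It does not reproduce either paper's calculation, and the function name `twelve_month_change` is hypothetical.

```python
import math


def twelve_month_change(series):
    """Difference between each value and the value 12 steps earlier."""
    return [series[i] - series[i - 12] for i in range(12, len(series))]


# Hypothetical monthly series in arbitrary units (NOT real CO2 data).
months = range(480)
wiggle = [math.sin(2 * math.pi * m / 40.0) for m in months]   # short-term variability
trend = [0.15 * m for m in months]                            # steady long-term rise
with_trend = [t + w for t, w in zip(trend, wiggle)]

d_wiggle = twelve_month_change(wiggle)
d_with_trend = twelve_month_change(with_trend)

# After differencing, the entire linear trend survives only as a constant
# offset (0.15 * 12 = 1.8), which a correlation coefficient ignores.
offsets = [a - b for a, b in zip(d_with_trend, d_wiggle)]
print("min offset:", round(min(offsets), 6), "max offset:", round(max(offsets), 6))
```

Because the two differenced series differ only by a constant, any lagged correlation computed on them is the same whether the underlying rise is large, small, or absent. The lag therefore describes the wiggles and leaves the origin of the trend undetermined.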

My graph shows it is an upward curve of both CO2 forcing and the temperature. In fact CO2 forcing is more highly correlated with the temperature than the year is because these changes are nonlinear. Given the 93% correlation it is a good proxy to total forcing and can be used as a direct predictor. This high correlation is not surprising because CO2 forcing is 70-100% (most likely 85%) of the total. As such, when CO2 is doubled, a 2.3 C per doubling is what can be used for a predicted net effect. This is easier than doing it your way where you have to add back in the other ~50% of correlated forcing increase to get a final temperature change.

Hi Jim, I’m wondering what you think of the Lewis and Curry ECS estimate. I’m guessing you feel it’s a soft estimate. Can you explain why?

You don’t need models

Corroborated Possibility:

It has happened before

When CO2 levels were 400 ppm in the past, what was the sea level?

+9m

http://www.pnas.org/content/110/4/1209

If you base your scales of uncertainty on categorical variables (it has happened before) you will very quickly run into cases where people will quibble about the meanings of “It has happened before”

we know that at 400 ppm in the past that we have had warmer conditions than predicted by models and higher sea levels.

Let the special pleading begin.

But yes we can agree that 10C ECS is off the table.

More importantly, will we continue to subsidize people to rebuild in places that are prone to risk, like hurricanes?

Economic Losses, Poverty & Disasters 1998-2017

https://reliefweb.int/report/world/economic-losses-poverty-disasters-1998-2017

“In the period 1998-2017, disaster-hit countries reported direct economic losses of US$2,908 billion of which climate-related disasters accounted for US$2,245 billion or 77% of the total.

This compares with total reported losses for the period 1978-1997 of US$1,313 billion of which climate-related disasters accounted for US$895 billion or 68%.

In terms of occurrences, climate-related disasters also dominate the picture, accounting for 91% of all 7,255 major recorded events between 1998 and 2017. Floods, 43.4%, and storms, 28.2%, are the two most frequently occurring disasters.

The greatest economic losses have been experienced by the USA, US$ 944.8 billion; China, US$492.2 billion; Japan, US$376.3 billion; India, US$ 79.5 billion; and Puerto Rico, US$ 71.7 billion.”

“This report highlights the protection gap between rich and poor. Those who are suffering the most from climate change are those who are contributing least to greenhouse gas emissions. The economic losses suffered by low and lower-middle income countries have drastic consequences for their future development.

“Clearly there is great room for improvement in data collection on economic losses but we know from our analysis of the available data using georeferencing that people in low-income countries are six times more likely to lose all their worldly possessions or suffer injury in a disaster than people in high-income countries.”

The report concludes that climate change is increasing the frequency and severity of extreme weather events, and that disasters will continue to be major impediments to sustainable development so long as the economic incentives to build and develop hazard-prone locations outweigh the perceived disaster risks.

“Integrating disaster risk reduction into investment decisions is the most cost-effective way to reduce these risks; investing in disaster risk reduction is therefore a pre-condition for developing sustainably in a changing climate,” the report states.

If history could teach us anything we would stop building in vulnerable areas like low lying coastal zones and flood plains.

See Fig. 1 of the Foster and Rohling paper. CO2 varied from 200 to 280 ppm without SUVs in the past 550,000 years. The correlation with sea level is best explained by glacial-interglacial periods. In glacial periods, sea level decreased and oceans were cooler, absorbing more CO2. In interglacial periods, sea level increased and oceans were warmer, releasing more CO2.

If you apply the same correlation today, you have to assume the 400 ppm CO2 came from the oceans. What about the SUVs?

Pretty sure this is a Groundhog Day argument. In the past when sea levels were 9 meters higher, temperature was driving CO2. I hope I’ve indicated before now, it’s now CO2 driving temperature more than the other way around. We have 3 variables in the discussion: CO2, GMST and Sea Level.

CO2 > GMST > SL

SL > GMST > CO2

Now and Then.

Ice Sheets > SL > GMST > CO2

How can SL drive the GMST? How can the system be bistable? I’d bet on ice sheet mass driving rather than the GMST driving. The GMST is a result of various masses. Let’s try the opposite, Various masses are the result of the GMST. The glacial/interglacial cycle has a good link to the GMST and I’d suggest the average ocean temperature.

Paleo is going to suffer to some extent if CO2 is now the driver and before it wasn’t. Suffer in that, I don’t care what used to happen if the system is now in another mode. You can’t change the system and then use the past to make your points. To the extent it is the fastest mostest ever, unless you say, we didn’t change that part of it. All SLR is limited by mass. If ocean warming is not off the charts yet, it’s not going to go off the charts. If ocean warming is suppressing the GMST warming, how much more can it suck in in terms of joules per year? These background masses are going to determine the fastest mostest ever. It’s been a fun experiment. Look what we can do, look what mass can do. I think all my chains above are linear. Which of course is wrong.

Not Grant Foster1 and Stefan Rahmstorf but

Gavin L. Foster and Eelco J. Rohling.

Phew, you had me going for a moment there.

“we know that at 400 ppm in the past that we have had warmer conditions than predicted by models and higher sea levels.”

Warming seas and massive plant growth fostered by warmer conditions will cause CO2 to go over 400. You know this though. So why claim a cart before the horse privilege?

Rising CO2 can put up temps a little, your concern but claiming precisely which came first a million or 100 million years ago?

Only Foster number 1 would claim that.

Is it correct that ECS increases as GMST decreases, as per Ghil Figure 1:

On this basis, if ECS = 1.5 to 3.0°C when GMST =15°C, we should expect ECS will decrease as GMST increases.

Ghil, 2013, A mathematical theory of climate sensitivity

https://pdfs.semanticscholar.org/3df8/284c921d0194a443411db7a63772b659a79c.pdf

A question. It appears that a primary concern with our projections has to do with the amount of precipitable water in the upper troposphere. The empirical evidence that I have seen, one from satellite studies between 1988 and 2001, and a second from radiosonde data from 1948 to 2012, shows water vapor declining, in direct contradiction to the theory created in the Charney Report of 1979. Is there other empirical evidence that shows the theoretically expected increase in upper tropospheric water vapor, or are we simply relying on modelled expectations over reality?

If there is no additional empirical evidence, it would appear that modelled projections of temperature increase are starting from a base that is triple what is reasonable.

Thanks; awaiting a response from the moderator or a fellow reader.

drhealy,

Try this paper:-

http://www.pnas.org/content/111/32/11636

Thanks, I will read it thoroughly and add it to the collection I am compiling. It does concern me that this and virtually all of the other papers involve “modeling” and “re-analysis” of earlier work that sometimes showed contradictory conclusions. Perhaps it is too much to ask for, but I was hoping for straightforward empirical evidence, as opposed to modeling efforts.

Just had to think of an asylum where the inmates are investigating the probabilities of their delusions and visions. A major question is of course who will qualify as an expert. This is just the “climate” department. Wait until the other departments join the fun and start to cross-compare. At which moment the whole thing begins to somehow look so real ….

Perhaps someone should remind us that this is all about subjective probabilities. No way to give them objective meaning. There is just one event in the sample.

How did we land in this situation of such a serious science-policy mismatch?

Isn’t it because policies were predetermined in a doctrinal way (no science involved)?

When the UNFCCC was agreed in 1992, “climate change” was defined once and for all: “‘Climate change’ means a change of climate which is attributed directly or indirectly to human activity that alters…”.

Thus the troops were sent to take this hill, no other ones.

The whole matter is a delirious distraction from our prime task of unraveling how the Sun drives our weather pattern and ocean cycles, the stuff we need to know about most.

And the whole show rests upon unproven and irrational net positive feedbacks to increases in climate forcing.

When economists create models there can be no science involved, its bookmaking. It fully meets Feyman’s definition of a pseudo science. There are no laws and it cannot be proven.

Same thing happened with renewable energy. In the case of renewable energy they just made up their own laws, which said that subsidising renewable energy would make its known inadequate and intermittent energy sources far more intense and continuous, by law.

This creates problems in the engineering delivery of what engineers know is impossible. This does not stop economists subsidising it by law and hoping nobody notices it failing, as the laws of physics say it must.

As for climate change: what do you think stops the relentless rise of the interglacial stone dead while CO2 is still burgeoning? The answer is evaporation forming clouds, at 140 W/m^2 currently. So why should 1 W/m^2 of AGW not be returned to equilibrium by a small change in this effect, which occurs naturally by ocean warming?

Who seriously claims and can show how water vapour amplifies CO2 emissions to catastrophic tipping point runaway again rather than create the dominant clouds that cool things down?

Go figure. This applies to any other 2 or 3 W/m^2 of heat increase, which will be transferred to space along with increased albedo from greater cloud formation that will simply maintain the equilibrium. That is why the planet has such a stable ice age cycle between very close limiting temperatures, and doesn’t freeze over or boil off the oceans. It’s the clouds, stupid!

Now that’s what I call a climate model: it uses the facts and explains what happens in basic physics terms. No belief is required to grasp the massive forces at work relative to human impact. No need to believe mumbo jumbo models that are in fact ignoring a chunk of the environment completely (oceans and lithosphere are not passive or constant in this), and that assume pre-agreed causes and effects which the models are engineered to track by the assumptions of the climate priests who programme them, with many wholly presumptive assumptions that are the antithesis of the reality that physics does understand. One key example is the dominant negative feedback control of water vapour on temperature during interglacials. Cloud control. Why does any real physicist accept the science-denying nonsense that asserts otherwise? Show me the physics!

The simple yin and yang of it is that, the network of feedback loops (respect for life, liberty, property and acceptance of personal responsibility) also can ’cause’ greatness beyond human imagination.

The Sun will continue to heat the oceans or not, without our help, and there’s nothing humans can do about it and that’s not a theory: it is a fact, even if we eagerly sacrifice the constitution, the country, capitalism, liberty and the scientific method on the altar of global warming alarmism because we read in the NYT that humanity’s CO2 is a dangerous pollutant that causes global warming [i.e., AGW theory]. Nevertheless, when the oceans begin to cool there will be no global warming, irrespective of Westerners’ theorizing about AGW.

“Climate science has made a massive framing error, in terms of framing future climate change as being solely driven by CO2 emissions”.

It’s framed that way because CO2 is the thing that human beings can change. We are not going to derive future policy over the possibility of a huge volcanic eruption.

There are no rational grounds for predicting chaos-causing, continually increasing average global warming. Whether the atmosphere as a whole is warming or cooling, a pragmatist can only conclude global warming has been good for humanity. No one is clamoring for a colder Earth.

Odd that someone so aware that energy is the line integral of force over distance leaves out of consideration the initiation of force, and power, that politicians can practice with impunity in a mixed or socialist economy.

I see no problem. If the earth warms 10 degrees, that means the entire Russian continent becomes a paradise. Putin opens up immigration to Orthodox Christians around the world, we go settle our 40 acres and the West can burn in the godless Hell that it has already become.

-d

The CO2 control knob is rather simplistic and too coarse for decadal time scales. A better analogy would be an airplane:

> CO2 is like the trim wheel.

> Water vapor and aerosols are like the stick and rudder pedals.

> Science is like the instruments. It’s probably a good idea to reset the gyros once in a while.

> The methane time bomb is like the mountain off on the horizon that we’re trying to avoid crashing into.

Methane feedbacks are not entirely positive. Meaning there is a studied biological response to arctic methane release.

Curry presents a laudable breakdown of risk assessment for politicians and other laymen. The portrayal of the “CO2 control-knob” as rubber ducky is particularly apt. Natural variability, however, is by no means any black swan, but as commonplace as mallards in Canada. Only irretrievably politicized minds can argue otherwise.

John, this is what I find so frustrating. Dr. Curry rightly explains the wide margins in ECS estimates and she is crowned the queen of “uncertainty”. Why is it such a cardinal sin to demonstrate the disagreement?

It is really strange that the diagram “Classifying Possibilities” shows possibilities going in all directions from the “Strongly Verified”, yet both diagrams of “Equilibrium Climate Sensitivity to Doubled CO2” show possibilities going in only one direction from the “Strongly Verified”. That is, nothing less than 1 C.

Murphy’s Law is “Anything that can go wrong (in a scientific theory) will go wrong (in a scientific theory).” Especially when billions of dollars have been spent proving the theory and everyone has been told they are idiots if they don’t accept the theory.

My guess is the sensitivity to a doubling of CO2 is less than 1 degree C.

Cheers and have fun.

“More striking is that the [AGW] hypothesis is not testable. It cannot be falsified. The alternate hypothesis, the network hypothesis, is rooted in observation…” ~Marcia Wyatt

Rainfall in the Mediterranean Basin is influenced by ocean surface temperatures in the tropical Pacific and the north Atlantic. The variability in ocean surface temperature year to year, decade to decade, century to century result in persistent regimes of droughts and floods. Because of the importance of Nile River flows to the Egyptian civilisation water levels have been measured for 5,000 years and recorded for more than 1,300. The ‘Nilometer’ – known as al-Miqyas in Arabic – in Cairo dates back to the Arab conquest of Egypt. The Cairo Nilometer has an inner stilling well connected to the river and a central stone pillar on which levels were observed. The exterior of the stilling well can be seen in the photo below. The Nilometer remained useful until the 20th century when major dams changed the Nile River flow regimes. – http://www.waterhistory.org/histories/cairo/

https://watertechbyrie.files.wordpress.com/2015/06/nilometer.jpg

In a field in which observation and an abductive mode of inference is the primary guide – such a record is an amazing resource. And because it’s even more chaotic than random.

https://watertechbyrie.files.wordpress.com/2018/05/nile-e1527722953992.jpg

In mentioning the Egyptian Nilometer it is wise to remember Plato saying that, according to the Egyptian priests, Egypt was not affected by events [in the Holocene]. The priests were talking about the sun changing its place of rising, besides other things.

That said, events in the Mediterranean were far wilder than they are made out to be. Given the recent earthquakes around Etna and the mention of a possible flank slump, note that this article: https://agupubs.onlinelibrary.wiley.com/doi/pdf/10.1029/2006GL027790 [at 28] refers to an earlier event around 7k6 years ago (Holocene max). However, that date marks events elsewhere as well, making the Etna event one of several concurrent events. Note from the link here: https://melitamegalithic.wordpress.com/2018/07/24/searching-evidence-update/ that around 7k7 BP (5k7bce) there was a peak in the Eddy cycle, correlating with an abrupt change in polar and equatorial temperature trends. Those dates also correlate with seismic geological events, a whole series of them, that have left their record in archaeology and in a host of proxies from around the world.

Then note also the temperature anomaly level. Those were taken from Wiki. Taken at face value, the chronological correlation was the important aspect here. Now we are heading for another Eddy peak. (They say forewarned is forearmed).

I have been getting a little into megalithic ‘dragon-kings’ – event outliers esp. dynamical – lately.

“Solar activity during the current sunspot minimum has fallen to levels unknown since the start of the 20th century. The Maunder minimum (about 1650–1700) was a prolonged episode of low solar activity which coincided with more severe winters in the United Kingdom and continental Europe.” http://iopscience.iop.org/article/10.1088/1748-9326/5/2/024001/meta

But I was thinking of primary operators on major Earth sub-systems. Solar intensity has been linked to surface pressure – and therefore wind fields – in the polar annular modes. Systems with internal feedback loops – but nonetheless solar modulated to some degree.

https://watertechbyrie.files.wordpress.com/2017/01/nao_fig_4-e1520191198670.jpg

A future more stormy and colder for some northern climes, cooler by degrees C in Central England, reduced AMOC and increased ice sheet growth – for centuries. More meridional winds in a cooler sun drive great ocean gyres and set the scene – in both hemispheres – for enhanced upwelling on the western margins of continents. Cool water at the surface of the eastern and central Pacific enhances closed cell, marine strato-cumulus cloud cover – increasing albedo from that of the dark ocean below to bright cloud over much of the global tropics. And thus with more upwelling an internal feedback loop with a negative energy storage dynamic and a cooling planet?

https://watertechbyrie.files.wordpress.com/2017/04/gyre-impact-e1532565836300.png

Your earlier comment on ‘Dragon kings’ has got me intrigued. However, note that the only solid evidence – the real dragon – to work on is in my second link, at the top of the chart. IMO some of the rest in DK theory is ‘looking for elephants with a microscope’.

Didier Sornette – https://arxiv.org/abs/0907.4290

According to Bjorn Lomborg in The Weekend Australian:

• Paris commitments will cost $1-2 trillion p.a. from 2030 by increasing energy costs, thereby reducing global GDP growth.

• This will do nothing to change the climate.

• Climate scientists say keeping global warming to less than 2C will require reducing emissions by 6000 Gt CO2.

• But Paris commitments, if achieved, will cut emissions by just 56 Gt CO2 by 2030, i.e. leaves 99% in place.

• WHO estimates that since the 1970s climate change has caused 140,000 deaths p.a., and projects 250,000 p.a. by 2050.

• For $5 billion per year (i.e. 0.5% of the Paris commitment’s increased cost of energy) more than 1 million deaths from TB could be avoided per year.

• More than $30 billion of OECD aid is climate related.

• That is more than three times what would be needed to eradicate the world’s worst infectious diseases.

• Nearly 10 million people responded to a survey in which they were asked their policy priorities. The answer:

• Education and better healthcare ranked top priorities.

• Climate policies were bottom of the list.
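For anyone who wants to check the arithmetic behind the percentages in the list above, here is a minimal sketch. It only reuses the figures as quoted in this comment (6000 Gt, 56 Gt, $1-2 trillion, $5 billion); the variable and function names are made up for illustration, and none of the underlying numbers has been independently verified.

```python
# Sanity check of the percentages quoted in the bullet list above.
# All inputs are the figures as quoted in the comment; they are not
# independently verified. Names are illustrative only.

REQUIRED_CUT_GT = 6000.0   # Gt CO2 reduction said to be needed to stay under 2C
PARIS_CUT_GT = 56.0        # Gt CO2 cut attributed to the Paris commitments by 2030

PARIS_COST_LOW = 1e12      # low end of quoted annual cost, USD
PARIS_COST_HIGH = 2e12     # high end of quoted annual cost, USD
TB_COST = 5e9              # quoted annual cost of the TB programme, USD


def percent(part: float, whole: float) -> float:
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


# Share of the required reduction delivered by Paris, and what is left over.
cut_share = percent(PARIS_CUT_GT, REQUIRED_CUT_GT)
remaining_share = 100.0 - cut_share

# TB programme cost as a share of the quoted Paris cost range.
tb_share_low = percent(TB_COST, PARIS_COST_LOW)
tb_share_high = percent(TB_COST, PARIS_COST_HIGH)

print(f"Paris cut as share of required cut: {cut_share:.2f}%")
print(f"Share of required cut left in place: {remaining_share:.2f}%")
print(f"TB cost as share of $1 trillion: {tb_share_low:.2f}%")
print(f"TB cost as share of $2 trillion: {tb_share_high:.2f}%")
```

On these inputs the Paris cut comes to a little under 1% of the stated requirement, which is consistent with the “leaves 99% in place” figure, and the 0.5% figure for TB corresponds to the low end ($1 trillion) of the quoted cost range.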

A recent Dutch report on future sea level rise relied completely on the scenario that half of the Antarctic Icecap could melt this century. Should the Dutch policymakers take notice of this report?

Err, no …..

The WAIS is not “half of the Antarctic Icecap”.

And it was up to 2130.

file:///C:/Users/Tony/Downloads/waishollandwp.pdf

“A possible effect of the ongoing change in the climate system would be the disintegration of the West-Antarctic Ice Sheet (WAIS). Such event would lead to a 5 to 6 m sea level rise. Although chances are small, the consequences are enormous. Obviously, communities in low elevation coastal areas would be threatened. However, what more is there to say about this risk? What could actually happen under such 5 m SLR? This question – the topic of the present paper – is relevant, for instance when considering policies to avoid and adapt to climate change.”

What if the Sun does not rise tomorrow? Although chances are small, the consequences are enormous. Let’s better sacrifice another human.

And then a handful of Spaniards conquered the Aztec empire.

Hi Tony, can’t access your c-drive :-) you are referring to https://www.researchgate.net/publication/24130188_Neo-Atlantis_Dutch_Responses_to_Five_Meter_Sea_Level_Rise

Recently there was a report that under RCP8.5 a three meter sea level rise was possible.

https://www.deingenieur.nl/uploads/media/5ba1137c88916/Zeespiegelstijging.jpg

But how likely is it, and what are the boundary conditions? Quite important for the Dutch taxpayer.

We need to wait for Zwally to publish

Regarding worst case scenarios, policies to reduce global warming are based on what may be a false premise – i.e. that a few degrees of global warming from current icehouse conditions is dangerous. Various lines of evidence suggest global warming would be beneficial, not damaging and not dangerous. One of them is empirical paleo evidence.

Below is a summary of some key points from a discussion on the previous thread, in response to this comment https://judithcurry.com/2018/10/08/1-5-degrees/#comment-881915. The temperatures quoted here are from Scotese 2018, Phanerozoic Temperature chart, p.3 here: https://www.researchgate.net/publication/324017003_Phanerozoic_Temperatures_Tropical_Mean_Annual_Temperature_TMAT_Polar_Mean_Annual_Temperature_PMAT_and_Global_Mean_Annual_Temperature_GMAT_for_the_last_540_million_years

• Optimum GMST for life on Earth over the past 500 million years is centred on about 22°C, which is about 7°C warmer than now (GMST is now about 15°C).