by Judith Curry

Interest is running high this week on the topic of climate sensitivity.

Nic Lewis’ analysis of the consequences of reduced aerosol forcing is creating a stir. Plus this week there is a Workshop on Climate Sensitivity in Germany (details at RealClimate); hashtag #ringberg15.

The topic I want to focus on in this post is what we can infer about the upper bound of climate sensitivity, and structural uncertainty in how we even approach this problem.

Tail risk

The economic value of CO2 mitigation depends sensitively on the possibility of extreme warming. This insight has been obtained through a focus on the fat upper tail of the climate sensitivity probability distribution.

In fact, some have argued that more uncertainty in the extremes means more urgency to tackle global warming. My counterargument to this is provided in the post Uncertainty, risk and (in)action; see also Tall tales and fat tails.

In light of recent research on climate sensitivity, it is time to revisit these ‘fat tails.’ My previous post Worst case scenario versus fat tails describes a way forward, in the context of scenario falsification.

History of IPCC sensitivity estimates

An interesting overview of the history of climate sensitivity estimates is provided by Euan Mearns. Below is a summary of the IPCC assessments of equilibrium climate sensitivity (ECS):

FAR (1990): The range of results from model studies is 1.9 to 5.2°C. Most results are close to 4.0°C but recent studies using a more detailed but not necessarily more accurate representation of cloud processes give results in the lower half of this range. Hence the models results do not justify altering the previously accepted range of 1.5 to 4.5°C. Taking into account the model results, together with observational evidence over the last century which is suggestive of the climate sensitivity being in the lower half of the range, a value of climate sensitivity of 2.5°C has been chosen as the best estimate.

SAR (1995): No strong reasons have emerged to change [FAR] estimates of the climate sensitivity.

TAR (2001): likely to be in the range of 1.5 to 4.5 °C.

AR4 (2007): Equilibrium climate sensitivity very likely is greater than 1.5 °C (2.7 °F) and likely to lie in the range 2 to 4.5 °C, with a most likely value of about 3 °C. A climate sensitivity higher than 4.5 °C cannot be ruled out.

AR5 (2013): Equilibrium climate sensitivity is likely in the range 1.5°C to 4.5°C (high confidence), extremely unlikely less than 1°C (high confidence), and very unlikely greater than 6°C (medium confidence).

The bottom line is that ECS estimates have remained within the range 1.5-4.5C for decades, with a brief diversion of the lower bound to 2.0C in the AR4. FAR has a best estimate of 2.5C; AR4 provides a best estimate of 3.0C; AR5 declines to provide a best estimate owing to disagreement between climate model and observational methods. The other significant statement of AR5 vs AR4 is ‘very unlikely greater than 6C (medium confidence)’, whereas AR4 declined to specify any kind of upper limit.

The ‘tails’

Let’s take a look at the sensitivity distributions provided by the AR4, AR5, plus Nic Lewis’ recent analyses.

First, the equilibrium climate sensitivity, with the gray shading reflecting the 1.5-4.5C likely range of the AR5.

ECS from the AR4:

ECS from the AR5, instrumental estimates:

ECS from the AR5, climate models:

ECS from Nic Lewis, revised for new estimates of aerosol forcing [link].

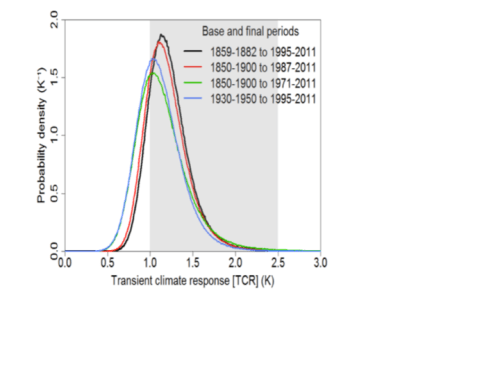

And now, for the transient climate response (TCR), with shading from 1.0-2.5C (reflecting the AR5 ‘likely’ range). Note, the AR5 states: it is very unlikely that TCR is less than 1°C and very unlikely that TCR is greater than 3.5°C.

TCR from the AR4:

TCR from the AR5, instrumental and climate model estimates:

TCR from Nic Lewis, revised for new estimates of aerosol forcing.

I’ve tried to align the scales for ECS and TCR. The new distributions of climate sensitivity calculated by Nic Lewis are strikingly lower than the AR4/AR5 estimates, particularly with regard to the upper tail.

Clarifying the fat tail: Modal falsification

On a 2010 thread (can’t find it now), I stated that I thought the ‘very likely’ range for equilibrium climate sensitivity was something like 0.5-10C (I can’t exactly recall the lower bound). My rationale for this was the AR4 ECS figure (shown above). I had no reason at that time to ‘reject’ any of the values shown in that figure.

Gregor Betz defines modal falsification as follows [BetzModalFalsification]:

Modal falsification: It is scientifically shown that a certain statement about the future is possibly true as long as it is not shown that this statement is incompatible with our relevant background knowledge, i.e. as long as the possibility statement is not falsified.

Nic Lewis’ research arguably falsifies the high values of climate sensitivity determined from instrumental data, owing to problems with the statistical methodology and the forcing data. IMO, Nic’s methodology for determining climate sensitivity from observations and an energy balance model represents the best current method.

With regards to climate model determinations, see this paper: The upper end of climate model temperature projections is inconsistent with past warming, by Peter Stott, Peter Good, Gareth Jones, Nathan Gillett, Ed Hawkins, published in Environmental Research Letters (open access) [link]. This paper does not directly address the issue of climate sensitivity. It is obvious from comparing climate model simulations with observations that most climate models are running too hot for the early years of the 21st century.

Some recent inferences have been made that reduce the upper likely bound for ECS. James Annan has stated: “It’s increasingly difficult to reconcile a high climate sensitivity (say over 4C) with the observational evidence for the planetary energy balance over the industrial era.”

At the Ringberg Workshop, the title of Bjorn Stevens’ talk is: Some (not yet entirely convincing) reasons for 2.0 < ECS < 3.5C. I suspect that these reasons are based on inferences from climate models.

JC assessment of climate sensitivity

Here is my assessment, based on current background knowledge.

Climate models are not fit for the purpose of determining TCR, owing to their limited capability to simulate the patterns and phasing of decadal to multi-decadal internal variability.

That leaves the energy balance climate models using historical observations, which seem well suited to determining TCR. Nic Lewis’ analysis is arguably ‘best in class’, providing two recent analyses:

- AR5 forcing: 1.05 – 1.8C

- New (lower) aerosol forcing: 1.05 – 1.45C

Compare these values to the AR5 likely range for TCR of 1.0-2.5C. I would place ‘medium confidence’ on Nic Lewis’ ranges of TCR (I’m not sure we’ve heard the last word on aerosol forcing, but I strongly suspect that the lower bound is less than what was used in the AR5).
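As a rough illustration of how an energy balance estimate responds to the forcing change, here is a minimal Python sketch. It assumes the commonly used energy budget relations TCR ≈ F2x·ΔT/ΔF and ECS ≈ F2x·ΔT/(ΔF − ΔQ); whether this matches the exact procedure behind the ranges quoted above is an assumption. The function names and all input numbers are hypothetical placeholders, not values taken from this post.

```python
# Illustrative energy budget estimate of TCR and ECS.
# ASSUMPTION: every number below is a placeholder chosen for illustration.

F_2X = 3.71  # W/m^2, an often-quoted forcing for doubled CO2 (assumed here)


def tcr_estimate(delta_t, delta_f, f_2x=F_2X):
    """Transient response: observed warming scaled to a CO2 doubling."""
    return f_2x * delta_t / delta_f


def ecs_estimate(delta_t, delta_f, delta_q, f_2x=F_2X):
    """Equilibrium sensitivity: heat uptake is subtracted from the forcing."""
    if delta_f <= delta_q:
        raise ValueError("forcing change must exceed heat uptake change")
    return f_2x * delta_t / (delta_f - delta_q)


delta_t = 0.7  # K, change in global mean surface temperature (placeholder)
delta_q = 0.4  # W/m^2, change in ocean heat uptake (placeholder)

# A weaker (less negative) aerosol forcing means a larger total forcing change.
tcr_base = tcr_estimate(delta_t, delta_f=2.0)
tcr_weaker_aerosol = tcr_estimate(delta_t, delta_f=2.3)
ecs_base = ecs_estimate(delta_t, delta_f=2.0, delta_q=delta_q)

print(f"TCR, base forcing:           {tcr_base:.2f} K")
print(f"TCR, weaker aerosol forcing: {tcr_weaker_aerosol:.2f} K")
print(f"ECS, base forcing:           {ecs_base:.2f} K")
```

Because the forcing change sits in the denominator, the same observed warming implies a lower sensitivity when the net forcing change is larger, which is the direction of the shift between the two ranges listed above.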

The situation is much more complex and uncertain for ECS determinations. The energy balance method is associated with uncertainties in ocean heat uptake, which is probably rather variable. Failure to adequately simulate internal variability is much less relevant when climate models are run to equilibrium. However, most of the climate models are using aerosol forcing that is too large, which is masking a sensitivity to greenhouse gases that is also too large.

Using Nic Lewis’ method for determining uncertainty, meaningful pdfs can be determined (and hence a statistically meaningful ‘likely’ range can be determined). Once climate models are in the mix, I have argued previously (Probabilistic estimates of climate sensitivity) that Bayesian analysis and expert judgment are not up to the task of providing limits to the range of expected climate sensitivity. Simply put, the collection of climate model simulations does not comprise a meaningful pdf.

So what to do? Betz’s modal falsification provides a way forward. Look at simulations from each climate model (preferably an ensemble) and see if there are any reasons to reject that model. Reasons would include using a highly unrealistic value of aerosol forcing, or simulations that do not agree with observations. Figuring out how to actually do this in a meaningful way should be the main activity of climate model ensemble interpretation, IMO.
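To make the culling idea concrete, here is a minimal Python sketch of one way it might be organized. Everything in it is hypothetical: the `ModelRun` fields, the model names, the numbers, and the two rejection thresholds are invented for illustration, and choosing defensible rejection criteria is exactly the open problem described above.

```python
from dataclasses import dataclass


@dataclass
class ModelRun:
    name: str
    ecs: float              # K per CO2 doubling
    aerosol_forcing: float  # W/m^2, more negative means stronger cooling
    hindcast_bias: float    # K, simulated minus observed warming


def surviving_models(runs, min_aerosol_forcing, max_abs_bias):
    """Keep only the runs for which no rejection criterion applies."""
    kept = []
    for run in runs:
        unrealistic_aerosol = run.aerosol_forcing < min_aerosol_forcing
        poor_hindcast = abs(run.hindcast_bias) > max_abs_bias
        if not (unrealistic_aerosol or poor_hindcast):
            kept.append(run)
    return kept


def ecs_bounds(runs):
    """Lowest and highest ECS among runs that have not been rejected."""
    values = [run.ecs for run in runs]
    return min(values), max(values)


# HYPOTHETICAL ensemble: names and numbers are invented placeholders.
runs = [
    ModelRun("model_a", ecs=2.1, aerosol_forcing=-0.6, hindcast_bias=0.05),
    ModelRun("model_b", ecs=3.2, aerosol_forcing=-0.9, hindcast_bias=0.10),
    ModelRun("model_c", ecs=4.7, aerosol_forcing=-1.6, hindcast_bias=0.30),
    ModelRun("model_d", ecs=4.0, aerosol_forcing=-1.4, hindcast_bias=0.15),
]

kept = surviving_models(runs, min_aerosol_forcing=-1.0, max_abs_bias=0.2)
print([run.name for run in kept])
print(ecs_bounds(kept))
```

The output is a set of possibilities that have not been falsified, with a lowest and highest member. It is not a probability distribution, which is the distinction drawn above between discrete simulations and a meaningful pdf.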

Where does this leave us for now, in terms of bounding ECS?

- lower bound: I would use Nic Lewis’ values for the lower bound: 1.05 (for the 5-95% range) and 1.2 (for the 17-83% range)

- upper bound: we need to look at climate models, for which there is no meaningful pdf; we can only look at discrete simulations. The highest model simulations seem to be slightly less than 5C. Once the simulations are culled to reject models with very large aerosol forcing, I suspect that this upper bound will drop substantially (perhaps to Bjorn Stevens’ value of 3.5C).

Compare to the AR5’s ‘likely’ range of 1.5-4.5C. I would place low/medium confidence on these numbers. The most striking issue is that the observational estimates are almost completely outside the range of the climate model simulations.

There is one climate model that falls within the range of the observational estimates: INMCM4 (Russian). I have not looked at this model, but on a previous thread RonC makes the following comments.

On a previous thread, I showed how one CMIP5 model produced historical temperature trends closely comparable to HADCRUT4. That same model, INMCM4, was also closest to Berkeley Earth and RSS series.

Curious about what makes this model different from the others, I consulted several comparative surveys of CMIP5 models. There appear to be 3 features of INMCM4 that differentiate it from the others.

1. INMCM4 has the lowest CO2 forcing response at 4.1K for 4XCO2. That is 37% lower than the multi-model mean.

2. INMCM4 has by far the highest climate system inertia: deep ocean heat capacity in INMCM4 is 317 W yr m⁻² K⁻¹, 200% of the mean (which excluded INMCM4 because it was such an outlier).

3. INMCM4 exactly matches observed atmospheric H2O content in lower troposphere (215 hPa), and is biased low above that. Most others are biased high.

So the model that most closely reproduces the temperature history has high inertia from ocean heat capacities, low forcing from CO2 and less water for feedback.

Definitely worth taking a closer look at this model, it seems genuinely different from the others.

Structural uncertainty and unknowability

So, how are we going to resolve this issue? Are we running in circles and chasing our tails, with the same ‘official’ range of 1.5-4.5C since the 1979 Charney report?

Will the Ringberg Workshop this week move things forward? Well, I’m not optimistic. Last week I tweeted:

wkshp seems to miss discussion of structural uncertainty in ECS/TCR determination

Tamsin responded:

Er…how do you know that if it hasn’t happened yet…?

Well, the list of participants and the talk titles and suggested post-AR5 papers all omit what I regard to be the elephant in the room: the confounding factor of natural internal variability. Lewis and Curry (2014) gave a nod to this by choosing baseline periods to be compatible in terms of the AMO index. This partially addresses the issue, but there are other modes of multidecadal variability and there are also longer term modes; we have no idea to what extent a longer term oscillation might be contributing to the overall warming since circa 1600.

There is an important paper What is the effect of unresolved internal variability on climate sensitivity estimates?, that was discussed on this previous thread Meta-uncertainty in the determination of climate sensitivity. Punchline:

We demonstrate that a single realization of the internal variability can result in a sizable discrepancy between the best CS estimate and the truth. Specifically, the average discrepancy is 0.84 °C, with the feasible range up to several °C. The results open the possibility that recent climate sensitivity estimates from global observations and EMICs are systematically considerably lower or higher than the truth, since they are typically based on the same realization of climate variability. This possibility should be investigated in future work. We also find that estimation uncertainties increase at higher climate sensitivities, suggesting that a high CS might be difficult to detect.

IMO, the most important climate sensitivity paper published post-AR5 is by Michael Ghil: A mathematical theory of climate sensitivity, or how to deal with both anthropogenic forcing and natural variability? (discussed at CE here). No sign of Ghil’s ideas at this Workshop. See also this paper by Zaliapin and Ghil: Another look at climate sensitivity.

There are major structural uncertainties in climate models that contribute to problems with sensitivity, particularly related to the fast thermodynamic feedbacks (clouds, water vapor, lapse rate), a topic that I discussed on a previous thread Model structural uncertainty – are GCM’s the best tools? It looks like some of these issues will be discussed at Ringberg.

There are also structural uncertainties in the energy balance model methods, in addition to the issue of ocean heat uptake. Some of these are being addressed in a new paper by Nic Lewis that is under review (I suspect that this will be the topic of his talk at Ringberg).

Where does this leave us? Well it is difficult to argue that we should have more than ‘medium’ (at best) confidence in the range of climate sensitivity estimates.

It seems that we are on the verge of some progress in addressing model structural uncertainties, but there does not seem to be a path forward by this group to address the meta-issue of natural internal variability as structural uncertainty.

JC conclusions

Our methods of inferring climate sensitivity – using GCM climate models and energy balance models – are leading us to reject the highest values of climate sensitivity that were determined using methods or models that have been deemed erroneous.

In short, the ‘fat tail’ of climate sensitivity is getting skinnier and shorter. Does this imply that ‘true’ climate sensitivity is relatively low? No. The absence of evidence and incompleteness of our knowledge (e.g. low/medium confidence) does not rule out unforeseen and unforeseeable extreme values of climate sensitivity.

So how are we to interpret our current understanding (and lack of understanding) of climate sensitivity in terms of policy? Well that will be the topic of a forthcoming post. The bottom line is that the justification for high values of climate sensitivity (using global and energy balance models) continues to diminish.

I hope that the Ringberg Workshop will be productive and interesting. I will do a post on that once the talks are online.

http://c3headlines.typepad.com/.a/6a010536b58035970c017eea5c93d4970d-pi

Here is the data. I forgot AGW theory throws the data out if it does not conform to their theory.

I think this is topical to CS.

What kind of temperature change are we interested in?

For instance:

The range of temps from the minimum temps of the year, the coldest night of winter, to the maximum of the summer?

Or are we interested in that it warms earlier (and later) in the year, even if the max temps don’t go up.

Or that on a daily basis does CO2 cause the day to be a little warmer?

All of these would show up in an average annual temperature as an increase.

They all mean different things, and station data has to be looked at specifically for each case. For instance when you look at yesterday’s warming compared to last night’s cooling, cooling is an almost exact match to warming, even in years that have a larger range.

How CO2 affects temperatures is more than a change in average temp, especially since almost none of the changes in temp are a positive trend; they are regional swings, or changes in the rates at which temps change over the year, but when temps are up so is night time cooling.

http://www.science20.com/sites/all/modules/author_gallery/uploads/543663916-global.png

http://www.science20.com/sites/all/modules/author_gallery/uploads/1871094542-global.png

Whatever caused the temperature cycles of the past has not stopped.

Climate sensitivity to CO2 is very close to zero, plus or minus some tiny amount.

Temperature has not deviated from the natural cycle of the past ten thousand years. The Roman Warm time and the Medieval Warm time were just like this Warm time. Temperature is doing exactly what it should be doing.

The Models have been worse than useless. Find out what is missing from the models or quit using them.

When oceans get warm, the polar oceans, in the north and the south, become open and there are more clouds and more rain and more snow and the warming ends and cooling starts, every time.

Climate sensitivity to CO2 is very close to zero, plus or minus some tiny amount that cannot be detected with actual data.

When you say “close to zero” what do you mean?

My own rough estimate is based on the following. First we need the direct effect of CO2. I wish we had something other than just Hermann Harde’s work with the “latest” HITRAN (0.6C for a doubling of CO2), although as it’s 2007 it’s almost ancient. But still far better than the 1998 HITRAN figure of 1C used by the IPCC.

So, I use the 0.6 for a doubling of CO2. Then as negative feedbacks will dominate in an interglacial, we can simply estimate the amount of warming as slightly less than 0.6C so perhaps 0.3-0.5C – because that scale of feedback would have the rather modestly stable climate we see in interglacials.

http://popesclimatetheory.com/page16.html

Manmade CO2 is around 100 parts per million of the atmosphere.

That is one molecule per ten thousand.

That is 0.0001

The influence of that cannot be measured to be different than zero.

if manmade influence does cause CO2 to double, then the manmade part would be more than the natural part.

Man’s input to CO2 is tiny compared to what nature puts in and takes out every year. Nature can make small adjustments and blow our part away.

Even dreaming about humans controlling the temperature of earth by controlling CO2 is really sick.

Hm. A bunch of papers to read, there.

While it doesn’t follow that high values of sensitivity can be completely ruled out, though, looking at it as a Bayesian it would appear that the posterior distribution is going to be both narrower and farther to the low end than it has been. If that’s true, then other forcings are more important and the cost-benefit ratio of major carbon reduction plans is much reduced.

Allo Chaco, join in the Ring.

========

In the room the elephant is loudly trumpeting

but consensus-confirmation-bias’s’ trumping

the blasts of heresy regarding climate sensitivity.

If the only tool you have is a hammer, every problem looks like something you can hit.

Likewise, when the only tool you consider is positive “climate sensitivity”, it appears any old noise looks like positive climate sensitivity.

When will anyone start talking about climate insensitivity? Or the fact that inter-glacials are patently stable and therefore have negative feedback to temperature rise?

Or am I just being insensitive not joining in the hug-fest pats on the back for anything with positive feedbacks?

All of the estimates for climate sensitivity are wrong because the peak should be centered on what has been measured, zero.

From 1690-1730 Central England saw a massive rise in temperature at a time when there would have been virtually no increase in CO2. From 1970-2000 we saw a much lower level of warming when CO2 was present. From this, we might as well deduce that CO2 reduces change as that it causes change. But no, the “normal” climate is falsely taken to be unchanging from which all change is then falsely attributed to a scaled up warming from CO2.

If someone did that kind of nonsense in economics, they’d never get away with it, but print this rubbish on climate and it is deemed to be “settled science” or something equally crazy.

the “normal” climate is falsely taken to be unchanging from which all change is then falsely attributed to a scaled up warming from CO2.

In some documents, that is called “the hockey stick”

http://jennifermarohasy.com/2013/12/agw-falsified-noaa-long-wave-radiation-data-incompatible-with-the-theory-of-anthropogenic-global-warming-2/

More data which again is being ignored. This to me is devastating data for the AGW cause. I do not understand why this is not receiving more attention.

When you contrast this data, along with other data, with estimates on climate sensitivity to CO2, the high end estimates do not hold water.

I argue that the reality is the opposite, which is not how sensitive the climate is to CO2, but rather CO2’s sensitivity to the climate. Data shows that CO2 FOLLOWS the temperature and does not lead it, which lends support to the view that it is CO2 which is sensitive to the climate, not the other way around.

Then again as I am going to stress over and over again AGW theory does not acknowledge data if it does not conform to their theory.

Salvatore del Prete: http://jennifermarohasy.com/2013/12/agw-falsified-noaa-long-wave-radiation-data-incompatible-with-the-theory-of-anthropogenic-global-warming-2/

Thank you for the link.

http://data.giss.nasa.gov/modelforce/

I would argue much of the data here needs to be reevaluated and adjusted.

Is that a polite way to say “thrown away”?

Dr Curry

Bjorn Stevens recently co-authored “Adjustments in the forcing-feedback framework for understanding climate change”. The authors describe it as a new paradigm. It looks relevant to this thread.

Richard

Hi Richard, thanks for the link, I was just reading this paper today.

So, what’s your take on it? Will you write a blog about it? Are we seeing the first steps towards acknowledging that the climate system is intrinsically more complex than has been suggested for over three decades now? Is there finally room for new ideas along the line of thoughts of for example Robert Brown @ Duke (but there are others) without actually being ridiculed and ostracized? Is this the start of the climate community (re-)discovering non-linearity?

Unfortunately, the irony of the architect of the schloss, urges the works grand.

============

Maybe they could just pitch a hockey stick off the tower. I’m all for symbolic instead of living sacrifices.

===========================

OK, OK, OK, pitch the bloody fat tail off the tower. That’s been the architect of present fearful disasters from future fantastic disasters after all.

=================

Knutti 2002 seems like one that takes variance (slightly) more sensibly in that it goes up and down like the Assyrian Empire.

It has a fat tail shaped like the Loch Ness Monster.

Here’s a link to the paper:

http://journals.ametsoc.org/doi/pdf/10.1175/BAMS-D-13-00167.1

Richard

Thanks for that link. I skimmed an earlier draft of that paper not long ago. Nice to see it has been accepted.

richardswarthout: http://journals.ametsoc.org/doi/pdf/10.1175/BAMS-D-13-00167.1

thank you for the link.

Yeah, very nice. Looks like a plate glass paradigm. Also, under ‘Geo-engineering’, what is safer, more cost effective, and more easily reversible than anthropogenic release of CO2?

==========

Judith,

Interesting post, thanks.

It may be worth emphasizing a bit more how uncertainty in forcing and temperature response has a far greater impact on the ‘fat tail’ of the distribution; that is, any reduction in uncertainty narrows the fat tail of high sensitivity far more than it narrows the ‘skinny tail’ of low sensitivity. The rather large reduction in the fat tail part of the distribution Nic showed by applying the lower Stevens range of aerosol effects to the Lewis&Curry approach is the perfect example of this.

One might be tempted to suggest that efforts to narrow the uncertainties in ocean heat uptake, aerosol influences, and ‘natural variability’ represent the most potential bang for the taxpayers’ buck…… Public policy will be automatically more appropriate if uncertainty in sensitivity is reduced.
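The asymmetry described in this comment, where reduced forcing uncertainty trims the high-sensitivity tail more than the low one, can be illustrated with a small Monte Carlo sketch in Python. It assumes sensitivity scales as F2x·ΔT/ΔF with a normally distributed forcing change; the distribution shape, the cutoff for near-zero forcing, and all numbers are illustrative assumptions, not values from the post or from the Lewis and Curry analysis.

```python
import random

F_2X = 3.71    # W/m^2, assumed forcing for doubled CO2
DELTA_T = 0.7  # K, placeholder observed warming


def percentile(sorted_values, fraction):
    """Nearest-rank percentile of an already sorted list."""
    index = int(round(fraction * (len(sorted_values) - 1)))
    return sorted_values[index]


def sensitivity_quantiles(forcing_mean, forcing_sd, n=50_000, seed=0):
    """Return the 5th, 50th and 95th percentiles of F_2X * DELTA_T / delta_f."""
    rng = random.Random(seed)
    samples = []
    while len(samples) < n:
        delta_f = rng.gauss(forcing_mean, forcing_sd)
        if delta_f > 0.2:  # ASSUMPTION: drop near-zero forcing draws
            samples.append(F_2X * DELTA_T / delta_f)
    samples.sort()
    return tuple(percentile(samples, q) for q in (0.05, 0.50, 0.95))


# Same central forcing estimate, with wide and then halved uncertainty.
wide = sensitivity_quantiles(forcing_mean=2.0, forcing_sd=0.5)
narrow = sensitivity_quantiles(forcing_mean=2.0, forcing_sd=0.25)

for label, (low, mid, high) in (("wide", wide), ("narrow", narrow)):
    print(f"{label:>6}: 5% = {low:.2f}  median = {mid:.2f}  95% = {high:.2f}")
```

Because the forcing change appears in the denominator, a symmetric uncertainty in forcing maps to a skewed uncertainty in sensitivity, so halving the forcing spread pulls the 95th percentile down by much more than it raises the 5th percentile.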

To what extent are we trying to find an exact figure for a changing parameter? Is there any reason why we should think sensitivity (certainly TCR, but arguably ECS as well) is a fixed value? I can think of a dozen real world reasons why sensitivity would change–and I know I keep asking this question, but it seems not to get answered… Help me Mr. (Ms.) Wizard!

Hello Thomas,

There is considerable published work that discusses exactly this subject (for example: Armour et al, http://journals.ametsoc.org/doi/abs/10.1175/JCLI-D-12-00544.1). You need to draw a distinction between temporal non-linearity (eg non-linear response of temperature over time to a step-change in forcing), which is for certain true, and non-linearity in the temperature domain (a change in sensitivity to forcing due to a temperature change). While there is probably fairly broad agreement that there has to be *some* change in sensitivity in the temperature domain, the extent of that change is not at all clear. Over small temperature changes (a couple of degrees) in average surface temperature, a reasonable first approximation is that sensitivity is nearly constant, but there is disagreement even here; some GCM’s show considerable (apparent) change in sensitivity with temperature, while others show relatively little change. Armour et al postulate that *apparent* non-linearity in the temperature domain is in fact due to differences in temporal response rates in different geographic regions…. and that in fact the response is very close to linear in temperature. Hope this helps. (FWIW, I did not find Armour et al very persuasive; the difference in regional response rates would have to be enormous to generate large, but only apparent, non-linearity in the temperature domain.)

I think Armour encapsulates the reason for the well known published short-term observation-based estimates of ECS likely being underestimated. It relates to the different response rates of various parts of the earth system. In short, much of the water vapor feedback is delayed by the ocean inertia. What you get first is the land warming faster, as it is now, and the ocean heat content building up instead of the water vapor.

Steve and Jim, thank you both. I have received similar replies in the past when I have posed this question–I’ll re-read Armour, but remain a bit frustrated by the lack of attention to this.

Seems fairly important…

Hello Thomas,

What is frustrating about this? The subject has received quite a lot of attention, at least in the published literature. It is a subject that is a bit esoteric, but lots of people do understand the importance. See for example http://rankexploits.com/musings/2013/observation-vs-model-bringing-heavy-armour-into-the-war/ for an in depth discussion of the Armour et al paper. Isaac Held also had a couple of posts on this subject (although he formulated the subject differently from Armour et al). The question is far from closed, and substantial disagreement, even between different climate models, likely means it will not be resolved any time soon. The frustration for me, if there is one, is that projected increases in apparent sensitivity in the temperature domain only become clear a very long time (up to centuries) after an increase in radiative forcing. This means meaningful empirical tests are probably out of reach for many decades or more. Perhaps there are ways of testing empirically more quickly, but I have not seen them.

Climate sensitivity does not inform policy. Climate sensitivity is one input to estimating costs and benefits of policy options. It is the costs and benefits of policy options, and the probability of success of the proposed policy in the real world that policy analysts need to know.

http://c3headlines.typepad.com/.a/6a010536b58035970c017ee9240faa970d-pi

Last one.

I could present data like this forever which show AGW theory time and time again does not conform to the data. The data says the theory is W R O N G.

Salvatore,

There is of course a great deal of evidence that GHG forcing (CO2, methane, etc) is not the only factor which influences Earth’s climate. The problem with looking at CO2 estimates over hundreds of millions of years is at least three-fold: 1) the position of the continents has undergone dramatic change during that period, changing weather patterns, average albedo, and ocean circulation, 2) the intensity of the sun has been gradually rising over the 500 million years in your graphic… by about 5%, or about 16 watts/M^2 at the top of the atmosphere (globally averaged, due to conversion of hydrogen to helium in the Sun), and 3) life on Earth has evolved over that time and impacted both the carbon cycle and surface albedo.

So it is not very informative to simply plot estimates of CO2 in the very distant past against estimates of past temperatures. There are just too many other confounding variables. Determining Earth’s sensitivity to GHG forcing is for sure very important, but the distant past is unlikely to help us much with that determination, since there are too many potential factors we are not certain of. This is why I find estimates of sensitivity based on glacial/interglacial differences wholly unconvincing…. just too many potential unknowns.

Simply correlating

the surface temperature index with the estimated GHG forcing yields:

http://climatewatcher.webs.com/TCR.png

1.6C ‘observed’ sensitivity.

Lucifer,

The devil is in the details. You have to look at a longer period to avoid potentially misleading influence of natural variability. You have to account for ocean heat uptake. You have to account for aerosol influence. You have to account for serial correlation when you estimate uncertainty. Many simple plots yield simple, superficially appealing results, but they aren’t very informative.

Someone just posted this link on twitter

http://link.springer.com/article/10.1134/S0001433814040239

a paper that discusses low sensitivity of the russian model

I think I’ve never heard so loud

The quiet message of a cloud.

And the deep blue sea,

Stirring mysteriously.

And water, you phaser,

You’ve given me vapor.

=================

Judith –

==> “I would place ‘medium confidence’ on Nic Lewis’ ranges of TCR… ”

How does your method for determining “confidence level” compensate for the criticisms that you have of how the IPCC determines confidence levels?

Expert judgment: my expertise versus the negotiated conclusion of the IPCC. Note, for AR5, I would have judged both TCR and ECS to be low/medium confidence. I argue here that Nic Lewis’ work raises TCR to ‘medium’.

Prof. Curry instructs: “Confidence levels relate to what you DON’T know, which is by definition not a statistical assessment.”

Little bewildered joshie stammers: “Perhaps, but I don’t know what difference that really makes.”

as an example, IPCC makes some ‘very likely’ statements, associated with medium confidence.

the ‘very likely’ relates to the statistical distributions from their models or obs or whatever, then confidence level (low, med, high) relates to whether or not they believe the statistics.

Confidence relates to how likely it is, after all that statistical analysis, that the conclusions will prove to be wrong or right.

Thanks for the explanation. Getting through the verbiage and structure is tough enough and then I kept running into those terms. This helps.

Don’t bother us with sigmas or other Greek letters. A confidence is simply a subjective measure of how confident you are. Consistent with the rest of climatology.

==> “A confidence is simply a subjective measure of how confident you are. ”

Ok. So Judith doesn’t base her confidence level on some kind of mathematical assessment of probabilities, but on a subjective assessment of how confident she feels, modulated by her subjective feelings about her degree of expertise, and her subjective assessment related to how directly her expertise qualifies her answers to the questions at hand.

Thanks.

It should probably be relabeled as a “comfort level”.

==> “It should probably be relabeled as a “comfort level””

Perhaps, but I don’t know what difference that really makes.

==>”Perhaps, but I don’t know what difference that really makes.”

Of course you don’t. And you don’t know enough to even make a WAG about how to determine confidence levels. Why you dogging Judith about this? Don’t you have anything better to do?

Confidence levels relate to what you DON’T know, which is by definition not a statistical assessment.

“Don’t you have anything better to do?”

Of course Josh wants to attack the woman for the terrible sin of making subjective judgments, but he can’t quite find a way to pull it off.

Joshie is getting dinged up pretty good, this morning. He’s gotta be sorry that Judith let him out of the moderation cage.

Joshua,

Curry’s level of expertise is not a “subjective feeling” trollish one.

Judith A. Curry is an American climatologist and former chair of the School of Earth and Atmospheric Sciences at the Georgia Institute of Technology.

You should, as always, write that down. Perhaps on the wall where you live underneath the bridge.

“..underneath the bridge”

David,

That gave me a good old fashioned belly laugh. Many thanks.

==> “Judith A. Curry is an American climatologist and former chair of the School of Earth and Atmospheric Sciences at the Georgia Institute of Technology.”

Another day, another climate “skeptic” appeal to authority.

J ignores that appeal to authority is sound, when authority is right.

Now call in the sophists; I haven’t had enough laughs yet today, and it’s been uproarious, already.

============

kim –

==> “J ignores that appeal to authority is sound, when authority is right.”

Not ignoring it at all. That’s my point, actually.

The problem is determining on whose authority to determine which authority is right. I know that you’re satisfied with your own authority. Hence, the problem.

Speaking as a casualty actuary, the uncertainty probabilities seem to have little meaning. We don’t know how much error there might be in the various temperature readings or in the adjusted temperature readings. We don’t know how valid any of the models might be. I understand that some parameters are based on judgment; we don’t know how uncertain these parameters are.

Uncertainty can be estimated just by looking at the pattern of past temperatures. That’s obviously crude, but I am not convinced that the sophisticated estimates of uncertainty are superior.

Here is my calculation: According to the theory, a doubling of the CO2 concentration will result in an increase in the power carried by the downwelling long wave infrared radiation (DWLWIR), up from approximately 346 W/m^2 (for simplicity I am rounding to the unit place and suppressing the uncertainty) by 4 W/m^2 (2), and the Earth surface will warm until the sum of the upwelling long wave infrared radiation (UWLWIR), the latent heating of the troposphere (LH), and the sensible heating of the troposphere (SH) has increased by 4 W/m^2. How much surface warming might that be? I illustrate by calculating the increase due to a 0.5C increase in surface temperature.

UWLWIR is proportional to T^4, (2) with emissivity constant, so the increase in UWLWIR, assuming that the global mean surface temperature is equal to 288K, works out to delta U = (288.5/288)^4×398 – 398 = 2.8 W/m^2.

LH results from the hydrologic cycle, cloud formation and precipitation. The review by O’Gorman et al(3) reports that a 1C increase in global mean temperature will result in a 2% – 7% increase in the precipitation rate; the lower values are results of GCM output, and the upper values are results from regressing estimated annual rainfalls on annual mean temperatures. Using the value 4%, a 0.5C increase in global mean temperature will produce an increase of 2% of 88 W/m^2 = 1.8 W/m^2.

The increase in SH can be estimated from a result reported by Romps et al(4). Their main result was an increase in the cloud-to-ground lightning strike rate by 12% per 1C increase in mean temperature over the US east of the Rocky Mountains. The most important result for this presentation was the estimate of a 12% increase in the power of the process that generated lightning, and that estimate was not confined to the US east of the Rockies. Up to a constant of proportionality, the power of the process generating the lightning was calculated as CAPExPR, where CAPE is “convective available potential energy” (5) and PR was precipitation rate. Precipitation rate was used in the calculation not because of the latent energy in the water vapor, but because the precipitation rate was treated as proportional to the rate of transfer of air (with water vapor mixed in) from the surface to the upper cloud level; and the fraction of each kilogram of air that was water vapor was treated as constant. That result depended on the modeled lapse rate and difference between the interior and exterior of the cumulus column. Assuming that their result is widely accurate wherever those can be modeled, and PR rate is proportional to the rate of ascension of air, the increase of SH due to a 0.5C increase of surface mean temperature should be approximately 6% of 24 W/m^2 = 1.4 W/m^2.

The changes in SH, LH, and UWLWIR sum to approximately 6 W/m^2 (with considerable uncertainty), so the sensitivity of the Earth surface temperature is approximately (4/6)x0.5C = 0.33C (again with considerable uncertainty). This result is lower than most other estimates, and it is approximate and conjectural besides, but the computation is straightforward and based on published research. An obvious omission is a potential increase in the DWLWIR from a warmer upper troposphere (the feedback from the feedback). I hope that many much better estimates can be computed from the actual energy flows in the coming years.
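The arithmetic in the calculation above can be checked with a short script. This is only a sketch that reproduces the rounded numbers quoted in the comment (398, 88 and 24 W/m^2 for the surface flows, 4 W/m^2 for the doubled-CO2 increase in DWLWIR, 4% and 12% per 1C for the LH and SH responses). The variable and function names are illustrative, and the script inherits the comment's linear scaling from a 0.5C trial warming.

```python
# Rounded surface energy flows quoted in the comment, in W/m^2.
UWLWIR = 398.0   # upwelling longwave infrared
LH = 88.0        # latent heat flux
SH = 24.0        # sensible heat flux
FORCING = 4.0    # increase in DWLWIR assumed for doubled CO2

T_SURFACE = 288.0  # global mean surface temperature, K
LH_RATE = 0.04     # fractional LH increase per 1C (picked from the 2%-7% range)
SH_RATE = 0.12     # fractional SH increase per 1C (from the CAPE x PR result)


def flux_changes(delta_t):
    """Return the (UWLWIR, LH, SH) increases for a warming of delta_t."""
    d_uwlwir = UWLWIR * (((T_SURFACE + delta_t) / T_SURFACE) ** 4 - 1.0)
    d_lh = LH * LH_RATE * delta_t
    d_sh = SH * SH_RATE * delta_t
    return d_uwlwir, d_lh, d_sh


def implied_warming(delta_t=0.5, forcing=FORCING):
    """Scale the trial warming linearly so the flux increase matches the forcing."""
    return forcing / sum(flux_changes(delta_t)) * delta_t


d_u, d_lh, d_sh = flux_changes(0.5)
print(f"dUWLWIR={d_u:.2f}  dLH={d_lh:.2f}  dSH={d_sh:.2f}  W/m^2")
print(f"implied warming for 4 W/m^2: {implied_warming():.2f} C")
```

Without the intermediate rounding the three increases sum to slightly under 6 W/m^2, and the implied warming still comes out at about 0.33C. Changing LH_RATE or SH_RATE shows how strongly the result depends on those two assumed percentages.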

SH is “sensible heat” and LH is “latent heat”. The Romps et al result is only suggestive, but they compute an increase in both CAPE and upward air flow rate (using precipitation rate as a proxy and assuming a constant fraction of water vapor in air) with an increase in surface temperature; they do not specifically relate that energy flow calculation to one of the energy flows in the Stephens et al and Trenberth et al flow diagrams. A 1C increase in water surface temp produces a 7% increase in water vapor pressure (at the temperature of water in the tropics), which may be why the estimate for increased rainfall in the tropics, reported in the survey by O’Gorman, is 7%. The lower estimates, like 2%, are from GCMs, which are known to be running hot.
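The vapor pressure figure can be checked against a standard approximation for saturation vapor pressure over water. The sketch below uses the Bolton (1980) form of the Magnus formula, which is a choice made for this illustration rather than anything cited in the comment; the 27C value standing in for tropical water is likewise an assumption.

```python
import math


def saturation_vapor_pressure_hpa(t_celsius):
    """Bolton (1980) approximation over liquid water, in hPa."""
    return 6.112 * math.exp(17.67 * t_celsius / (t_celsius + 243.5))


def fractional_increase_per_degree(t_celsius):
    """Fractional rise in saturation vapor pressure for a 1C warming."""
    e0 = saturation_vapor_pressure_hpa(t_celsius)
    e1 = saturation_vapor_pressure_hpa(t_celsius + 1.0)
    return e1 / e0 - 1.0


for t in (0.0, 15.0, 27.0):  # 27C is an assumed tropical water temperature
    pct = 100.0 * fractional_increase_per_degree(t)
    print(f"{t:4.1f} C: {pct:.1f}% per 1C")
```

The rate comes out at several percent per degree and declines slowly as the water warms, so the exact figure depends on the surface temperature assumed.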

I have shown this to about 20 people now, climate scientists and statisticians. I have posted parts of the computation at ClimateEtc, WUWT, and RealClimate over the past few weeks. I have not assumed that “climate sensitivity” is constant, but have worked with estimates of how the energy flows are now, and the current mean global temperature. I also have not assumed an equilibrium, but only the possibility of an approximate steady-state.

quick notes: I have already been alerted that, because all of the other estimates are higher, this can’t possibly be correct; I am a newcomer; equilibrium-based results are higher; if this were a good idea someone else would have written it already; I have omitted consideration of clouds; I have not accounted for warming in the past, such as why the mean temperature of the Earth is 288K instead of 255K.

mattstat, I am not sure you have seen this though I have posted the link a number of times.

http://www.ilovemycarbondioxide.com/archives/Kimoto%20paper01.pdf

very similar approach but it assumes “all things remaining equal”, i.e. consistent cloud and convective feedback etc.

He used K&T though not Stephens et al. so some adjustments could be made. The interesting thing is that he assumes energy is fungible, not a bad thing, but it implies that Ts isn’t fungible. In other words, GMST isn’t all that useful.

From the link to the Sherwood et al paper,

“The second assumption is that all responses are uniquely determined by the scalar dT regardless of how the temperature change is brought about, a situation that may be called fungibility. Complete fungibility requires either that temperature changes always occur with the same spatial and seasonal pattern, or that different patterns produce the same dR=dT where dR is the change in global- and annual-mean radiation balance. However this will not be the case since different forcings will generally produce different warming patterns.”

http://journals.ametsoc.org/doi/pdf/10.1175/BAMS-D-13-00167.1

Climate science is getting a bit of a retro look it seems to me.

CaptDallas2 0.8 +/- 0.2 http://www.ilovemycarbondioxide.com/archives/Kimoto%20paper01.pdf

That was new to me. Thank you.

The interesting thing is that he assumes energy is fungible, not a bad thing, but it implies that Ts isn’t fungible. In other words, GMST isn’t all that useful.

I agree, but we have to start with what we have now, imo, and there is an audience that takes GMST seriously.

Matthew –

1. Lu and Cai (2009) conclude that there is a reduction in sensible heat flux with warming, and purport to show that this is consistent with energetic constraints on tropospheric energy transport and the reduction in convective mass flux found by other researchers. What aspect of their analysis do you think is in error? (The fact that they use model output should not be an issue as long as one focuses on internal consistency.)

2. Assuming that…PR rate is proportional to the rate of ascension of air

You continue to assume a simple proportionality between the change in latent heat transport and the change in sensible heat transport. Such is not the case; see the discussion in Lu and Cai following their eqn. 4b.

2. Would you care to share any of the reactions that you have received from the scientists you have contacted – perhaps those you regard as most constructive?

Pat Cassen: (The fact that they use model output should not be an issue as long as one focuses on internal consistency.)

I don’t think it is a good idea to ignore the fact that the models are running hot. If the result you quote from Lu and Cai is accurate, then the result from Romps et al can not be accurate. I think that people working from different assumptions (and subsets of model space, so to speak) get different results. Much more follows from the equilibrium assumption than that input and output are always in balance everywhere — indeed, that is steady-state, and the equilibrium assumption is that flows have stopped.

2. Would you care to share any of the reactions that you have received from the scientists you have contacted – perhaps those you regard as most constructive?

I put it to a statistician that half of me feels like I have a really good approach, and half of me feels like I am a crank. He assured me that I am not a crank. Another scientist averred that this was a good approach.

Pat Cassen: You continue to assume a simple proportionality between the change in latent heat transport and the change in sensible heat transport.

Not so.

Romps et al (actually, their references) assume that the fraction of each kg of air that is water vapor is constant. That way the upward air flow rate can be approximated by the precipitation rate. Latent heat change is not included in their calculation.

For latent heat change I use only the survey of precipitation rate change (O’Gorman et al) and the evapotranspirative heat flow rate (Stephens et al.)

Matthew –

If the result you quote from Lu and Cai is accurate, then the result from Romps et al can not be accurate.

We disagree. The change in energy that Romps et al. calculate for lightning is orders of magnitude smaller than the energies considered in Lu and Cai. That is why the lightning energy does not even appear in the equations of Lu and Cai. The two analyses are not contradictory.

…half of me feels like I am a crank. He assured me that I am not a crank.

I agree; you are not a crank. I applaud your effort to understand the issues at hand. But you are mistaken in the notion that Romps et al. constrains changes in net energy balance with warming.

Your assumptions about the relations between upward air flow rate, precipitation rate, and lightning production are exactly equivalent to assuming proportionality between changes in sensible heat transport and changes in lightning production; that is why you get 6% change in sensible heat per .5 degree C.

Convince me by writing down an equation. Understand Lu and Cai’s eqns. 3 and 4. What would they look like if you added in Romps’ lightning generation term? As Isaac Held told you, “There is no requirement for an increase in the energy transport comparable to the increase in lightning.”

Gaia’s searching under the thunderbolts for her missing thermodynamics. No, silly, that’s where she lost them.

=======================

Pat Cassen:

Lu and Cai (2009) conclude that there is a reduction in sensible heat flux with warming, and purport to show that this is consistent with energetic constraints on tropospheric energy transport and the reduction in convective mass flux found by other researchers.

I have difficulty with this. Most of the radiative forcing is realized in the lower levels of the troposphere. RF from 2x CO2 would heat the lower levels of the troposphere more than the upper levels. Even if mass exchange remained constant, an increase in sensible heat flux would appear likely.

Warming and/or humidifying the troposphere further increases the difference between upper and lower troposphere, imposing cooling on the upper troposphere.

Lucifer –

I have difficulty with this….Even if mass exchange remained constant, an increase in sensible heat flux would appear likely.

Yes, the result of Lu and Cai is not particularly intuitive. But the reduction in sensible heat flux they find is closely related to an increase of stability of the planetary boundary layer, which diminishes the turbulent transfer coefficient. Whether or not their conclusion is robust, they clearly demonstrate that the relative change in sensible heat flux with warming is not necessarily the same as the relative change in latent heat flux (much less the rate of lightning production), which assumption (I believe) drives Matthew R Marler’s results.

Read the paper. The issues are set out quite clearly by their energy equations. One can argue about the numerical model results, but the inherent constraints, although not necessarily obvious, are well-exposed.

Pat Cassen: The change in energy that Romps et al. calculate for lightning is orders of magnitude smaller than the energies considered in Lu and Cai.

Romps et al calculated the change in energy transferred by the whole process that generates the lightning (moist thermal, cumulus cloud, etc), and got the lightning discharge rate as a tiny regression coefficient on the whole energy. To me, the model of the lightning discharge rate was the least interesting part of the paper.

Pat Cassen: Your assumptions about the relations between upward air flow rate, precipitation rate, and lightning production are exactly equivalent to assuming proportionality between changes in sensible heat transport and changes in lightning production; that is why you get 6% change in sensible heat per .5 degree C.

Yes, sensible heat. Not latent heat.

You had written this: You continue to assume a simple proportionality between the change in latent heat transport and the change in sensible heat transport.

Nowhere do I assume a constant proportion between the latent heat change and the sensible heat change. Romps et al report a 10% change in CAPE and a 2% change in precipitation rate, but all I take away from that is that the upward flow rate change may be well represented by their precipitation rate calculation. Obviously, better estimates of actual flow rates and flow rate changes would be very informative.

matthewrmarler – thank you for the info. Using precipitation as a proxy for air flow rate is interesting. I suppose precipitation is also a proxy for a good part of the heat released into the upper atmosphere, making it available for an exit to space.

Pat Cassen: “There is no requirement for an increase in the energy transport comparable to the increase in lightning.”

Romps et al have the lightning rate linear in the CAPE*PR, with the constant of proportionality estimated by linear regression with an R^2 of appx 0.7. Despite the lack of a requirement, that is how it worked out.

Earlier, I had thought that the 12% increase in CAPE*PR implied a 12% increase in the latent heat flux. I think that it is a better approximation to the change in the sensible heat flux. It could approximate something like SH + 0.25LH. A really good estimate of the change in SH would add a lot to this discussion.

Mr. Cassen,

I’m really glad you are here writing about this. If I could handcuff you to this blog I would.

Pat Cassen: Lu and Cai (2009) conclude that there is a reduction in sensible heat flux with warming, and purport to show that this is consistent with energetic constraints on tropospheric energy transport and the reduction in convective mass flux found by other researchers. What aspect of their analysis do you think is in error? (The fact that they use model output should not be an issue as long as one focuses on internal consistency.)

Second point first, though I repeat myself: their models are running hot, and therefore should not be considered better, more reliable, or more accurate than the calculations that I have proposed. They may in the end be better, but right now they are already disconfirmed by the data.

On to the first. “Equilibrium” has strong consequences. Since the assumption of equilibrium is clearly counterfactual, there is no reason to believe the consequences (unless the model results have been confirmed to be sufficiently accurate for the purposes.) At present there is a radiative imbalance at the surface, the imbalance made up by the non-radiative cooling. Suppose in your modeling you treat this as an equilibrium, assume that adding CO2 adds downwelling LWIR, and in addition assume that the exact same radiative imbalance is maintained. In order for that to be true, the upward flow of SH or LH must diminish. The result that you cite is driven by the counterfactual equilibrium assumption. Romps et al (also in part model-derived) show that the upflow of CAPE*PR increases. O’Gorman’s review shows that rainfall, hence the upward flow of LH, increases. The two modeling approaches make clearly distinct predictions about what will happen with an increased surface temperature. It looks to me like the evidence to date disfavors Lu and Cai, but obviously more evidence should be acquired.

Pat Cassen: Understand Lu and Cai’s eqns. 3 and 4. What would they look like if you added in Romps’ lightning generation term?

I think of that as a good assignment for the future. My goal, should I take it on, would be to bring the model results more in line with the global mean temperature trend; increasing the assumed change in non-radiative flow as a consequence of surface heating (i.e. making the changes positive) would be the first obvious change to make in the model.

thomaswfuller2: Mr. Cassen,

I’m really glad you are here writing about this.

Me, too. Whether I am close to the truth or not (I am alternately credent and dubious), his comments have been illuminating.

Pat Cassen: 2. Would you care to share any of the reactions that you have received from the scientists you have contacted – perhaps those you regard as most constructive?

The overwhelming response has been disdain: only 3 people have responded at all. About what anyone reasonable would expect in response to unsolicited email from an unknown.

“..what we can infer about the upper bound of climate sensitivity, and structural uncertainty in how we even approach this problem.”

Because of land temperatures being so dependent on precipitation effects ENSO and AMO modes, I would look at long term sea surface temperature trends.

“Well the list of participants and the talk titles and suggested post AR5 papers all omit what I regard to be the elephant in the room: the confounding factor of natural internal variability. Lewis and Curry (2014) gave a nod to this by choosing baseline period to be compatible in terms of the AMO index.”

I do not agree that it is internal, but interestingly 65-year SST trends from cold AMO years 1911-1976, and warm AMO years from 1945-2010, do not show a significant increase in warming rates for the latter period:

http://www.woodfortrees.org/plot/hadsst3gl/from:1900/plot/hadsst3gl/from:1911/to:1976/trend/plot/hadsst3gl/from:1945/to:2010/trend

Effectively around +0.57°C per century.

Of course more CO2 in the atmosphere if everything else is equal would cause a temperature increase which is the focus of present day climate science.

This is unfortunate because the real focus should be twofold: first, whether CO2 responds to the climate rather than the other way around; and second, how a trace gas with a trace increase could somehow not only direct the vast climate system into another regime, but do so as if it (CO2) operates in a vacuum, in that there is no other forcing or, if there is, it just pales next to this trace gas with a trace increase when it comes to exerting forcing upon the climate.

What makes this more amazing is this argument keeps persisting despite data which does not support AGW theory’s basic assumptions, such as the tropical hot spot in the troposphere, more El Nino conditions in the tropics, Antarctic Sea Ice decreasing, a lessening of outgoing long wave radiation from earth to space, the basic atmospheric circulation becoming more meridional not more zonal, and failure of global temperatures to continue their rise post 1998. There are more; I just highlighted the more notable blunders.

As I write this the absurdity of the direction climate science has taken, really becomes apparent.

Salvatore,

There are several factual errors in your comment.

1) There is overwhelming evidence that the recent CO2 (and other GHG’s) increase in the atmosphere is from human activities, including burning fossil fuels, land use changes, and concrete production. If you really think the rise in atmospheric CO2 is caused by recent warming, then you have been listening to the nonsense at WUWT for far too long.

2) If you think that an increase in trace GHG’s in the atmosphere can’t possibly have much influence, then you do not understand the basic science of radiative heat transfer.

3) The “lack of a hot spot” is likely an indication of either inaccurate measurements, inaccurate model treatment of boundary layers and/or moist convection, or a combination of these things. This has nothing to do with the accuracy of the moist adiabat, which is pretty easily calculated from thermodynamics.

The rest of your comment is impossible for me to really understand.

In any case, it is clear that unless you learn a great deal that you do not now know, engaging you any further in blog comments would be a waste of your time and mine. As my friends in Brasil would say: a Deus.

stevefitzpatrick: This has nothing to do with the accuracy of the moist adiabat, which is pretty easily calculated from thermodynamics.

What is easily calculated from thermodynamics is the “asymptotic” or “equilibrium” approximation. The actual moist adiabatic lapse rates for the same surface temperature over ocean, rainforest, savannah, and desert at any particular time of day are not known with much accuracy. That is, neither the vertical temperature profile nor the vertical specific humidity profile is known.

stevefitzpatrick: 3) The “lack of a hot spot” is likely an indication of either inaccurate measurements, inaccurate model treatment of boundary layers and/or moist convection, or a combination of these things.

Yeh, that about covers it: either errorfull data or errorfull models (inclusive or). Either way, what was confidently predicted has not happened.

“stevefitzpatrick | March 23, 2015 at 1:36 pm |

1) There is overwhelming evidence that the recent CO2 (and other GHG’s) increase in the atmosphere is from human activities, including burning fossil fuels, land use changes, and concrete production.

3) The “lack of a hot spot” is likely an indication of either inaccurate measurements, inaccurate model treatment of boundary layers and/or moist convection, or a combination of these things.”

1) What overwhelming evidence are you referring to?

3) If you believe that the “lack of a hot spot” is from inaccurate measurements why do you believe the measurements are accurate when they support your beliefs in AGW? Same question regarding “model treatment of…”

3) The “lack of a hot spot” is likely an indication of either inaccurate measurements, inaccurate model treatment of boundary layers and/or moist convection, or a combination of these things.

I have prepared and launched a few radio-sondes in my day, including the old-school and the improved Vaisala systems. And I’ve written code to track satellites ( porting the Simplified General Perturbation code from NORAD ). These experiences have informed my understanding of some of the problems and the technical reports detail many others. Yes, there are problems with all data sets.

However, look closely again at the temperature trends from 1979 through the present ( below ) for the GISS model, the MSU and Radiosonde data. Ascribing the missing hot spot to temperature error is on par with claiming there is no warming because the surface temperature data sets are in error.

And yet, even learned individuals that I have respect for ( Isaac Held ) are hesitant to accept the data. The seductive lure of our preconceived ideas and hypotheses, is all pervasive.

http://climatewatcher.webs.com/HotSpot.png

So how are we to interpret our current understanding (and lack of understanding) of climate sensitivity in terms of policy?

We’re acknowledging the fact that we live in a relatively rich society that has the means to pay people to engage in esoteric thinking. Poorer cultures don’t have the luxury. Even so, even here, we pay a price: the billion dollars a day is coming out of the pockets of the productive and in return, little or no utility is received for the money spent. Like the lawyer tax that eats into the earnings of the productive, we have a climate tax that lowers the net present wealth of the country, robs opportunity and impoverishes millions. Global warming has become a vanity science.

As I said, I Now May Concede Maturity 4 models.

Now there’s a high Five, follow the link to the cloud kingdom.

================

Oops, didn’t think that would post.

===================

Steve, I disagree because CO2 shows no correlation to global temperatures no matter what length of time is used. This past century is a great example, where the position of the continents has not changed.

As for your solar argument, the fact that the sun’s output has been steadily increasing over the past many million years has absolutely nothing to do with all of the dramatic climatic changes that have taken place during the Pleistocene Epoch, nor, for that matter, do the ocean/land arrangements, which have been essentially the same throughout the Pleistocene.

So what you are saying Steve holds no water when it comes to climate changes from the Pleistocene to present day, which do not follow the co2 trend, it is the other way around according to all of the data.

What is responsible for the Ice Ages ? I will send you a brief summary on my next post.

I don’t think an ECS close to 0 is unlikely and I don’t think a negative ECS is entirely implausible.

In fact, I doubt an ECS of 2.5 is more likely than an ECS of 0.

I doubt an ECS above 2.5 is more likely than ECS very near 0.

Steve, here is a very brief summary of my opinion on climatic changes in the past. It is much more extensive than this but it is off topic so I kept it short. Hopefully however you will see where I am coming from.

Data leads me to believe that a climatic variable force which changes often which is superimposed upon the climate trend has to be at play in the changing climatic scheme of things. The most likely candidate for that climatic variable force that comes to mind is solar variability (because I can think of no other force that can change or reverse in a different trend often enough, and quick enough to account for the historical climatic record) and the primary and secondary effects associated with this solar variability which I feel are a significant player in glacial/inter-glacial cycles, counter climatic trends when taken into consideration with these factors which are , land/ocean arrangements , mean land elevation ,mean magnetic field strength of the earth(magnetic excursions), the mean state of the climate (average global temperature gradient equator to pole), the initial state of the earth’s climate(how close to interglacial-glacial threshold condition it is/ average global temperature) the state of random terrestrial(violent volcanic eruption, or a random atmospheric circulation/oceanic pattern that feeds upon itself possibly) /extra terrestrial events (super-nova in vicinity of earth or a random impact) along with Milankovitch Cycles.

What I think happens is land /ocean arrangements, mean land elevation, mean magnetic field strength of the earth, the mean state of the climate, the initial state of the climate, and Milankovitch Cycles, keep the climate of the earth moving in a general trend toward either cooling or warming on a very loose cyclic or semi cyclic beat but get consistently interrupted by solar variability and the associated primary and secondary effects associated with this solar variability, and on occasion from random terrestrial/extra terrestrial events, which brings about at times counter trends in the climate of the earth within the overall trend.

A Deus Salvatore, nada mas.

Faith ‘n Begorrah, m’lad.

A question I would like to see answered is what would convince them to lower sensitivity estimates?

Anything.at.all?

Surely they should know the answer to this question. If they don’t, then I have no idea how they know that they should keep the estimates where they are today.

I’m not accusing anyone here of outright bias, but one way to give people confidence in your unbiased assessments is to set the rules in place BEFORE the future model assessments take place. It is easy to imagine how one can manipulate ad-hoc hindsight assessments.

I would have more confidence in these assessments if the rules were clear and concise on how these parameters would be interpreted going forward. They don’t have to be set in stone, it can be a living document. If they want to change the assessment methods and there is good justification for it, fine.

What if we have an extended solar minimum and temperatures actually decline? Is that considered an absurdity and completely out of the range of possibility?

It’s not an impossibility that we could have an extended solar minimum, but it is unlikely that it could lead to a temperature decline if we continue to emit at the rate we currently are, or faster. The general view is that even if there were an extended solar minimum, the effect would be relatively small and it would probably only delay a temperature rise by a matter of years (5 – 10). You can read Section 9 of this paper.

Depends on the meaning of ‘extended’ and the value of sensitivity.

Remember, the higher the sensitivity, the colder it would now be without man’s effect. You’d better pray to Gaia that the recovery from the coldest depths of the Holocene was primarily natural, ‘cuz if man’s done the heavy lifting of warming, we can’t keep it up.

=============

Not really. Even if we moved into a Maunder minimum-like state, the solar forcing would reduce by a few tenths of a Watt per square metre. If we continue increasing our emissions, we can increase anthropogenic forcings by 2W/m^2 by the mid-2100s. Therefore even if the solar minimum were very extended and even if climate sensitivity were small, we would still – on average – warm.
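The arithmetic behind this comment can be sketched in a few lines. This is only a back-of-envelope illustration, assuming the usual linear scaling of warming with forcing and a forcing of about 3.7 W/m^2 per CO2 doubling (that value, the -0.3 W/m^2 stand-in for "a few tenths", and the sensitivity values are assumptions, not figures from the comment):

```python
# Back-of-envelope check: does a Maunder-like solar drop offset
# the anthropogenic forcing increase quoted in the comment?
F_2X = 3.7  # W/m^2 per CO2 doubling (assumed value)

def warming_from_forcing(delta_forcing, sensitivity, f_2x=F_2X):
    """Warming in deg C for a forcing change, scaled linearly from the
    warming per CO2 doubling (`sensitivity`, in deg C)."""
    return sensitivity * delta_forcing / f_2x

solar_change = -0.3   # W/m^2, stand-in for "a few tenths" (assumed)
anthro_change = 2.0   # W/m^2, the figure quoted in the comment
net_forcing = solar_change + anthro_change

for sensitivity in (1.0, 1.5, 3.0):  # deg C per doubling, illustrative only
    dt = warming_from_forcing(net_forcing, sensitivity)
    print(f"sensitivity {sensitivity:.1f} C -> net warming {dt:+.2f} C")
```

Under these assumptions the net forcing stays positive, so the sign of the result does not depend on the sensitivity chosen; only its size does.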

Well, you only admit TSI, and I’ll admit other solar forcings aren’t shown. But if not, then the oceans rise higher in credibility as the cause of millennial scale changes. And heh, clouds, capable of unexpected behaviour at all time scales.

But you must also admit, the low sensitivity you include in your possibilities, would imply that man’s effect will be beneficial.

Also, what sensitivity, whether transient or equilibrated, would you consider to be net harmful to man and the biome, and why? What, pray tell, is the cost/benefit ratio of all past warmings?

========

well the issue is indirect solar effects; some of which are unknown and others not included in climate models.

Well, except that there is little evidence (if any) that these have some kind of significant impact on our climate.

ATTP,

https://lh3.googleusercontent.com/-5J1eslaL-VU/VRBriebSFEI/AAAAAAAANRI/-cWq_5ehbss/w877-h477-no/stratospheric%2Bcooling%2Band%2BPMOD%2Bsolar.png

“well the issue is indirect solar effects; some of which are unknown and others not included in climate models.

Well, except that there is little evidence (if any) that these have some kind of significant impact on our climate.”

Try not to be too surprised should that thought change. ENSO and the stratosphere have more correlation with solar variability than is normally mentioned on blogs. The Brewer-Dobson circulation, Sudden Stratospheric Warming events and Arctic winter warming events are all coupled with solar and ENSO to a degree. When solar has a better correlation with stratospheric cooling than CO2 equivalent forcing, plus you have a missing tropical troposphere hot spot, there are some substantial holes in the conventional wisdom.

I believe these are starting to be called adjustments instead of feedback because they go a bit beyond the standard forcing/feedback model based on Ts.

And Then There’s Biology. Hey, what’s the albedo change for the greening? I’d guess that would track more reliably with rising CO2 than any temperature guessed up by the latest zillion dollar machine.

===============

kim commented on

While albedo matters during the day, grass/vegetation also has a very different cooling profile at night compared to dirt and/or concrete.

The IR temp of grass in the morning prior to sunrise is 10-20F colder than dirt and concrete.

BTW, where exactly does the LW IR that CO2 returns to the surface actually come from?

Capt,

Why would I be surprised by that? I’m certainly not suggesting that solar variability does not influence our climate, I’m suggesting that there isn’t much evidence for a significant sensitivity to other solar effects.

What’s stark and blue and red all over?

=============================

Captain Dallas,

May I suggest a 2 year or 3 year centered moving average on both those trends?

Are you still in the Keys?

ATTP, “I’m suggesting that there isn’t much evidence for a significant sensitivity to other solar effects.”

You might want to expand your reading to include the impact of the Brewer-Dobson circulation, ENSO and SSW events on climate. There are decadal and longer trends that are just barely noticeable with the short satellite era data. Susan Solomon listed tropical ozone and water vapor, transported poleward by the B-D circulation, as part of the cause of the pause, and there are growing paleo references to ENSO and solar impact on climate. Since tropical ozone and water vapor transport are estimated to maintain the poles at temperatures on the order of 50 degrees warmer than otherwise, this B-D transport would regulate the polar heat sinks. A small change in forcing in the tropics can have a much larger impact at the poles. That really shouldn’t be that hard to understand, since a 1 C degree change in the tropics has about 7 Wm-2 influence versus a 1 C degree change in the polar winter having about 1.5 Wm-2 influence. That makes GMST not as useful as one might think.

I believe the new Sherwood and Stevens et al. paper mentions something about the fungibility of dTs.

Capt,

Again, why do you think I would dispute that?

SteveF, “Captain Dallas,

May I suggest a 2 year or 3 year centered moving average on both those trends?

Are you still in the Keys?”

Yep, still fishing in the Keys. I normally use a 27 month moving average to match the QBO and there is a 27 month lag in tropical SST response, but that is generally a manipulation too far for blogs. I was also too lazy to update the PMOD :(
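For readers who want to try the smoothing discussed here, a minimal sketch of a centered moving average follows. The function name, the synthetic monthly series, and the choice to leave the edges unfilled are all assumptions made for illustration; this is not the code behind the chart linked above.

```python
import math

def centered_moving_average(values, window=27):
    """Centered moving average over an odd `window` of samples.

    Positions without a full window on both sides are returned as None.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    smoothed = [None] * len(values)
    for i in range(half, len(values) - half):
        smoothed[i] = sum(values[i - half:i + half + 1]) / window
    return smoothed

# Synthetic monthly data: a slow trend plus a 27-month oscillation
# (purely hypothetical numbers, chosen to mimic a QBO-like period).
months = range(120)
series = [0.01 * m + 0.3 * math.sin(2 * math.pi * m / 27) for m in months]
smoothed = centered_moving_average(series, window=27)
print(smoothed[40])  # close to the underlying trend value 0.01 * 40
```

A 27-month window averages over one full cycle of a 27-month oscillation, so that cycle cancels and the slower trend is what remains. A 2 or 3 year window would need an odd number of months (for example 25 or 37) to stay centered on a single month.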

ATTP, the reason I said that is that there is growing evidence and you said there is little if any evidence. “Well, except that there is little evidence (if any) that these have some kind of significant impact on our climate.”

ATTP, I’d be very interested in that paper from Springer. My tablet couldn’t open the link. I’d appreciate it if you could give the full address. Otherwise I’ll try to locate it from what I have.

Try this. Should be open access.

Bottom up well explored, top down not so much.

==============

ATTP, Thanks much I’ll read carefully :-)

The spectrum changes far more than TSI. I pointed this out on your blog and you deleted it. Maybe you better scurry on back there now, otherwise people here might expect you to address the point. Presumably you know enough physics to realize that different wavelengths have different absorption and reflection characteristics in gases, liquids, and solids. I have to ask you, Rice, because you don’t come across as the sharpest tool in the shed, if you get my drift.

http://www2.mps.mpg.de/projects/sun-climate/resu_body.html

http://www2.mps.mpg.de/projects/sun-climate/image/Rel_contri_col.png

Over the past nine thousand years the Milankovitch cycles have removed about 40 watts per square meter from the north, above 60 degrees, and sent it to the south, below 60 degrees, and it did not change the ice core temperatures in the north or in the south by a measurable amount. The recent cycles of warm and cold periods are just like they were. The sun has been through multiple cycles of a little warmer and colder and that did not make a difference either. Earth temperature is regulated using clouds and rain and snow. The set point for the thermostats is the temperature at which polar oceans freeze and thaw.

popesclimatetheory commented

Might I be so bold as to suggest that the rest of water’s state changes matter as well.

Boiling point, melting point, vapor pressure, heat capacity and entropy.

This applies over the tropical oceans, limiting surface temps to ~31C; at night, as relative humidity limits radiative cooling; with clouds acting as a radiative blanket; and with cooling rain (and where does that heat go?). It also applies to ocean warm spots that feed evaporated water over land masses.

Water is what’s controlling the fundamental profile of earth’s temps.

All the water makes a difference.

The Set Point Temperature at which Polar Oceans freeze and thaw is the most important. That does change the area of water that is exposed to the atmosphere rapidly in response to whatever causes warming or cooling. That does change clouds and rain and snow immediately, as needed to maintain the temperature. Ice builds up and advances and melts and retreats slowly and acts as a huge capacitor, inductor, voltage system to smooth out the cycles. http://popesclimatetheory.com/page50.html

popesclimatetheory commented

I think the tropics are the boiling kettle, the source of the warm that spreads over much of the land, and the poles are the cold return and cooling regulation side.

We’re bound by both ends. CO2 might tweak the profile between those two bounds, but I suspect it’s completely possible that it doesn’t alter those ends. The state change in water requires far more energy than a small increase in CO2 can add to the system, and there is a lot of water in the atmosphere to work with.

Steve, let us agree to disagree.

Steve, any time, any place, I would debate you and win.

AGW enthusiasts are coming out in full force today. The blind leading the blind.

http://www.c3headlines.com/2014/03/those-stubborn-facts-chinese-scientists-confirm-that-medieval-period-warmer-that-solar-impact-is-hug.html

More data supporting solar activity as the main contributor to climate change.

Salvatore del Prete: http://www.c3headlines.com/2014/03/those-stubborn-facts-chinese-scientists-confirm-that-medieval-period-warmer-that-solar-impact-is-hug.html

Thank you for the link.

First came the discovery that CO2 change has no significant effect on average global temperature and therefore climate sensitivity is not significantly different from zero. http://agwunveiled.blogspot.com

Next came the realization that a forcing (such as some consider CO2 to be), or its anomaly, in Joules/sec/m/m must exist for a duration to change energy content, Joules/m/m. Thus the time-integral of the forcing, or the forcing anomaly, equals the energy change. (Energy content change divided by effective thermal capacitance = temperature change). This, using accepted assessments of paleo CO2 levels, proves that CO2 change has no significant effect on climate.

“So how are we to interpret our current understanding (and lack of understanding) of climate sensitivity in terms of policy? ”

Critical decisions with potential for severe adverse consequences should not be made on the basis of highly uncertain metrics. Policy decisions, by law, are focused only on the next 300 years of cost/benefit analyses. TCR should be just as good for 300 year forecasts of AGW as the much more uncertain ECS. Moreover, much of the uncertainty in ECS results from sketchy ocean heat uptake data and hypothesized phenomena in climate simulation models that occur after a 300 year period.

Why can’t we get scientific agreement that TCR is the correct metric to use for policy decisions? And while we are at it, why don’t we change TCR to Transient Climate Sensitivity (TCS), a verifiable metric based on the actual slow rise of atmospheric GHG? The most reliable Conservation of Energy based methods for determining TCR, such as in Lewis and Curry (2014), don’t come anywhere close to measuring the temperature increase and radiative forcing increase over a hypothetical 70 year period with radiative forcing increasing at 1 percent/year, almost twice actual levels. Why continue to use the hypothetical definition of TCR to describe a different physical result? It is scientifically embarrassing to me that this important metric, as defined by the IPCC, can only be assessed, in theory, by a climate simulation model that can simulate the hypothetical 1%/yr GHG concentration rise rate.
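The 70 year figure in the TCR definition follows from compounding a 1%/yr concentration rise, which can be checked directly. The sketch below also shows the implied forcing at that point under the standard logarithmic approximation, assuming about 3.7 W/m^2 per doubling (that value and the variable names are assumptions, not taken from the comment):

```python
import math

GROWTH_RATE = 0.01  # 1 %/yr concentration rise, as in the TCR definition
F_2X = 3.7          # W/m^2 per CO2 doubling (assumed value)

# Years needed for the concentration to double at a compound 1 %/yr.
doubling_time = math.log(2) / math.log(1 + GROWTH_RATE)

# Concentration ratio reached after exactly 70 years.
ratio_at_70 = (1 + GROWTH_RATE) ** 70

# Forcing at year 70 if forcing scales with log2 of the concentration ratio.
forcing_at_70 = F_2X * math.log2(ratio_at_70)

print(f"doubling time: {doubling_time:.2f} years")
print(f"concentration ratio after 70 years: {ratio_at_70:.4f}")
print(f"forcing after 70 years: {forcing_at_70:.2f} W/m^2")
```

Because forcing goes roughly with the logarithm of concentration, a constant percentage rise in concentration gives a forcing that climbs by about the same amount each year rather than compounding.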

Still sticking to my previous claims (also based on a Conservation of Energy approach) that TCS = TCR < 1.2K. The main reason for the (<) symbol is that I believe it is most likely that some of the observed warming since 1850 is natural and a continuation of a long term cycle (approx 1000 years) in NH temperatures observed in the paleo record, Ljungqvist (2010), and also responsible for the RWP, MWP and LIA.
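The reasoning behind the (<) symbol can be illustrated with the energy-budget relation as it is commonly written, TCR ≈ F_2x × ΔT / ΔF. The sketch below is not the commenter's calculation: the input numbers, the 3.7 W/m^2 doubling forcing, and the 20% natural share are hypothetical values chosen only to show the direction of the effect.

```python
F_2X = 3.7  # W/m^2 per CO2 doubling (assumed value)

def energy_budget_tcr(delta_t, delta_f, f_2x=F_2X):
    """Transient response estimate from an observed temperature change
    `delta_t` (K) and forcing change `delta_f` (W/m^2)."""
    if delta_f <= 0:
        raise ValueError("delta_f must be positive")
    return f_2x * delta_t / delta_f

observed_warming = 0.7  # K, hypothetical input
forcing_change = 2.0    # W/m^2, hypothetical input

# All of the observed warming attributed to the forcing change.
tcr_all_forced = energy_budget_tcr(observed_warming, forcing_change)

# Same inputs, but with a hypothetical 20% of the warming treated as natural.
natural_fraction = 0.2
tcr_part_natural = energy_budget_tcr(
    observed_warming * (1 - natural_fraction), forcing_change
)

print(f"all forced:   {tcr_all_forced:.2f} K")
print(f"part natural: {tcr_part_natural:.2f} K")
```

Any share of the observed warming assigned to natural causes lowers the forced temperature change in the numerator, and so lowers the estimate by the same proportion.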

TCR more readily allows for comparison with climate models than TCS.

“TCS = TCR < 1.2K”

The CO2 doubling time for observation based TCS can be centuries. Given a slooow rate of forcing increase, TCS might be better used to estimate ECS. Did you really mean to write TCS = TCR?

http://www.actuaries.org/HongKong2012/Papers/WBR9_Walker.pdf

All of the latest research supporting solar/climate connections. Great study.

+1 Thx.

Your tax dollars at work:

It turns out that the models are quite good if you include anthropogenic forcing, but not so much if you don’t, so I would not discount the value in the sensitivities that they provide so easily. This is well known even from AR4.

http://www.climate-skeptic.com/wp-content/uploads/2009/02/ipcc1.gif

Jim D commented

This graph is based on circular logic; it’s nonsense. And that’s assuming the black line is even close to correct.

I’ve always been curious how they can have confidence in their modeling of natural forcings in this diagram.

JimR commented

They can’t; it’s just more circular logic, as they use their model to separate natural vs anthro.

In the same way, they use their model to homogenize and infill the surface temps, and the 4 groups (NOAA, GISS, CRU, and BEST) think that because their results are all about the same, what they’re doing must be right. Yet they are only the same because they all use a variation of the same surface model of temps.