by Judith Curry

My new talk on improving seasonal to interannual climate predictions.

This week, I am attending the Weather Risk Management Conference (WRMA) in Miami.

Utility of climate forecasts for risk mgt

In providing forecasts for the private sector, I’ve come to realize that there is a gap between climate forecast information and the needs of users. Even if the scientific community believes certain information is valuable, users may not. There is also a gap between how users value forecast information and its actual quality.

CFAN has focused its forecast efforts on bridging this gap, by providing predictions of extreme events and objective assessments of forecast confidence.

Ensembles and probabilistic weather forecasting

This figure illustrates the concept of probabilistic weather forecasting, using a global ensemble model prediction system.

For each forecast, the global model produces an ensemble of multiple forecasts, initialized with slightly different conditions. The ECMWF model has an ensemble size of 51 forecasts. A single forecast (say the gold dot) may be rather far away from the actual observed outcome (the red dot). If the ensemble is large enough, meaningful probabilistic forecasts can be provided. The objective of the probability forecast is to bound the observed outcome (the red dot) in a probability space (reflected by the darker blue region) that is much smaller than climatology (the lighter blue region).
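To make the idea concrete, here is a minimal Python sketch (not ECMWF's or CFAN's method) that turns ensemble member values into a raw exceedance probability and compares the width of the ensemble's central 80% range with that of a climatological sample. The member values, the threshold and the 80% coverage are assumptions for illustration; operational systems also calibrate these raw frequencies.

```python
from statistics import quantiles
from typing import Sequence


def exceedance_probability(members: Sequence[float], threshold: float) -> float:
    """Fraction of ensemble members above `threshold` (raw, uncalibrated)."""
    if not members:
        raise ValueError("ensemble must contain at least one member")
    return sum(1 for value in members if value > threshold) / len(members)


def central_80_range(values: Sequence[float]) -> float:
    """Width between the 10th and 90th percentiles of a sample."""
    deciles = quantiles(values, n=10)  # 9 cut points: 10th ... 90th percentile
    return deciles[-1] - deciles[0]


# Hypothetical 51-member forecast of a temperature anomaly (deg C).
ensemble = [0.8 + 0.02 * i for i in range(51)]
# Hypothetical climatological sample of the same quantity.
climatology = [-2.0 + 0.08 * i for i in range(51)]

print(exceedance_probability(ensemble, 1.0))
# A useful forecast bounds the outcome in a much narrower range than climatology.
print(central_80_range(ensemble) < central_80_range(climatology))
```

The comparison in the last line is the point of the figure: the forecast is only informative if its probability range is clearly narrower than the climatological one.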

The actual model prediction is characterized by the green region. The potential predictability of the model is characterized by the dark blue region. This potential predictability can be realized in a prediction through forecast calibration and ensemble interpretation techniques.

Ensembles and probabilistic climate prediction

The challenge for probabilistic climate prediction is that, in order to encompass the observed outcome, the ensemble spread needs to be very large, to the point where it becomes as wide as climatology. The challenge is even greater when climate is changing, such as the slow creep of global warming or an abrupt shift in a climate regime such as the Atlantic Multidecadal Oscillation.

Predictability, prediction and scenarios

For weather prediction timescales of 2 weeks or less, ensemble prediction methods can provide meaningful probabilities. This time horizon is being extended into the subseasonal time frame, potentially out to 6 weeks. However, forecasts beyond two months often show little skill, and probabilistic forecasts can actually mislead decision makers. On seasonal time scales, predictive insights are typically provided, whereby a forecaster integrates the model predictions with an analysis of analogues and perhaps some statistical forecast techniques.

A predictability gap is seen around 1 year, where there is very little predictability. At longer time scales some predictability is recovered, associated with longer-term climate regimes. However, at longer time horizons, predictions become increasingly uncertain.

Possible future scenarios can be enumerated but are not ranked, e.g. because of ambiguity.

A useful long range forecast keeps open the possibility of being wrong or being surprised, associated with abrupt changes or black swan events. Anticipating abrupt changes or black swan events would be most valuable.

The key forecasting challenge is to extend the time horizon for meaningful probability forecasts, and to extend the time horizon for predictive insights of the likelihood of future events.

How can we extend these forecast horizons?

ECMWF ENSO prediction skill

Improvements to global climate models are helping extend the time horizon for meaningful probabilistic forecasts.

This slide compares El Nino Southern Oscillation forecasts from the latest version of the European model with the previous model version. The y-axis is the initialization month, and the x-axis represents the forecast time horizon in months. The colors represent the strength of the correlation between historical forecasts and observations.

The most notable feature of this diagram is the spring predictability barrier. If you initialize a forecast in April, it will rapidly lose skill by July, and the correlation coefficient drops below 0.7 (which is reflected by the white region). However, if the forecast is initialized in July, the forecast skill remains strong for 7 months and beyond.
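Correlation skill of the kind plotted on this slide can be sketched as follows: for each lead time, correlate the hindcasts with the verifying observations and flag whether the correlation reaches 0.7, the threshold mentioned above. This is a minimal sketch assuming the hindcasts and observations are already aligned year by year for each lead month; the dictionary layout and function names are hypothetical, not ECMWF's verification code.

```python
import math
from typing import Dict, Sequence, Tuple


def pearson_r(forecasts: Sequence[float], observations: Sequence[float]) -> float:
    """Pearson correlation between paired forecasts and observations."""
    n = len(forecasts)
    if n != len(observations) or n < 2:
        raise ValueError("need two equal-length series with at least 2 values")
    mean_f = sum(forecasts) / n
    mean_o = sum(observations) / n
    cov = sum((f - mean_f) * (o - mean_o) for f, o in zip(forecasts, observations))
    var_f = sum((f - mean_f) ** 2 for f in forecasts)
    var_o = sum((o - mean_o) ** 2 for o in observations)
    if var_f == 0 or var_o == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_f * var_o)


def skill_by_lead(
    hindcasts: Dict[int, Sequence[float]],
    observations: Dict[int, Sequence[float]],
    threshold: float = 0.7,
) -> Dict[int, Tuple[float, bool]]:
    """Map each lead month to (correlation, whether it reaches `threshold`)."""
    result: Dict[int, Tuple[float, bool]] = {}
    for lead in sorted(hindcasts):
        r = pearson_r(hindcasts[lead], observations[lead])
        result[lead] = (r, r >= threshold)
    return result


# Hypothetical Nino-3.4 hindcasts from one start month, at two lead times.
hindcasts = {1: [0.5, -1.0, 1.8, 0.1, -0.4], 6: [0.2, 0.3, -0.1, 0.4, 0.0]}
observed = {1: [0.6, -1.1, 2.0, 0.0, -0.5], 6: [1.5, -0.8, 2.1, -0.3, 0.4]}
print(skill_by_lead(hindcasts, observed))
```

Repeating this for every initialization month fills in one row per start month of a diagram like the one on the slide.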

The skill for the new version of the ECMWF forecast model is shown on the right, and we see substantial improvement. While the color schemes are slightly different, you can see that the white region, indicating correlation below 0.7, is much smaller, indicating that the model performs much better during the springtime predictability barrier.

The improved skill in Version 5 is attributed to improvements to the ocean model and also to parameterizations of tropical convection.

Climate prediction: signal to noise problem

On timescales beyond a few weeks, the challenge is to identify the predictable components. Predictable components include:

- Any long-term trend

- Regimes and teleconnection patterns

- Any cyclical or seasonal effects

Once you identify and isolate the predictable components, you can ride the wave.

The challenge is to separate the predictable components from the ‘noise’. These include

- Unpredictable chaotic components

- Random weather variability

- Model error

The biggest challenge is regime shifts, particularly when the shifts are triggered by random events. You may recall that in 2014 it really looked like an El Nino wanted to develop. However, its development was thwarted by a random but strong easterly wind outburst in the tropical Pacific. This failed 2014 El Nino set the stage for the super El Nino of 2015/16.

Climate prediction: can we beat climatology?

The big challenge in making a climate prediction is whether you can beat climatology. Forecast skill depends on several things.

The timing of forecast initialization relative to the annual cycle is an important determinant of skill. Also, a forecast initialized during a well-established regime, such as an El Nino, is more skillful.

One of the most important predictive insights that a forecaster can provide is whether the current forecast can beat climatology.
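A standard way to ask whether a forecast beats climatology is a skill score against a climatological reference. The mean-squared-error skill score (MSESS) below is one common choice, used here as an assumption since the talk does not name a specific score. Positive values mean the forecast beats a constant climatological forecast, zero means no improvement, and negative values mean it does worse.

```python
from typing import Sequence


def mean_squared_error(predicted: Sequence[float], observed: Sequence[float]) -> float:
    if len(predicted) != len(observed) or not observed:
        raise ValueError("need two non-empty series of equal length")
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


def mse_skill_score(
    forecasts: Sequence[float], observations: Sequence[float], climatology: float
) -> float:
    """1 - MSE(forecast) / MSE(climatology).

    > 0: the forecast beats a constant climatological forecast.
    = 0: no better than climatology.
    < 0: worse than simply forecasting climatology.
    """
    mse_forecast = mean_squared_error(forecasts, observations)
    mse_climatology = mean_squared_error([climatology] * len(observations), observations)
    if mse_climatology == 0:
        raise ValueError("observations never depart from climatology")
    return 1.0 - mse_forecast / mse_climatology


# Hypothetical seasonal counts: forecasts vs. observed, climatological mean = 11.
print(mse_skill_score([10, 13, 9, 12], [10, 14, 8, 12], climatology=11))
```

In hindcast verification the climatological value would itself be estimated from the training period, which is one reason skill scores depend on which regime the training data cover.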

The forecast windows of opportunity approach identifies windows in time and space when expected forecast skill is higher than usual because of the presence of certain phases of large-scale circulation patterns.

I often use a ‘poker’ analogy when explaining this to energy traders – you need to know whether to ‘hold’ or ‘fold’. In forecasting terms, this is the difference between a forecast with high or low confidence.

Data-driven prediction methods

Statistical forecasts were used for many decades before global climate models were developed, notably for Asian monsoon rainfall. Traditional statistical forecasts have been based on time series or on past analogues. While these methods do include insights from climate dynamics, they have proven to be too simplistic, and there are too few past analogues.

The biggest challenge for statistical forecasts is that they stop working when a regime shifts. You may recall that in 1995 Bill Gray’s statistical hurricane forecast model stopped working when the Atlantic circulation pattern shifted. A more recent example is the use of October snow cover in Siberia as a predictor of wintertime temperatures; this one also stopped working once the climate regime shifted.

Currently, big data analytics is all the rage for weather and climate prediction. IBM’s Watson is an example. Mathematicians and statisticians are applying data mining and artificial intelligence techniques to weather and climate prediction.

Artificial intelligence guru Richard DeVeaux provides the following recipe for success:

Good data + Domain Knowledge + Data Mining + Thinking = Success

The limiting ingredients are domain knowledge and thinking. Without a good knowledge of climate dynamics, fool’s gold will be the fruit of any climate forecast based on data mining.

Seasonal to interannual predictions: predictive insights –> probabilistic forecasts

The way forward in pushing the time horizon further for meaningful probabilistic forecasts is to integrate global climate model forecasts with insights from data-driven forecast methods.

The problem with climate model forecasts is that they invariably revert to climatology after a few months. The problem with data-driven forecasts is that they lack space-time resolution.

These can be integrated by clustering the climate model ensemble members based on predictors from the data-driven forecasts.
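As a rough illustration of the clustering idea, the sketch below groups ensemble members by their value of a single predictor using one-dimensional k-means, then keeps the cluster whose centre is closest to what a data-driven model predicts for that predictor. This is a hypothetical Python sketch, not CFAN's procedure: the `Member` fields, the choice of k-means, k = 2 and the single scalar predictor are all assumptions.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Sequence, Tuple


@dataclass
class Member:
    predictor: float  # member's value of a circulation index (hypothetical)
    target: float     # member's forecast of the target variable


def _assign(values: Sequence[float], centroids: Sequence[float]) -> List[int]:
    """Label each value with the index of its nearest centroid."""
    return [min(range(len(centroids)), key=lambda j: abs(v - centroids[j])) for v in values]


def cluster_members(
    members: Sequence[Member], k: int = 2, iterations: int = 50
) -> Tuple[List[int], List[float]]:
    """One-dimensional k-means on the members' predictor values."""
    if k < 1 or len(members) < k:
        raise ValueError("need k >= 1 and at least k members")
    values = [m.predictor for m in members]
    ordered = sorted(values)
    # Spread the initial centroids across the sorted predictor values.
    if k == 1:
        centroids = [mean(values)]
    else:
        centroids = [ordered[round(i * (len(ordered) - 1) / (k - 1))] for i in range(k)]
    for _ in range(iterations):
        labels = _assign(values, centroids)
        updated = []
        for j in range(k):
            assigned = [v for v, label in zip(values, labels) if label == j]
            updated.append(mean(assigned) if assigned else centroids[j])
        if updated == centroids:  # converged
            break
        centroids = updated
    return _assign(values, centroids), centroids


def conditional_forecast(
    members: Sequence[Member], predicted_index: float, k: int = 2
) -> Tuple[float, int]:
    """Mean target of the cluster nearest the data-driven predictor value,
    together with the number of members in that cluster."""
    labels, centroids = cluster_members(members, k)
    best = min(range(k), key=lambda j: abs(centroids[j] - predicted_index))
    selected = [m.target for m, label in zip(members, labels) if label == best]
    return mean(selected), len(selected)


ensemble = [Member(-2.0, 1.0), Member(-1.8, 1.2), Member(2.0, -1.0), Member(2.2, -0.8)]
print(conditional_forecast(ensemble, predicted_index=1.9))
```

The size of the selected cluster relative to the full ensemble gives a crude sense of how strongly the model itself supports the regime the data-driven method favours.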

Confidence assessment can be made based on the probability of regime shift.

Data-driven forecasts: climate dynamics analysis

In data-driven approaches to climate prediction, it is essential to understand the range of climate regimes that can influence your forecast. These regimes indicate memory in the climate system. The challenge is to identify the appropriate regimes, understand their impact on the target forecast variables, and to predict future shifts in these regimes.

CFAN’s analysis of climate dynamics includes consideration of these 5 time scales and their associated regimes, ranging from the annual cycle to multi-decadal time scales.

Circulation modes: sources of predictability

Our data mining efforts have identified a number of new circulation regimes that are useful as predictors over a range of time scales. These include:

- North Atlantic ARC pattern

- Indo-Pacific-Atlantic pattern

- Northern and southern hemispheric stratospheric vortex anomalies

- Bipolar patterns in the stratosphere

- NAOX: North Atlantic SLP/wind pattern

- Global patterns of north–south winds in the upper troposphere/lower stratosphere

North Atlantic ARC pattern

An intriguing development is underway in the Atlantic. This figure shows sea surface temperature anomalies in the Atlantic for May. You see an arc of cold blue temperature anomalies extending from the equatorial Atlantic, up the coast of Africa and then in an east-west band just south of Greenland and Iceland. This pattern is referred to as the Atlantic ARC pattern.

North Atlantic ARC SST anomalies

A time series of sea surface temperature anomalies in the ARC region since 1880 shows that changes occur in sharp shifts; you can see shifts occurring in 1902, 1926, 1971, and 1995.
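One simple, generic way to locate sharp shifts like these is binary segmentation on the mean: find the split that most reduces the sum of squared deviations from the segment means, then recurse on each side. This is a textbook change-point technique shown as an assumption, not CFAN's shift-detection method; `min_len` and `min_gain` are hypothetical tuning parameters that would have to be chosen for real SST anomaly data.

```python
from statistics import fmean
from typing import List, Optional, Sequence, Tuple


def sum_sq_dev(xs: Sequence[float]) -> float:
    """Sum of squared deviations from the segment mean."""
    if not xs:
        return 0.0
    m = fmean(xs)
    return sum((x - m) ** 2 for x in xs)


def best_split(xs: Sequence[float], min_len: int) -> Tuple[Optional[int], float]:
    """Split index giving the largest drop in squared deviations."""
    total = sum_sq_dev(xs)
    best_idx: Optional[int] = None
    best_gain = 0.0
    # Both segments must contain at least `min_len` points.
    for i in range(min_len, len(xs) - min_len + 1):
        gain = total - sum_sq_dev(xs[:i]) - sum_sq_dev(xs[i:])
        if gain > best_gain:
            best_idx, best_gain = i, gain
    return best_idx, best_gain


def binary_segmentation(
    xs: Sequence[float], min_len: int = 10, min_gain: float = 1.0, offset: int = 0
) -> List[int]:
    """Indices (into the original series) where the mean shifts."""
    idx, gain = best_split(xs, min_len)
    if idx is None or gain < min_gain:
        return []
    left = binary_segmentation(xs[:idx], min_len, min_gain, offset)
    right = binary_segmentation(xs[idx:], min_len, min_gain, offset + idx)
    return left + [offset + idx] + right


# Hypothetical annual anomalies starting in 1880 (not real ARC data).
series = [0.0] * 20 + [0.5] * 20 + [-0.2] * 20
print([1880 + i for i in binary_segmentation(series, min_len=5, min_gain=0.5)])
```

A method like this only describes shifts after the fact; anticipating the next one is what requires the precursor analysis mentioned below.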

On the bottom graph, you see that the ARC temperatures show a precipitous drop over the past few months. Is this just a cool anomaly, similar to 2002? Or does this portend a shift to the cool phase?

CFAN’s research has identified precursors to the shifts, and we are actively assessing the current situation.

Atlantic AMO cool phase: impacts

A shift to the cool phase of the Atlantic Multidecadal Oscillation is expected to have profound impacts, based on past shifts:

- diminished Atlantic hurricane activity

- increased U.S. rainfall

- decreased rainfall over India and Sahel

- shift in north Atlantic fish stocks

- acceleration of sea level rise on NE U.S. coast

AMO impacts on Atlantic hurricanes

The Atlantic Multidecadal Oscillation has a substantial impact on Atlantic hurricanes. The top figure shows the time series of the number of major hurricanes since 1920. The warm phases of the AMO are shaded in yellow. You see substantially higher numbers of major hurricanes during the periods shaded in yellow.

A similar effect of the AMO is seen on the Accumulated Cyclone Energy.
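ACE is conventionally computed by summing the squares of a storm's 6-hourly maximum sustained wind speeds (in knots) while it is at tropical-storm strength or stronger, and scaling by 10^-4; a season's ACE is the sum over all storms. A minimal Python sketch follows. The 34 kt threshold is an assumption (it is the usual tropical-storm cutoff), and the sketch ignores the storm-status checks (for example, excluding extratropical stages) that official tallies apply.

```python
from typing import Iterable, Sequence


def accumulated_cyclone_energy(vmax_6h_kt: Iterable[float], threshold_kt: float = 34.0) -> float:
    """ACE (units of 10^4 kt^2) from one storm's 6-hourly maximum winds."""
    return 1e-4 * sum(v * v for v in vmax_6h_kt if v >= threshold_kt)


def season_ace(storms: Sequence[Iterable[float]], threshold_kt: float = 34.0) -> float:
    """Seasonal ACE: the sum of the ACE of every storm in the season."""
    return sum(accumulated_cyclone_energy(s, threshold_kt) for s in storms)


# Hypothetical season with two short-lived storms (6-hourly winds in knots).
print(season_ace([[40.0, 65.0, 100.0, 70.0], [30.0, 45.0, 50.0]]))
```

Because winds enter squared, a few long-lived intense hurricanes dominate the seasonal total, which is why ACE tracks major-hurricane activity closely.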

AMO impacts on U.S. landfalling hurricanes

By contrast, the warm versus cool phases of the AMO have little impact on the frequency of U.S. landfalling hurricanes.

AMO impacts on FL hurricanes

However, the phase of the Atlantic Multidecadal Oscillation has a huge impact on Florida. During the previous cool phase, no season had more than 1 Florida landfall, while during the warm phase there have been multiple years with as many as three landfalls. A major hurricane striking Florida is more than twice as likely during the warm phase relative to the cool phase.

These variations in Florida landfalls associated with changes in the AMO have had a substantial impact on development in Florida. The spate of hurricanes starting in 1926 killed the economic boom that started in 1920. Population and development accelerated in the 1970s, aided by a period of low hurricane activity.

CFAN’s seasonal forecasts of Atlantic hurricanes

CFAN’s approach examines global and regional interactions among ocean, tropospheric and stratospheric circulations. Precursor patterns are identified through data mining, interpreted in the context of climate dynamics analysis, and then subjected to statistical tests in hindcasts.

We consider three periods in our analysis, defined by current circulation regimes:

- The period since 1995, corresponding to the warm phase of the Atlantic Multidecadal Oscillation

- The period since 2002, defined by a 2001 shift in the North Pacific Ocean circulation patterns

- The period since 2008, characterized by a predominance of El Nino events, occurring every 3 years.

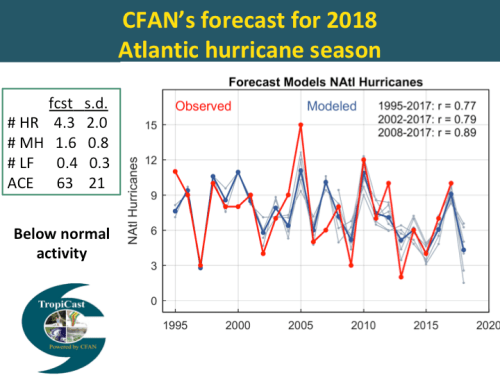

CFAN’s forecast for the 2018 Atlantic hurricane season

Last week, we issued our third forecast for the 2018 Atlantic hurricane season. We are predicting below normal activity, with an ACE value of 63 and 4 hurricanes.

The figure on the right shows hindcast verification for our June forecast model for the number of hurricanes. The forecast is developed using historical data for 3 different periods, corresponding to the 3 regimes described earlier.

CFAN’s predictors for seasonal Atlantic hurricane forecast

CFAN’s seasonal predictors are identified for each forecast using a data mining approach, with different predictors used for each lead time. The candidate predictors are then subjected to a climate dynamics analysis to verify that the predictors make sense from a mechanistic point of view.

The predictors that we use are predominantly related to atmospheric circulation patterns. Note that our predictors differ substantially from those of other groups providing statistical forecasts, who rely primarily on sea surface temperature and sea level pressure predictors.

2017 seasonal forecast

Here is the forecast we made a year ago for the 2017 Atlantic hurricane season. We predicted an active season with 3 U.S. landfalls. We got the number of U.S. landfalls correct, but we substantially underestimated the Accumulated Cyclone Energy, or ACE.

From the perspective of the financial sector, the key issue is whether we will see another extremely active hurricane season and how that will translate into U.S. landfalls.

Atlantic hurricane forecast: worst case scenario

We conducted a data mining exercise to identify patterns that explained the extremely active seasons in 1995, 2004, 2005 and 2017. Our current forecast model does capture the extremes in 1995 and 2017, but not 2004 and 2005.

The only predictor that popped up for 2004/2005 is a pattern in the stratosphere near Antarctica. At this point we have no idea whether this pattern could provide a plausible, physically based predictor for Atlantic hurricane activity. The disturbing thing, though, is that the polar stratosphere predictors predict an extremely active 2018 season, in contrast to the other predictors we are using.

So at this point we don’t know whether we have unearthed a diamond or fool’s gold. In any event, this is a good example of both the promise and the perils of data mining.

Congratulations for going well beyond the usual climate talking points to science. I’ll be back.

The lower end of the color code of the right hand forecast skill graphic is blue btw.

If you want to see how government corrupts the process of learning, you need go no further than a study in Environmental Research Letters (“Are cold winters in Europe associated with low solar activity?”), in which the authors concluded as follows:

I thought this was a good example of erstwhile authors being forced to dissemble and dance around the idea that perhaps the sun might play a role on local weather and maybe even some bearing on regional weather but certainly not across an entire hemisphere, let alone globally.

This was a key study for me. Low solar activity causing cold winters in England? The question is how. The answer emerges from the polar region with modulation of surface pressure. High pressure changes wind patterns from more zonal (east/west) to more meridional (north/south), and meridional patterns allow greater penetration of cold and stormy polar conditions into lower latitudes. It has significant implications for northern higher latitude temperature – and the central England temperature record is perfectly placed to observe this pattern.

https://watertechbyrie.files.wordpress.com/2017/01/nao_fig_4-e1520191198670.jpg

And we can see how the cumulative NAO – the Atlantic expression of the Arctic Oscillation – projects onto both the AMO and AMOC. Both the latter are the result of altered heat transport – patterns of ocean circulation – caused by changes in zonal or meridional winds and not primary causal agents themselves.

https://watertechbyrie.files.wordpress.com/2014/06/smeed-fig-72-e1518284539722.png

Solar activity is thought to project as a factor onto polar surface pressure through solar UV/ozone interaction in the middle atmosphere, translating through atmospheric pathways to polar regions, based on the extended hypotheses of process-level computer models. So I would not be surprised if there were stratospheric signals – although I can’t ‘unearth’ it from the information provided.

e.g. https://www.nature.com/articles/ncomms8535

These zonal and meridional patterns at both poles spin up less or more sub-polar ocean gyres, with implications for NH temperature and hydrology – and for upwelling in the eastern Pacific in both hemispheres – with implications for global temperature and hydrology. Google ‘decadal wind and gyre’ if you are interested. No surprise either that the “Decadal Patterns of Westerly Winds, Temperatures, Ocean Gyre Circulations and Fish Abundance” are the familiar 20 to 30 year regimes of 20th-century climate, hydrology and biology records. There are profound implications of prospective lower solar activity this century for global temperatures, hydrology and biology.

e.g. https://www.semanticscholar.org/paper/Decadal-Patterns-of-Westerly-Winds%2C-Temperatures%2C-A-Oviatt-Smith/2dcd12c6742b11cd277d4a75d9fb75d252402451

It fits the conceptual model of these mechanisms as stochastically forced resonant internal responses. Something I have seen described as Lorenzian forcing – defined as a small change triggering a large internal response. The 20 to 30 year patterns – occurring in the context of millennial variability – it has been suggested – least of all by me – are related to the Hale Cycle of solar-magnetic reversal. Projecting onto part of the polar surface signals, UV changes in the Hale Cycle bias the globally coupled system to specific outcomes. Cumulative values of the AO and AAO suggest solar Lorenzian forcing of the system – but this is still unpredictable. Another Russian doll within a Russian doll.

New ENSO models are coarse scale climate models providing boundary conditions with nested finer scale models in specific regions. This is conceptually the way to do it – with probabilities derived from multiple runs . I have done similar things with 3-D ocean models – although the objective there was typically to derive parameters for diffusion modeling. ENSO models do well once patterns have emerged and far less so at this time of year when patterns are changing. That has not changed and will not until the fundamental mechanisms of ENSO are better defined.


… the primary causal agents being, more/less clouds?

The Pacific is the largest source of cloud variability.

https://watertechbyrie.files.wordpress.com/2018/03/clement-figure-3-e1523509236648.jpg

It seems at least in part a coupled ocean/atmosphere mechanism involving Rayleigh-Bénard convection in a fluid heated from below.

“Marine stratocumulus cloud decks forming over dark, subtropical oceans are regarded as the reflectors of the atmosphere.1 The decks of low clouds 1000s of km in scale reflect back to space a significant portion of the direct solar radiation and therefore dramatically increase the local albedo of areas otherwise characterized by dark oceans below.2,3 This cloud system has been shown to have two stable states: open and closed cells. Closed cell cloud systems have high cloud fraction and are usually shallower, while open cells have low cloud fraction and form thicker clouds mostly over the convective cell walls and therefore have a smaller domain average albedo.4–6 Closed cells tend to be associated with the eastern part of the subtropical oceans, forming over cold water (upwelling areas) and within a low, stable atmospheric marine boundary layer (MBL), while open cells tend to form over warmer water with a deeper MBL.” https://aip.scitation.org/doi/pdf/10.1063/1.4973593

I misunderstood the question. What causes the AMO? Multi-decadal variability in the Pacific is defined as the Interdecadal Pacific Oscillation (e.g. Folland et al, 2002, Meinke et al, 2005, Parker et al, 2007, Power et al, 1999) – a proliferation of oscillations it seems. The latest Pacific Ocean climate shift in 1998/2001 is linked to increased flow in the north (Di Lorenzo et al, 2008) and the south (Roemmich et al, 2007, Qiu, Bo et al 2006) Pacific Ocean gyres. Roemmich et al (2007) suggest that mid-latitude gyres in all of the world’s oceans are influenced by decadal variability in the Southern and Northern Annular Modes (SAM and NAM respectively) as wind driven currents in baroclinic oceans (Sverdrup, 1947).

e.g. https://eos.org/research-spotlights/ocean-dynamics-may-drive-north-atlantic-temperature-anomalies

Reblogged this on Climate Collections.

“For weather prediction timescales of 2 weeks or less, ensemble prediction methods can provide meaningful probabilities.”

You cannot predict a black swan but black swans occur nonetheless.

I am amused to see an attempt to predict a black swan in the envelope.

If it is a black swan it is by definition outside the envelope.

All you have is a prediction of an extremely unlikely event .

Which is something quite different, and predictable.

“This potential [un]predictability can be realized in a prediction through forecast calibration and ensemble interpretation techniques.”

Except it cannot.

You give a model with a prediction and then change it to another model based on these values.

All you have is a new model with an unknown further prediction based on whatever went wrong with the current reinterpretation.

Don’t get me wrong. I am a big fan. As the chiefio and chaos suggest the weather is prone to the occasional surprise. Best to give a block quote and qualify with a subject to updates approach.

Better to try and fail than never to try at all and most of the time you will be right. Just remember those Italian scientists before offering too strong a guarantee.

On the North Atlantic ARC, the latest 7-day change:

https://i.imgur.com/KOUTsfr.png

Good presentation JC.

Now, this is somewhat OT, but still about the predictability of global temperature indices (and riding the wave).

http://www.woodfortrees.org/graph/hadcrut4gl/mean:240/mean:179/mean:134/derivative/scale:120/plot/hadcrut4gl/mean:300/mean:224/mean:168/derivative/scale:120/plot/hadcrut4gl/mean:360/mean:269/mean:201/derivative/scale:120/plot/hadcrut4gl/mean:420/mean:314/mean:235/derivative/scale:120

The hadcrut4 global index is low-pass filtered using triple running means of various cutoff frequencies (wavelengths of 20, 25, 30 and 35 years). Derivatives are used and scaled to show the slope (trend) of the low-pass filtered temperature indices in °C/decade.
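The filtering described in this comment can be sketched in Python as three successive running means followed by a month-to-month difference scaled by 120 (months per decade). This assumes the woodfortrees derivative is a per-month difference, as the comment's scale:120 implies; the trailing-window alignment and function names are my own choices, and the sketch is not a reproduction of woodfortrees internals. Loading the HadCRUT4 monthly series is left to the reader.

```python
from typing import List, Sequence


def running_mean(xs: Sequence[float], window: int) -> List[float]:
    """Trailing running mean; the output has len(xs) - window + 1 points."""
    if window < 1 or window > len(xs):
        raise ValueError("window must be between 1 and len(xs)")
    total = sum(xs[:window])
    out = [total / window]
    for i in range(window, len(xs)):
        total += xs[i] - xs[i - window]  # slide the window by one sample
        out.append(total / window)
    return out


def triple_running_mean(xs: Sequence[float], windows: Sequence[int]) -> List[float]:
    """Apply successive running means, e.g. 240, 179 and 134 months."""
    smoothed = list(xs)
    for w in windows:
        smoothed = running_mean(smoothed, w)
    return smoothed


def trend_per_decade(
    monthly: Sequence[float], windows: Sequence[int] = (240, 179, 134)
) -> List[float]:
    """Month-to-month slope of the smoothed series, scaled to deg C per decade."""
    smoothed = triple_running_mean(monthly, windows)
    return [(later - earlier) * 120 for earlier, later in zip(smoothed, smoothed[1:])]


# Synthetic check: a steady rise of 0.001 deg C per month is 0.12 deg C per decade.
synthetic = [0.001 * i for i in range(600)]
print(min(trend_per_decade(synthetic)), max(trend_per_decade(synthetic)))
```

Each pass shortens the series, so a 240/179/134 cascade consumes about 45 years of a monthly record before any trend value appears.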

Is it not easy to (roughly) predict what happens next?

Yes, climate is a very complex and multi-factorial system – so testing the longer-term (multi-year & multi-decadal) possibilities takes a lot of effort and extended time frames – so let’s roll up our sleeves and chip away.

Further, the investigative groups really need to work together and help one another.

Reblogged this on I Didn't Ask To Be a Blog.

I like the conceptual framework in light of the last example. Sort of a formalized version of what Joe Bastardi does in his head from long experience.

One reason why Florida’s population grew very fast between 1960 and 1980 was the large flow of Cuban refugees. In my family’s case my mother and sister arrived in 1968 and moved to Fort Lauderdale. I was living with a family which had taken me in in the NY suburbs, and moved to Florida the summer of 68. My grandparents escaped later that year, and my dad escaped 10 years later in 1978. I also read we had a significant influence on the development of south Florida as a trading hub because we were mostly middle class, spoke Spanish and came from a trader culture.

One thing we noted was that housing built in the 1950s was much better built than later housing. Being aware of how hurricanes destroy buildings we tended to buy older houses with cement block walls and solid tiled roofs. That type of house will last forever.

That’s interesting. I’ve noticed as well that houses built in the thirties, forties and fifties are built to last.

Charles Krauthammer… just 5 days to live– he sure took charge of the endgame! Always thoughtful, it’s no surprise he nailed the human-caused CO2-global warming alarmist debate: “…scientists who pretend to know exactly what this will cause in 20, 30 or 50 years are white-coated propagandists.” (Washington Post, Feb 20, 2014)

This is slightly off subject but still relevant. There have been two significant volcanic eruptions this year, one in Hawaii and one in Guatemala. I’ve tried to find out how much ash was spewed into the air, to no avail. Does anyone have information on this? Will these volcanoes affect the climate, or are they spewing too little to make a difference?

Oh, never mind, I found my answer in a new Newsweek report. They may have a very small cooling effect. They are considered small volcanoes.

What does CFAN predict exactly? I know hurricanes and typhoons. Does it predict, for instance, drought in the SW? A wet regime in Europe? I’m guessing along the lines of wet/cold climate vs. hot/dry climate? Colder winters vs. hotter summers, or vice versa? More or less snow and rain?

To my knowledge IBM’s Watson just answers simple questions, using text analysis. People are touting neural nets, since these are basically analog-like pattern recognition machines and weather has patterns. I am not optimistic about either approach.

Judy

Z10, I see the red blob and the scale but no description. What is it: temperature? blocking pressure?

The period is MAM. Does that set the Atlantic up as the predominant cyclone basin, or set the intensity?

2005 and 2018 have one thing in common so far, both started with an East Pacific hurricane.

Researching Z10 on a small cell phone is not easy.

Regards

Thank you. Well done.

On the bottom graph, you see that the ARC temperatures show a precipitous drop over the past few months. Is this just a cool anomaly, similar to 2002? Or does this portend a shift to cool phase?

Good question, and one of several examples for specific reasons for uncertainty in specific situations (not vague appeals a la “ignatology”). There are in fact competing predictions, some presented with great confidence, are there not?

oops: “agnatology” in place of “ignatology”.

Agnotology relates to cognitive bias and not scientific uncertainty. There is a great deal of both.

Have we unearthed a diamond or a fool’s gold?

“Predicting” the past is curve fitting. – Dr. Strangelove

“Prediction is very difficult, especially if it’s about the future.” – Niels Bohr

“With four parameters, I can fit an elephant, and with five I can make him wiggle his trunk.” – John von Neumann

Von Neumann’s elephant with five complex parameters. His trunk really wiggles!

http://2.bp.blogspot.com/-CkKUPo04Zw0/VNyeHnv0zuI/AAAAAAAABq8/2BiVrFHTO2Q/s1600/Untitled.jpg

On the limits of predictability, evidently GFS predicts a tropical cyclone forming and striking South Texas next weekend:

Verification awaits:

http://ready.arl.noaa.gov/ready2-bin/jmovie.pl?id=GFS&mdl=grads/gfs&file=panel1&nplts=41

Hmmmm….

So much for predictability.

Latest GFS has no such TC at all.

Just a slight depression, which is what I’m afraid I’m coming down with.

I predict that for every successive decade, the land and oceans will warm, and CO2 will rise even if solar irradiation is lower. I have predicted this every decade for the past three.

This is too easy. I wonder how many people still think that CO2 is not the primary forcing.

The combination of AGW and internal variation produced an incremental rate of warming in the latter part of the 20th century of 0.1K/decade. Not in itself an existential threat. And one that may diminish this century with a 7% reduction in solar UV possible. This would translate into more negative polar annular modes, more north/south blocking patterns and substantial Northern Hemisphere (NH) cooling – this NH winter may be a taste of things to come – and enhanced upwelling in the eastern Pacific (Oviatt et al 2015) – bringing global cooling. But chaos introduces an intractable uncertainty that precludes any simple prognostication. The place to look for uncertainty is in the deepwater formation zones of the north Atlantic that are implicated in abrupt and catastrophic change over the last 800,000 years.

https://www.facebook.com/Australian.Iriai/posts/1608494905933432

Notwithstanding simple and inherently silly climate memes.

“even if solar irradiation is lower”

Are you writing about net solar irradiation? or just incoming?

Net has not been measured very long and still isn’t measured very well.

But it appears that, for the period of record (CERES), net solar irradiation has been higher (because albedo has decreased), not lower:

https://turbulenteddies.files.wordpress.com/2018/05/albedo_comparison_2017.png

So little cloud feedback – so much measured change. The earlier segment of the graph is completely inconsistent with ERBE and ISCCP.

I doubt that the land and oceans have warmed much, we really do not know as the statistics are completely unreliable. But if they have warmed it is not due to CO2, because the atmosphere has only warmed a small amount, and that was due to the giant ENSO cycle. See my http://www.cfact.org/2018/01/02/no-co2-warming-for-the-last-40-years/. Increasing GHGs such as CO2 can only warm the surface by first warming the atmosphere, where they act. This has not happened.

“I doubt that the land and oceans have warmed much, we really do not know as the statistics are completely unreliable.”

The records of land and ocean temperature allow us to create spatial PREDICTIONS of the temperature where there are no measurements.

There are two ways we test the reliability of these predictions.

1. Hold-out testing: using a small, randomly selected set of measurements (1,000, 5000, ), we build the prediction. Then we test the prediction using the held-out measurements. The predictions work. In an extreme case, for example, you could use CET to predict the entire world and it would do pretty well (with a larger uncertainty, of course). The reason this works is that 90+% of the variance in monthly average temperature is determined by altitude and latitude. This is confirmed, independently, by satellite observations of (nearly) the entire globe. Since so much of the variance is determined by these two factors, as long as your sample contains a good distribution of latitude samples and elevation samples, the spatial prediction will work. The hardest areas to predict are the poles, since direct observations are sparse. Luckily the poles comprise a small area, so error due to spatial sampling is negligible, but interesting nonetheless.

2. Data recovery efforts. There are millions of historical records that have not been digitised. As data recovery efforts complete their remit, they add these “new” old records to the database. In the past, for example, we had no digital records for Ben Nevis. Nevertheless, the “averaging” techniques predicted, through interpolation, what temperature would have been recorded there. Next, the written records are recovered and digitised. These digital records can then be compared to what we predicted. One such digitisation effort is reported here:

https://www.bbc.com/news/science-environment-41166778

There are a number of ongoing projects to recover old data. What I can tell you is that the “new” old data demonstrate that the spatial predictions work. Using sparse networks, we can predict the data in places where there are “no” measurements. I put “no” in quotes because in some cases we “hold out” the data to test the model, and in other cases there is data, but it hasn't been turned into digital records.

We know the temperature pretty dang well. The reason for that is that 90+% of the variance is strictly determined by geography.
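The hold-out test described above can be sketched in a few lines. This is a minimal illustration, not the method used by any real temperature product (those use more elaborate spatial interpolation such as kriging). It assumes a simple linear model of monthly-mean temperature on absolute latitude and altitude. The stations are synthetic, and the functions `fit_linear`, `make_stations` and `holdout_r2`, along with the coefficients and noise level, are all hypothetical. The model is fitted on a random subset and scored on the stations that were held out.

```python
import random


def fit_linear(X, y):
    """Ordinary least squares: solve the normal equations (X^T X) b = X^T y
    by Gauss-Jordan elimination (stdlib only; fine for a few predictors)."""
    k = len(X[0])
    # Augmented matrix [X^T X | X^T y]
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * t for r, t in zip(X, y))] for i in range(k)]
    for col in range(k):
        # Partial pivoting for numerical stability
        pivot = max(range(col, k), key=lambda i: abs(A[i][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for row in range(k):
            if row != col:
                factor = A[row][col] / A[col][col]
                A[row] = [a - factor * c for a, c in zip(A[row], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


def features(station):
    """Predictors: intercept, absolute latitude (degrees), altitude (km)."""
    lat, alt_km, _ = station
    return [1.0, abs(lat), alt_km]


def make_stations(n, seed=0):
    """Hypothetical synthetic stations, NOT real data: temperature falls with
    |latitude| and with altitude (about 6.5 C/km), plus local noise."""
    rng = random.Random(seed)
    stations = []
    for _ in range(n):
        lat = rng.uniform(-70.0, 70.0)
        alt_km = rng.uniform(0.0, 3.0)
        temp = 28.0 - 0.45 * abs(lat) - 6.5 * alt_km + rng.gauss(0.0, 1.5)
        stations.append((lat, alt_km, temp))
    return stations


def holdout_r2(stations, holdout_frac=0.2, seed=1):
    """Fit on a random subset, then score on the held-out stations.
    Returns (R^2 on the held-out data, fitted coefficients)."""
    rng = random.Random(seed)
    shuffled = list(stations)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * holdout_frac)
    test, train = shuffled[:n_test], shuffled[n_test:]
    coef = fit_linear([features(s) for s in train], [s[2] for s in train])
    preds = [sum(c * f for c, f in zip(coef, features(s))) for s in test]
    actual = [s[2] for s in test]
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, preds))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot, coef


if __name__ == "__main__":
    r2, coef = holdout_r2(make_stations(1000))
    print(f"held-out R^2 = {r2:.3f}; coefficients = {[round(c, 2) for c in coef]}")
```

The held-out R^2 is the share of variance in the unseen stations that the two geographic predictors explain. On this synthetic field it comes out high by construction, so it illustrates the test itself and says nothing about real-world skill.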

https://www.facebook.com/Australian.Iriai/posts/1712078145575107

The total precipitable water graph (the last one in the Daily Graphs section of the Arctic Sea Ice blog) has held my interest for a year. You can see hurricanes forming out near Africa a week or more before they near the USA.

It currently shows a very large amount of water about to hit (or hitting) Japan. Expect a big news item [if you are in Japan]. Or will it be Trump meeting Kim?

Nonetheless, I could see the swirl building up 2 weeks ago that gave us 16 ml in country Victoria, Australia, 2 days ago.

The thing about these patterns, though, is how unpredictable they are, even though they give the semblance of predictability.

Four to six plumes of water extend in either direction from the Equator, like giant fan blades of asynchronous and asymmetric length and anachronistic timing.

A hurricane will strike a coast near the same area every 30 years on average, but which year, and which stretch of coast?
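The "every 30 years on average" point can be made concrete with a return-period calculation. This is a sketch that assumes each year is an independent trial with a strike probability of 1/30. That is a simplification real hurricane risk models do not make, and the function name is hypothetical. Under these assumptions the chance of a strike is only about 3% in any one year, and only roughly 64% over a full 30-year span.

```python
def prob_at_least_one(return_period_years: float, window_years: int) -> float:
    """Chance of at least one strike within a window, treating each year as an
    independent trial with probability 1 / return_period (simplifying assumption)."""
    annual_p = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_p) ** window_years


if __name__ == "__main__":
    for window in (1, 10, 30):
        print(f"{window:>2}-year window: {prob_at_least_one(30, window):.1%}")
```

Even a well-known return period leaves the timing of any single strike highly uncertain, which is the risk-assessment difficulty raised above.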

Good luck with the predictions/assessments of risk.

Judy

From your post: “you may recall that in 2015 it looked like El Nino wanted to develop. Strong easterly outburst in the tropical Pacific”

The El Nino did occur in the second six months of the year. Using the traditional method of recording the 2 meter anomaly, NCEP CFSR/CFSv2 identified a July peak of 0.25 and a progressive rise in peaks through to 0.8 in December.

Importantly, on El Nino's home turf, the Pacific hurricane season was the second most active since 1992: eleven of the sixteen hurricanes became major hurricanes, ACE was the second highest at 286 units, and the season ran unusually late, among other records.

It looks like the ocean heat release got dealt to on its own doorstep, limiting the traditional impacts on anomalies.

Would this be a fair assumption?

Regards

https://en.m.wikipedia.org/wiki/2015_Pacific_hurricane_season

I forgot to include: three Category 4 (H4) hurricanes were active simultaneously.

@judith curry

Dear Judith, would you please let me know where I could find the original figure (or figures) about house density in 1940 vs 2017?

The resolution of your figure is not very good; I can't read the legends clearly.

I think it is a very good case/data to cite whenever warmists pull out one of their favorite rants, about “the terrible cost of GW”…

Thanks in advance, and good luck with your talk.

R.

“The global coupled atmosphere-ocean-land-cryosphere system exhibits a wide range of physical and dynamical phenomena with associated physical, biological, and chemical feedbacks that collectively result in a continuum of temporal and spatial variability. The traditional boundaries between weather and climate are, therefore, somewhat artificial. The large-scale climate, for instance, determines the environment for microscale (1 km or less) and mesoscale (from several kilometers to several hundred kilometers) processes that govern weather and local climate, and these small-scale processes likely have significant impacts on the evolution of the large-scale circulation (Fig. 1; derived from Meehl et al. 2001).

The accurate representation of this continuum of variability in numerical models is, consequently, a challenging but essential goal. Fundamental barriers to advancing weather and climate prediction on time scales from days to years, as well as longstanding systematic errors in weather and climate models, are partly attributable to our limited understanding of and capability for simulating the complex, multiscale interactions intrinsic to atmospheric, oceanic, and cryospheric fluid motions.” https://journals.ametsoc.org/doi/10.1175/2009BAMS2752.1

https://i0.wp.com/watertechbyrie.files.wordpress.com/2014/06/hurrell.png

Probabilistic forecasts from nested, initialized, multi-scale models may enable us to get beyond ENSO.

Talking of ENSO – what happened to that El Nino some of us were predicting?

http://www.ospo.noaa.gov/Products/ocean/sst/anomaly/

The UNISYS Pacific SST map is AWOL – clearly a good day for hiding bad news

http://weather.unisys.com/surface/sst_anom_new.gif


“I would rather have questions that can’t be answered than answers that can’t be questioned.”

― Richard Feynman

For the greenhouse theory to operate as advertised, it requires a GHG up/down/“back” LWIR energy loop to “trap” energy and “warm” the earth and atmosphere.

For the GHG up/down/“back” radiation energy loop to operate as advertised, the surface must emit LWIR of 396 W/m^2 as an ideal black body, i.e. with an emissivity of 1.0 (K-T diagram).

The surface cannot do that because of a contiguous participating medium, i.e. atmospheric molecules, which moves over 50% ((17+80)/160) of the surface heat through non-radiative processes, i.e. conduction, convection, and latent evaporation/condensation (K-T diagram). Because of the contiguous turbulent non-radiative processes at the air interface, the oceans cannot have an emissivity of 0.97.
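For readers checking the numbers, the K-T figures quoted above work out as follows. This is a worked sketch. σ is the Stefan-Boltzmann constant, about 5.67 × 10^-8 W m^-2 K^-4. The surface temperature and the ε = 0.97 flux are derived here from the quoted 396 W/m^2 rather than stated in the comment.

```latex
\frac{17 + 80}{160} = \frac{97}{160} \approx 0.61

F = \varepsilon \sigma T^4
\quad\Rightarrow\quad
T = \left( \frac{396\ \mathrm{W\,m^{-2}}}{1.0 \times 5.67 \times 10^{-8}\ \mathrm{W\,m^{-2}\,K^{-4}}} \right)^{1/4} \approx 289\ \mathrm{K}

% same temperature, emissivity 0.97
F_{\varepsilon = 0.97} = 0.97 \times 396\ \mathrm{W\,m^{-2}} \approx 384\ \mathrm{W\,m^{-2}}
```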

No GHG energy loop & no greenhouse effect means no CO2/man caused climate change and no Gorebal warming.

https://www.linkedin.com/feed/update/urn:li:activity:6394226874976919552

http://www.writerbeat.com/articles/21036-S-B-amp-GHG-amp-LWIR-amp-RGHE-amp-CAGW